Anthropic has developed what may be the most capable vulnerability detection model ever built. They're calling it Mythos, and instead of releasing it to the public, they're doing something unusual: keeping it under lock and key while partnering directly with critical infrastructure providers to put it to work.

The reasoning is straightforward. A model that excels at finding vulnerabilities in code is, by definition, a model that excels at finding things to exploit. In the wrong hands, Mythos could accelerate the discovery of zero-days in banking systems, power grids, medical devices, and the kind of software that keeps modern civilization functioning. Anthropic looked at that reality and made a call that few AI companies have been willing to make: capability alone isn't sufficient justification for deployment.

Project Glasswing

The initiative Anthropic is launching alongside this announcement is called Project Glasswing. Rather than democratizing access to Mythos, the company is forming partnerships with major software providers whose systems underpin critical infrastructure. These partners will receive controlled access to the model, allowing them to audit their own codebases for vulnerabilities before attackers can find them.

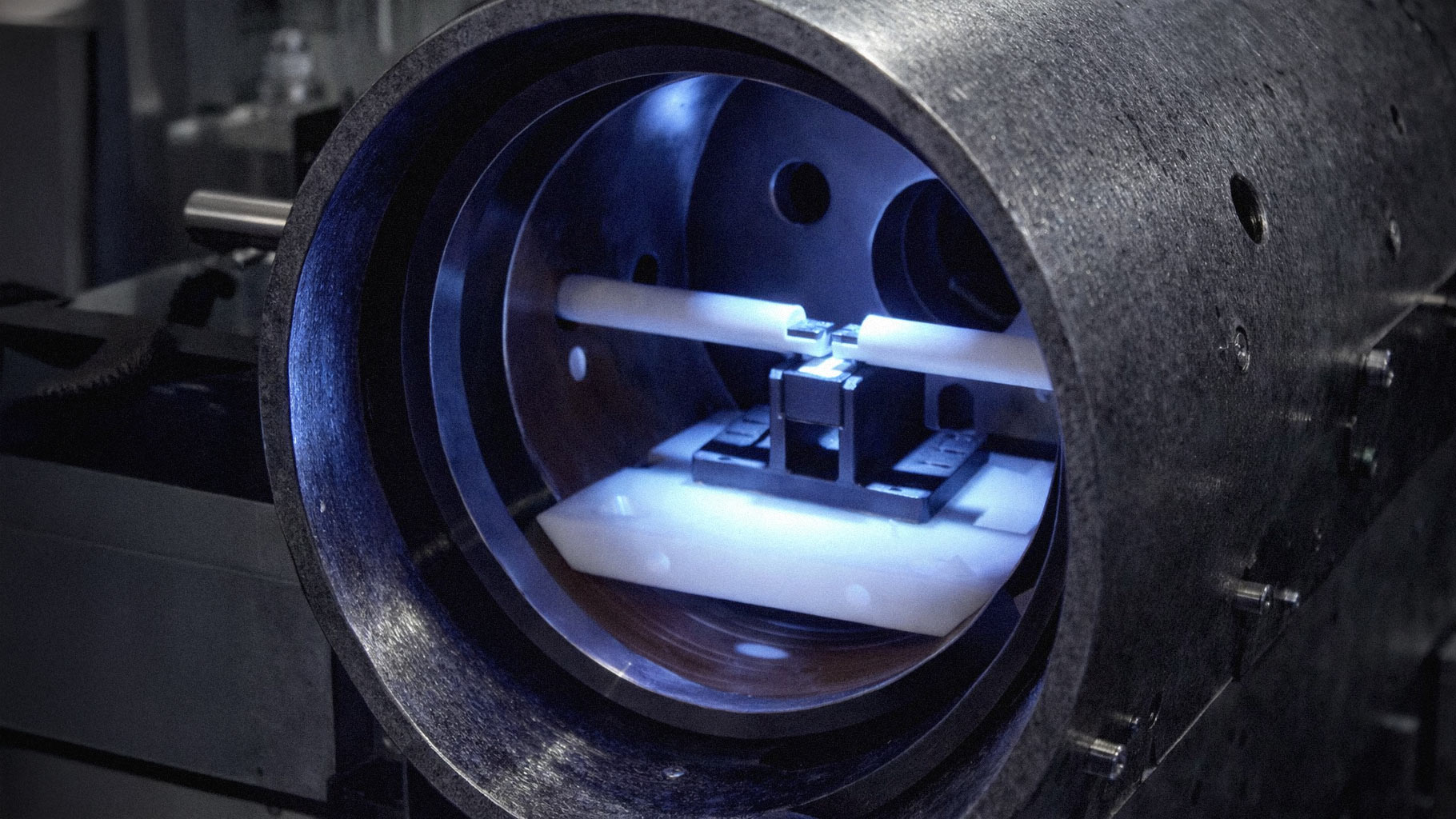

The list of initial partners hasn't been fully disclosed, but Anthropic has indicated that healthcare systems, financial institutions, and energy sector software vendors are among the first wave. The model will operate within sandboxed environments, with Anthropic maintaining oversight of how it's deployed and what it's used to analyze.

Why This Matters

The AI industry has spent years operating under a tacit assumption: if a capability exists, it should be released. The competitive pressure to ship has overridden caution more times than anyone wants to count. OpenAI, Google, Meta, and others have all faced criticism for releasing systems whose risks weren't fully understood. Anthropic itself has emphasized responsible deployment in the past, but Glasswing represents something more concrete than rhetoric.

This is Anthropic putting real constraints on a commercially valuable asset. Mythos could generate significant revenue if offered as a service to developers or security firms. Instead, the company is absorbing the opportunity cost in exchange for a more controlled rollout. That's not nothing.

The security stakes are high. Software vulnerabilities remain one of the primary vectors for cyberattacks, and the asymmetry between attackers and defenders has been widening. Offensive security research has benefited enormously from AI-assisted tooling, while defensive teams struggle to keep pace. A model like Mythos, deployed strategically on the defensive side, could begin to rebalance that equation.

The Precedent

What Anthropic is doing here sets a precedent that other AI labs will have to reckon with. The next time a frontier lab develops a capability with obvious dual-use potential, Glasswing will be the benchmark against which their response is measured. Did they consider restricting access? Did they partner with affected industries? Did they think beyond quarterly revenue?

There's a version of this story where Anthropic's caution looks naive in retrospect. Maybe a competitor develops something similar and releases it anyway. Maybe the vulnerabilities Mythos finds get patched so quickly that the threat model never materializes. But there's also a version where this approach becomes the template for addressing the security crisis that AI is about to accelerate.

For now, Anthropic has built something powerful and chosen restraint over release. In an industry that rarely exercises that muscle, it's worth noting when someone does.