Google just released Gemma 4, a family of open-source AI models designed to run on the hardware most people already own. The flagship model, Gemma 4 27B, runs on a single NVIDIA RTX 4090 graphics card. The smaller variants go further: Gemma 4 1B runs on smartphones. No cloud connection required. No data leaving your device.

This matters more than most announcements in the AI space. For years, the assumption has been that useful AI requires massive data centers, expensive hardware, and constant internet connectivity. Gemma 4 challenges that assumption directly.

What's Actually in the Box

The Gemma 4 family spans multiple sizes. The 27B-parameter model handles complex reasoning and multimodal tasks while fitting on a graphics card that costs around $1,600. The 9B and 4B variants target laptops and edge devices. The 1B model is explicitly built for phones.
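A quick back-of-the-envelope check shows why a 27B model on one consumer card implies reduced-precision weights. The sketch below multiplies parameter count by bytes per parameter and compares the result with the RTX 4090's 24 GB of memory. The precisions listed are assumptions for illustration; the article does not say which format Google ships, and the estimate ignores activations, the KV cache, and runtime overhead.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Assumption: only the weights are counted, so real usage will be higher.

GIB = 1024 ** 3
RTX_4090_VRAM_GIB = 24  # memory on an RTX 4090


def weight_memory_gib(params_billions: float, bits_per_param: int) -> float:
    """Return the approximate size of the model weights in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / GIB


def fits_in_vram(params_billions: float, bits_per_param: int,
                 vram_gib: float = RTX_4090_VRAM_GIB) -> bool:
    """True if the weights alone are smaller than the available memory."""
    return weight_memory_gib(params_billions, bits_per_param) < vram_gib


if __name__ == "__main__":
    # The bit widths here are illustrative, not a statement about Gemma 4.
    for bits in (16, 8, 4):
        size = weight_memory_gib(27, bits)
        print(f"27B at {bits}-bit: {size:.1f} GiB, fits: {fits_in_vram(27, bits)}")
```

Under these assumptions, 16-bit weights need roughly 50 GiB and only the 4-bit case leaves headroom on a 24 GB card, which is why quantization is usually part of the story when large models run locally.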

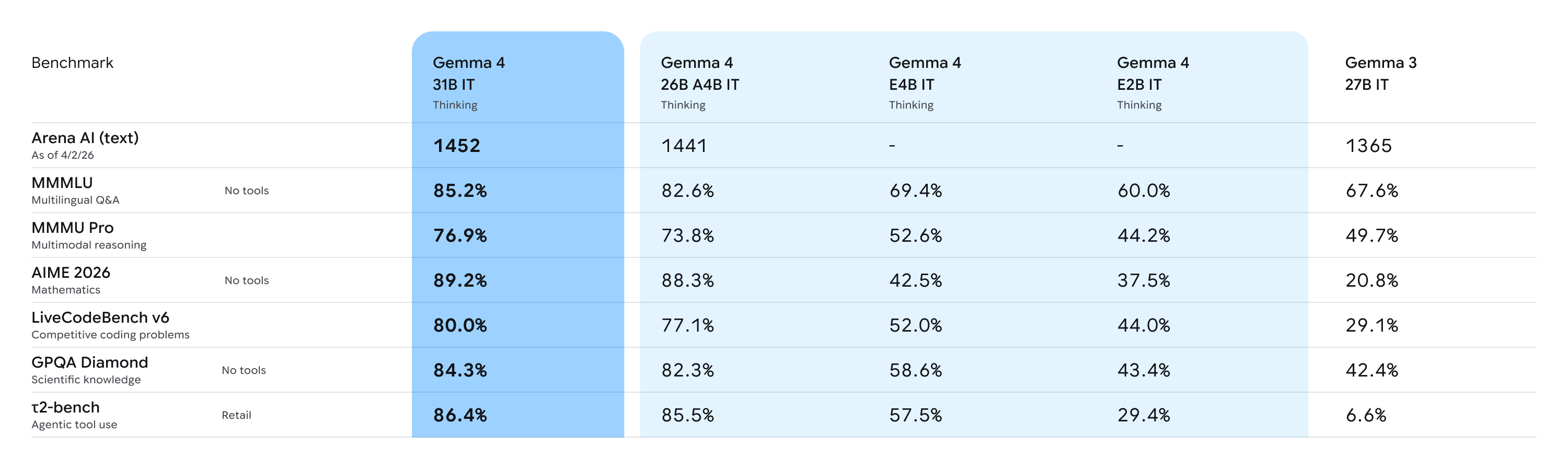

Google claims the 27B model competes with GPT-4.1 and Claude Sonnet 4 on standard benchmarks. Whether that holds up in practice remains to be seen, but the benchmark numbers are credible enough to take seriously. More importantly, the models support what Google calls "thinking mode," a form of extended reasoning that improves performance on harder problems.

The multimodal capabilities deserve attention. These models process images, video, and audio alongside text. They support function calling and structured outputs, which means developers can build applications that interact with other software and APIs. The context window extends to 128,000 tokens.
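To make "function calling and structured outputs" concrete, here is a minimal sketch of the application side: the model emits a JSON object naming a tool and its arguments, and the app validates it and runs the matching function. The JSON shape, the `get_weather` tool, and the `dispatch_tool_call` helper are all hypothetical; this is not Gemma's actual tool-call format, which the article does not describe.

```python
import json


# Hypothetical tool: a stub that stands in for a real API call.
def get_weather(city: str) -> dict:
    return {"city": city, "forecast": "sunny"}


# Hypothetical registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(model_output: str) -> dict:
    """Parse a JSON tool call produced by a model and run the matching tool.

    Assumed shape: {"name": "<tool>", "arguments": {...}}.
    Returns {"result": ...} on success or {"error": "..."} on failure.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return {"error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}

    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}

    try:
        return {"result": TOOLS[name](**args)}
    except TypeError as exc:
        # Raised when the arguments do not match the tool's signature.
        return {"error": f"bad arguments: {exc}"}
```

The point of structured output is visible in the error paths: because the model's reply is machine-checkable, malformed or unexpected calls can be rejected before any code runs.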

Privacy Changes When Computation Stays Local

The privacy implications are significant. Current AI assistants route your queries through remote servers. Your questions, your documents, your voice recordings all travel to data centers where they become someone else's problem to secure and someone else's opportunity to analyze.

Local inference eliminates that exposure. A model running entirely on your phone can process your medical questions, your financial documents, your personal correspondence without any of it leaving the device. This shifts the trust model fundamentally. You no longer need to trust a company's privacy policy. The data simply never goes anywhere.

For professionals handling sensitive information, this opens possibilities that cloud-based AI cannot match. Lawyers reviewing privileged documents. Doctors considering patient histories. Journalists protecting sources. The use cases multiply quickly once the data stays local.

Offline Intelligence Is the Bigger Story

But the offline capability might matter even more than the privacy angle. Consider what happens when AI assistance stops depending on connectivity.

Rural areas with unreliable internet gain access to the same tools as urban centers. Aircraft passengers get useful AI throughout their flights. Field researchers in remote locations can run sophisticated analysis without satellite uplinks. Emergency responders maintain capability when networks fail.

People want technology that works reliably, and this delivers it. A phone that can summarize documents, translate languages, analyze images, and answer questions without any connection represents a different kind of tool than what we have now.

This also changes the economics of AI deployment. Cloud inference is expensive, and those costs get passed to users or absorbed through advertising and data collection. Local inference shifts the cost to a one-time hardware purchase that most people have already made.
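The cost argument can be sketched as a simple break-even calculation for someone who does have to buy hardware. The $1,600 figure comes from the article; the cloud price per million tokens is a made-up placeholder, and electricity and depreciation are ignored.

```python
def break_even_tokens(hardware_cost_usd: float,
                      cloud_usd_per_million_tokens: float) -> float:
    """Tokens after which a one-time hardware purchase beats per-token cloud pricing.

    Assumption: local inference has no marginal cost (no power, no upkeep).
    """
    if cloud_usd_per_million_tokens <= 0:
        raise ValueError("cloud price must be positive")
    return hardware_cost_usd / cloud_usd_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # 5.0 USD per million tokens is a hypothetical price, not a quoted rate.
    tokens = break_even_tokens(1600, 5.0)
    print(f"Break-even after about {tokens:,.0f} tokens")
```

For anyone who already owns a capable device, the hardware cost in this model is effectively zero, which is the stronger version of the argument above.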

What Developers Will Build

The release includes tools that matter for adoption. Google's AI Edge SDK supports Android, iOS, web, and desktop platforms. The Gemma Evaluator lets developers test model quality systematically. Integration with LangChain, Ollama, and vLLM means existing workflows can incorporate these models without starting from scratch.
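As an illustration of what the Ollama route looks like in practice, the sketch below sends a prompt to a model served by a local Ollama instance over its HTTP API, using only the Python standard library. The endpoint is Ollama's default local address; the model tag `gemma-local` is a placeholder, since the article does not give the tag under which Gemma 4 is published.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma-local"  # hypothetical tag; substitute one shown by `ollama list`


def build_generate_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = MODEL_TAG, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text.

    Requires a running Ollama instance with the model already pulled.
    """
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]
```

Nothing in this exchange leaves the machine: the request goes to `localhost`, which is the practical meaning of the privacy and offline claims made earlier.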

Expect applications that would have been impractical before. Personal assistants that learn your patterns without uploading your behavior to servers. Creative tools that process your photos and videos locally. Educational software that adapts to students without surveillance. Health applications that analyze symptoms without sending sensitive data to a third party.

The 1B model's phone compatibility particularly matters because smartphones are the primary computing device for most of the world. Billions of people with limited infrastructure access might soon carry genuinely capable AI in their pockets.

The Competitive Pressure

Google released these models under permissive licensing. The weights are available on Hugging Face and Kaggle. Developers can use them commercially without the restrictions that limit some open-source alternatives.

This forces responses from competitors. Apple's on-device AI efforts suddenly face a credible open-source alternative. Meta's Llama models compete for the same developer attention. The Chinese labs working on efficient models must recalibrate their positioning.

The era of useful AI requiring expensive cloud connections is ending. The transition will take time, and cloud models will remain dominant for the most demanding tasks. But the floor has risen. Capable AI assistance is becoming a feature of the devices we already own rather than a service we rent from distant servers.