Large language models can write code, draft patents, and synthesize decades of research in seconds. They can design experiments, predict protein structures, and optimize supply chains with superhuman efficiency. But when you point them at genuinely unsolved problems, something curious happens. They get you almost all the way there, then stall.

The issue is not compute. It is not architecture. It is data that has never been collected because no one has ever gone out into the world and measured it.

The Missing Observations

AI systems are trained on the sum of recorded human knowledge. This corpus is vast but fundamentally retrospective. It contains what we have already seen, already measured, already documented. For well-understood domains, this is sufficient. Models can interpolate brilliantly within known territory.

Frontier problems are different. They exist at the edge of human knowledge, in spaces where the critical variables have not been identified, let alone quantified. Consider a researcher trying to use AI to understand a novel material's behavior under extreme conditions. The model can synthesize everything published about similar materials. It can predict likely outcomes based on theoretical frameworks. But if the actual behavior depends on some property that no one has thought to measure, the model cannot conjure that data from nothing.

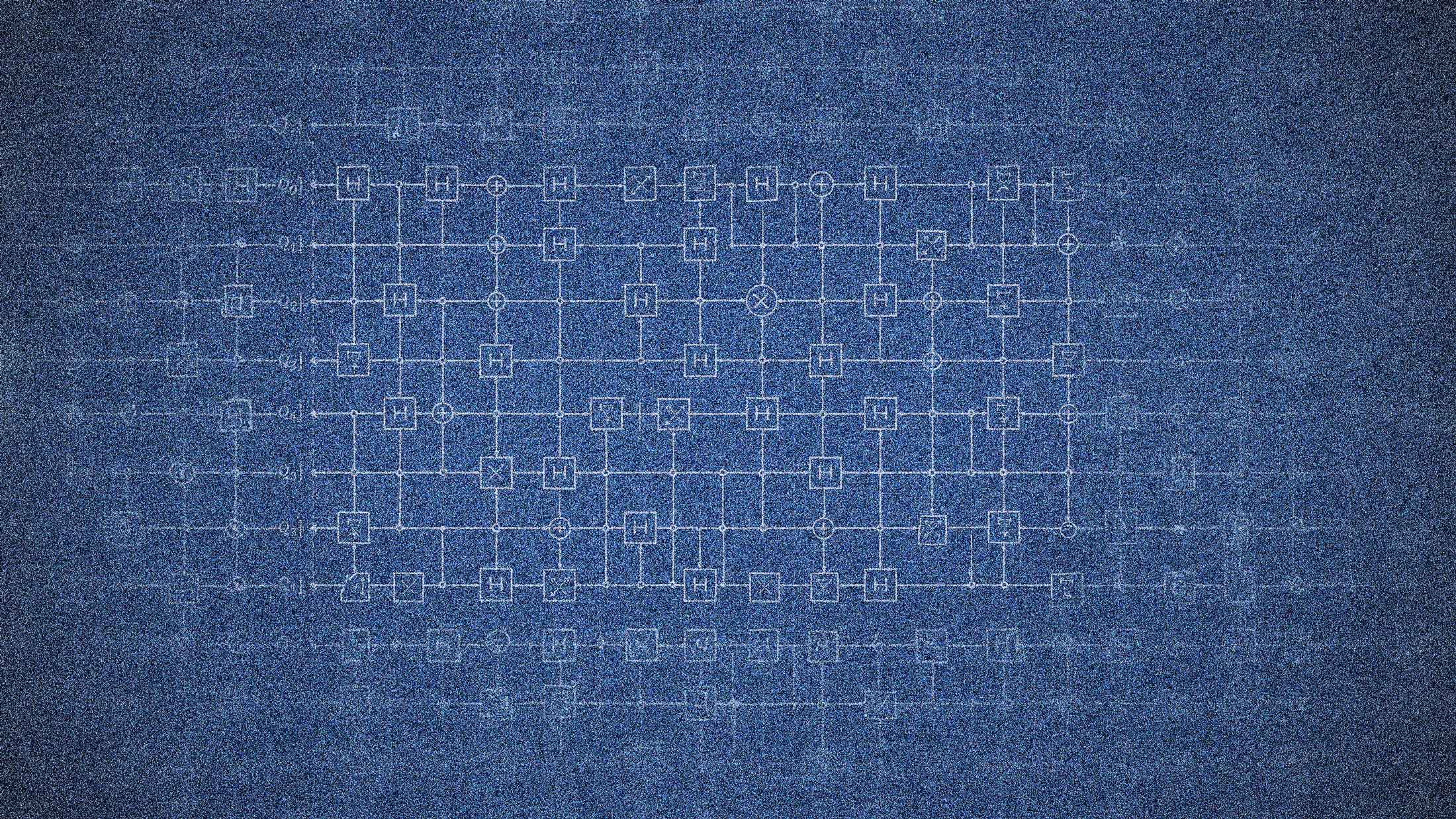

This limitation becomes stark in fields like materials science, drug discovery, and fundamental physics. AI can build 99% of the machinery needed to solve these problems. It can design the experimental apparatus, write the analysis code, and even suggest which hypotheses are worth testing. The remaining 1% requires someone to actually run the experiment and record what happens.

The Brute Force Option

One response to this constraint is exhaustive search. If AI cannot predict which specific observation will unlock a frontier problem, it can generate a comprehensive list of candidate experiments and work through them. DeepMind's AlphaFold is often cited as a brute-force success, but the comparison is loose. AlphaFold is a deep learning model trained on experimentally determined protein structures, and it predicts a structure directly rather than enumerating the solution space. Its success rested on decades of measurements that people had already made and deposited in public databases.

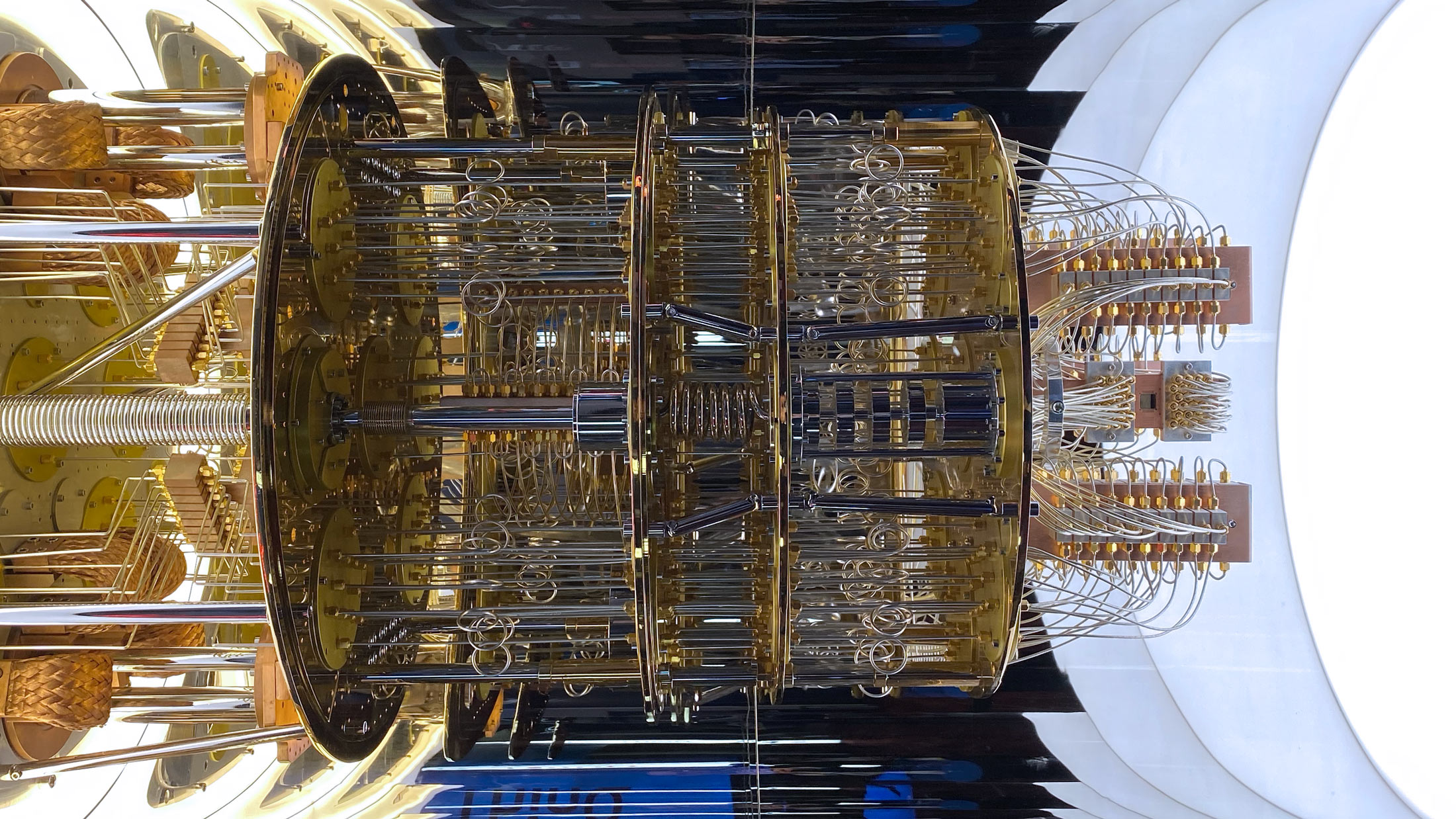

For some problems, brute force works. Autonomous laboratories are already running thousands of experiments with minimal human intervention. Robotic systems can test chemical compounds around the clock, feeding results back to models that refine their predictions in real time.
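The feedback loop described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular autonomous laboratory works: `run_closed_loop`, `simulated_assay`, the temperature grid, and the response curve are all hypothetical, and the selection rule (pick the untested candidate farthest from anything already measured) is a simple stand-in for a real predictive model.

```python
from typing import Callable


def run_closed_loop(
    candidates: list[float],
    run_experiment: Callable[[float], float],
    budget: int,
) -> dict[float, float]:
    """Measure up to `budget` candidates, feeding each result back into
    the choice of the next experiment."""
    results: dict[float, float] = {}
    remaining = list(candidates)
    while remaining and len(results) < budget:
        if results:
            # Explore: choose the candidate farthest from every measured point.
            nxt = max(remaining, key=lambda c: min(abs(c - m) for m in results))
        else:
            # Nothing measured yet, so start with the first candidate.
            nxt = remaining[0]
        remaining.remove(nxt)
        results[nxt] = run_experiment(nxt)
    return results


def simulated_assay(temperature_c: float) -> float:
    # Hypothetical stand-in for a real instrument: response peaks at 60 C.
    return -((temperature_c - 60.0) ** 2)


candidates = [20.0, 40.0, 60.0, 80.0, 100.0]
results = run_closed_loop(candidates, simulated_assay, budget=3)
best = max(results, key=results.get)
print(f"measured {sorted(results)}; best so far: {best}")
```

The loop only ever chooses among candidates someone thought to list. If the property that matters is not on the grid, no amount of budget will find it.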

But brute force has limits. Some observations require human judgment to even recognize as significant. Others demand physical presence in environments that robots cannot yet navigate. And many frontier questions involve phenomena so rare or subtle that no reasonable search protocol would think to look for them.

The history of science is littered with discoveries that emerged from anomalous observations, moments when a researcher noticed something strange and thought to write it down. AI systems struggle with such anomalies because a model trained on recorded data has little basis for judging the significance of something absent from that record.

Human Observation Markets

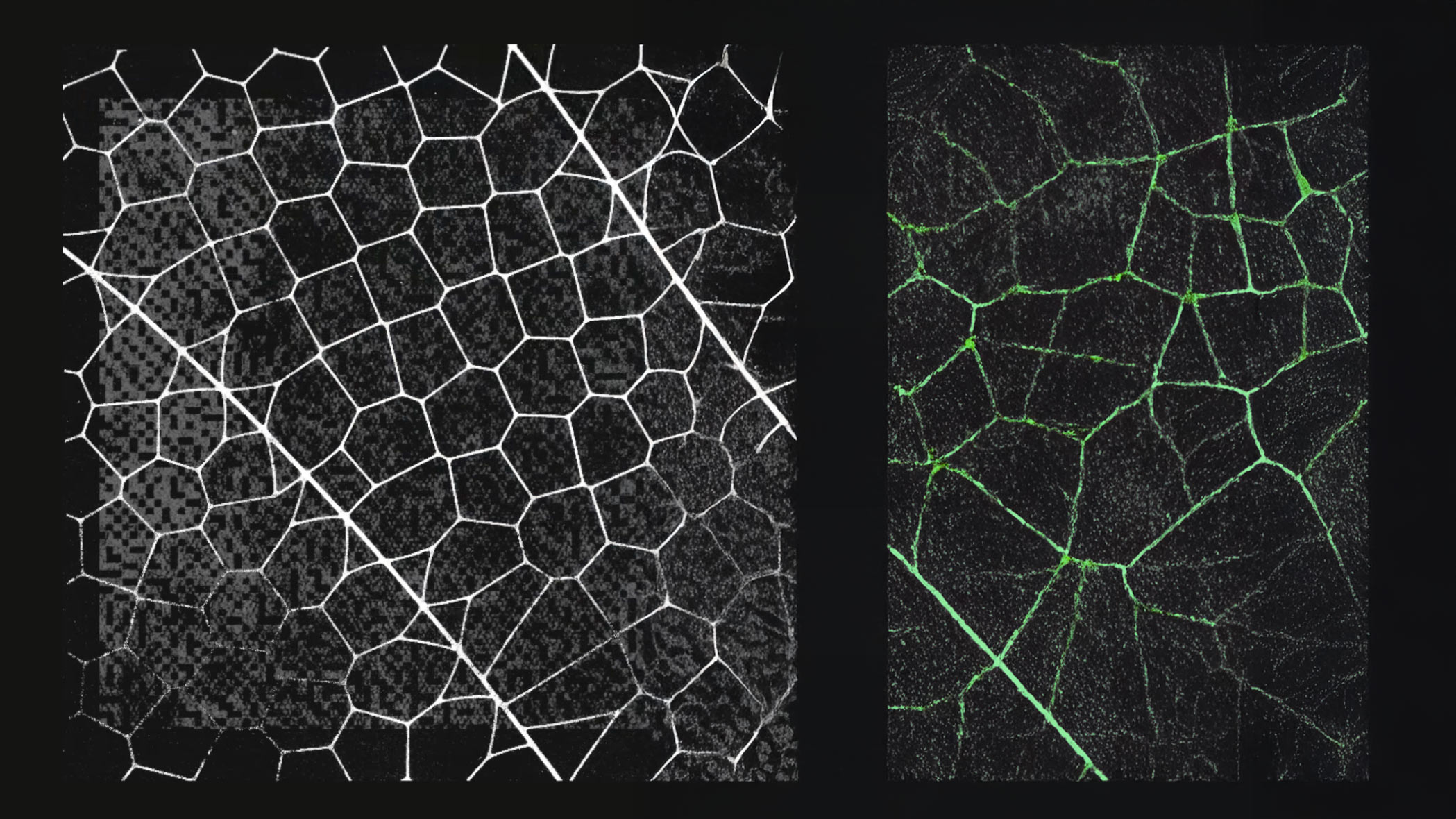

This suggests a possible future: structured systems where AI agents issue bounties for specific human observations. The model identifies gaps in its knowledge. Humans go into the world, collect the missing data, and receive compensation for their efforts.

This is not entirely speculative. Platforms like Amazon Mechanical Turk have long used human labor to fill gaps in machine capabilities. The difference is that observation markets would be directed by AI toward frontier problems rather than routine annotation tasks.

Imagine a pharmaceutical AI that has narrowed a drug candidate to a handful of molecular variations but needs specific binding data that no one has published. It could post a bounty to a network of contract laboratories, specifying exactly what measurements it needs. Prediction markets already demonstrate how financial incentives can aggregate distributed information. Observation markets would extend this logic to physical data collection.
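To make the idea of "specifying exactly what measurements it needs" concrete, here is one way a bounty and its acceptance rule might be represented. It is a sketch under stated assumptions: the `ObservationBounty` and `Submission` types, their fields, the `payout` rule, and the example numbers are all hypothetical and do not describe any existing platform.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class ObservationBounty:
    """Hypothetical request for one missing measurement."""
    question: str
    unit: str
    min_replicates: int         # how many repeat measurements are required
    max_relative_spread: float  # allowed std dev as a fraction of the mean
    reward_usd: float


@dataclass(frozen=True)
class Submission:
    """Hypothetical result returned by a contract laboratory."""
    lab_id: str
    unit: str
    measurements: tuple[float, ...]


def payout(bounty: ObservationBounty, sub: Submission) -> float:
    """Pay the full reward only if the submission meets the stated spec."""
    if sub.unit != bounty.unit:
        return 0.0
    if len(sub.measurements) < bounty.min_replicates:
        return 0.0
    centre = mean(sub.measurements)
    if centre == 0:
        return 0.0  # relative spread is undefined around zero
    spread = pstdev(sub.measurements) / abs(centre)
    return bounty.reward_usd if spread <= bounty.max_relative_spread else 0.0


bounty = ObservationBounty(
    question="Binding affinity of candidate variant to the target protein",
    unit="nM",
    min_replicates=3,
    max_relative_spread=0.10,
    reward_usd=500.0,
)
consistent = Submission("lab-a", "nM", (12.0, 12.5, 11.5))
noisy = Submission("lab-b", "nM", (5.0, 20.0, 11.0))
too_few = Submission("lab-c", "nM", (12.0,))

for sub in (consistent, noisy, too_few):
    print(sub.lab_id, payout(bounty, sub))
```

The acceptance rule here checks only internal consistency, not correctness. A real market would also need some way to verify that the measurement was actually performed, which is a harder problem than writing the spec.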

The economics could be significant. If AI systems generate enough value from frontier solutions, they could fund substantial data collection efforts. Human observers would become a kind of sensory network for machine intelligence, extending its reach into the physical world.

What This Means for AI Development

Current discourse around AI capabilities tends to focus on model size and training efficiency. Less attention goes to the fundamental constraint of available data. Models are getting better at reasoning with existing information. They are not getting better at generating information that does not exist.

This has implications for how we think about artificial general intelligence. A system that can only operate within the bounds of recorded knowledge is not truly general. It is a very sophisticated interpolation engine.

The path forward likely involves tighter integration between AI systems and human observation. Not as a temporary limitation to be engineered away, but as a permanent feature of how machine intelligence interacts with an incompletely documented world. The 99% that AI handles brilliantly is transformative. The final 1% is where the interesting problems remain.