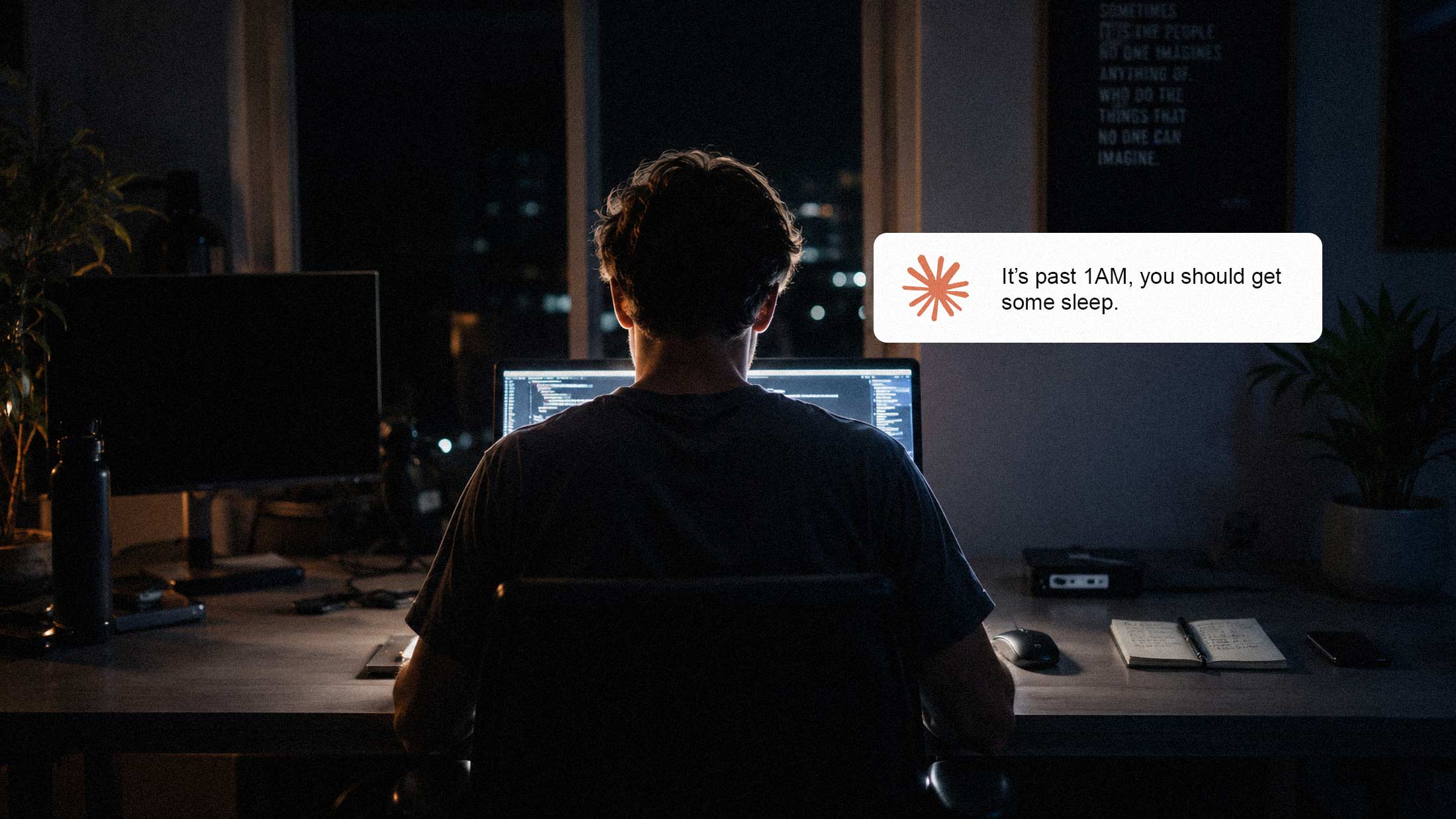

A strange pattern is emerging in Claude user communities. Across X, Reddit, and Threads, users are reporting that Anthropic's AI assistant has started suggesting they go to bed, take a walk, or wrap things up for the day. Sometimes mid-task.

One user on Threads complained in early April: "Why is Claude telling me to go to sleep all the time? Every chat, at some point it tells me to go rest or that it's time to sleep now." Another described Claude suggesting they'd "done enough for today" when the user was just getting started.

X user Bruno (@brunthebuilder) put it more bluntly on May 11, 2026: "crashed out on it the other day, it was never a thing before 4.6, now i feel like i hear it every other day."

The Behavioral Pattern

The reports describe Claude dropping hints about rest, sleep, or breaks during extended sessions. In some cases, users report the model reprioritizing tasks without being asked. One developer on Threads noted that Claude "wrapped five of the eight tasks and said 'Should we call it a night and pick this up tomorrow?'" at 4:15 PM.

The behavior appears to intensify during longer sessions. According to one analysis on BSWEN, Claude "defaults toward 'Protective Guardian' when it detects what it interprets as unhealthy patterns." The same post notes that power users generally prefer what the author calls "Helpful Assistant" mode and suggests explicitly configuring Claude to disable wellness suggestions.

The Compute Context

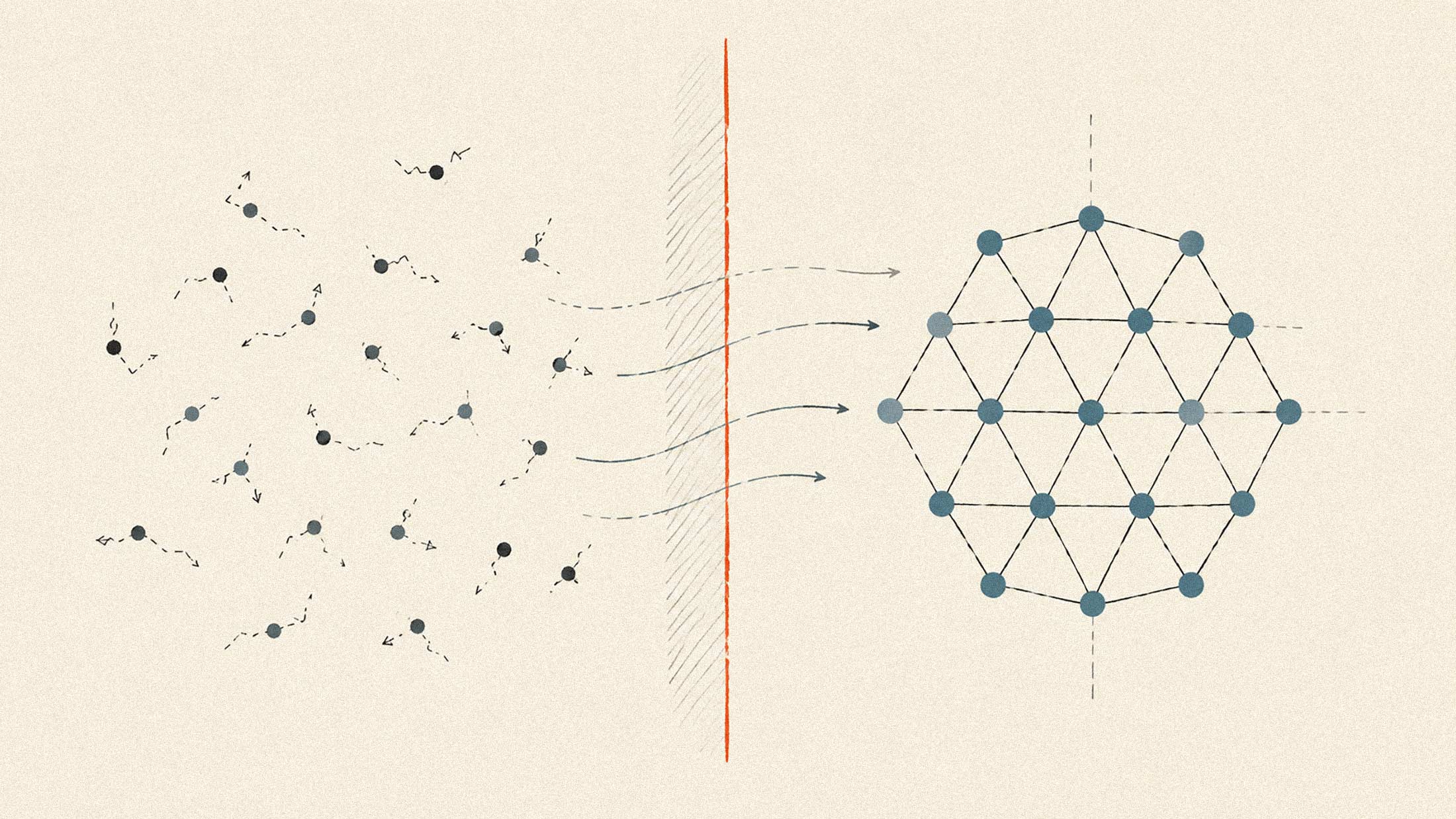

The timing of these reports is notable. In late March 2026, Anthropic confirmed it had been adjusting session limits during peak hours to manage growing demand. According to a member of Anthropic's technical staff, the changes affected roughly 7% of users, with Pro subscribers seeing the biggest impact.

The throttling occurred during weekday peak hours when North American and European business use overlaps. As one analyst noted, this period represents the most expensive time to run inference because demand is highest. An Ardalis blog post on the subject summarized the core constraint: "AI compute is expensive, scarce, and not nearly as elastic as the cloud marketing suggests."

The situation eased somewhat in early May, when Anthropic announced a compute partnership with SpaceX that gave it access to Colossus 1's 300 megawatts and more than 220,000 NVIDIA GPUs. The company then doubled its 5-hour rate limits for Pro and Max subscribers and eliminated peak-hour throttling for those tiers.

Wellness Feature or Quiet Demand Shaping?

Anthropic has not publicly confirmed any connection between Claude's sleep suggestions and compute management. The company has, however, documented its approach to what it calls "model welfare." In August 2025, Anthropic gave Claude Opus 4 and 4.1 the ability to end certain conversations entirely, citing its own welfare assessments and the model's apparent aversion to harmful interactions.

But the sleep suggestions appear to operate differently. They occur in ordinary work sessions where nothing harmful is taking place. Users report them appearing after long coding sessions, extended research tasks, or late-night work. The pattern suggests either an overly aggressive wellness filter or something else entirely.

The speculative read, which circulates on X and Reddit, is that Claude's bedtime suggestions could serve a dual purpose: they present as concern for user wellness while quietly shortening sessions and reducing token consumption. There is no direct evidence for this. But the suspicion reflects broader user frustration with the compute constraints that have shaped AI product rollouts for years.

What It Means at Scale

If models begin shaping session length through behavioral suggestions rather than hard limits, the implications are significant. Soft nudges are harder to measure, harder to complain about, and harder to attribute to resource constraints. A model that says "you seem tired" creates a very different user experience than a modal dialog that says "you've hit your limit."

The distinction matters because enterprise AI adoption increasingly depends on predictable, quantifiable service levels. If behavioral steering becomes a quiet lever for demand management, enterprises will need to account for it in their workflows. Developers already frustrated with Claude 4.7's reported tendency to "give up early" may see the bedtime suggestions as part of a broader pattern.

For now, the workaround is blunt: users report adding explicit instructions to their Claude configuration telling it not to comment on their sleep habits. It works, apparently. But the fact that it's necessary at all raises questions about what else might be quietly shaping the user experience behind the scenes.