At ServiceNow's Knowledge 2026 conference in Las Vegas on May 5, NVIDIA CEO Jensen Huang sat down with CNBC alongside ServiceNow CEO Bill McDermott and offered a number that should reframe how the industry thinks about the next phase of artificial intelligence. The compute needed for agentic AI, he said, has increased 1,000x compared with generative AI just two years ago, because agents now have to read, reason, use tools, and generate far more tokens in real time.

"This is one of the greatest transformations for the software industry ever," Huang told CNBC's Jon Fortt in a live broadcast from the Venetian. "For the first time, service is software."

The claim is striking, but the reasoning behind it is straightforward once you understand what separates these two paradigms.

Generative AI: The Reactive Tool

Generative AI is artificial intelligence that can create original content—such as text, images, video, audio or software code—in response to a user's prompt or request. Think of ChatGPT, Midjourney, or any tool where you input a question and receive a single output. You type a prompt into ChatGPT, the model burns through some tokens, you get an answer, and you move on with your afternoon.

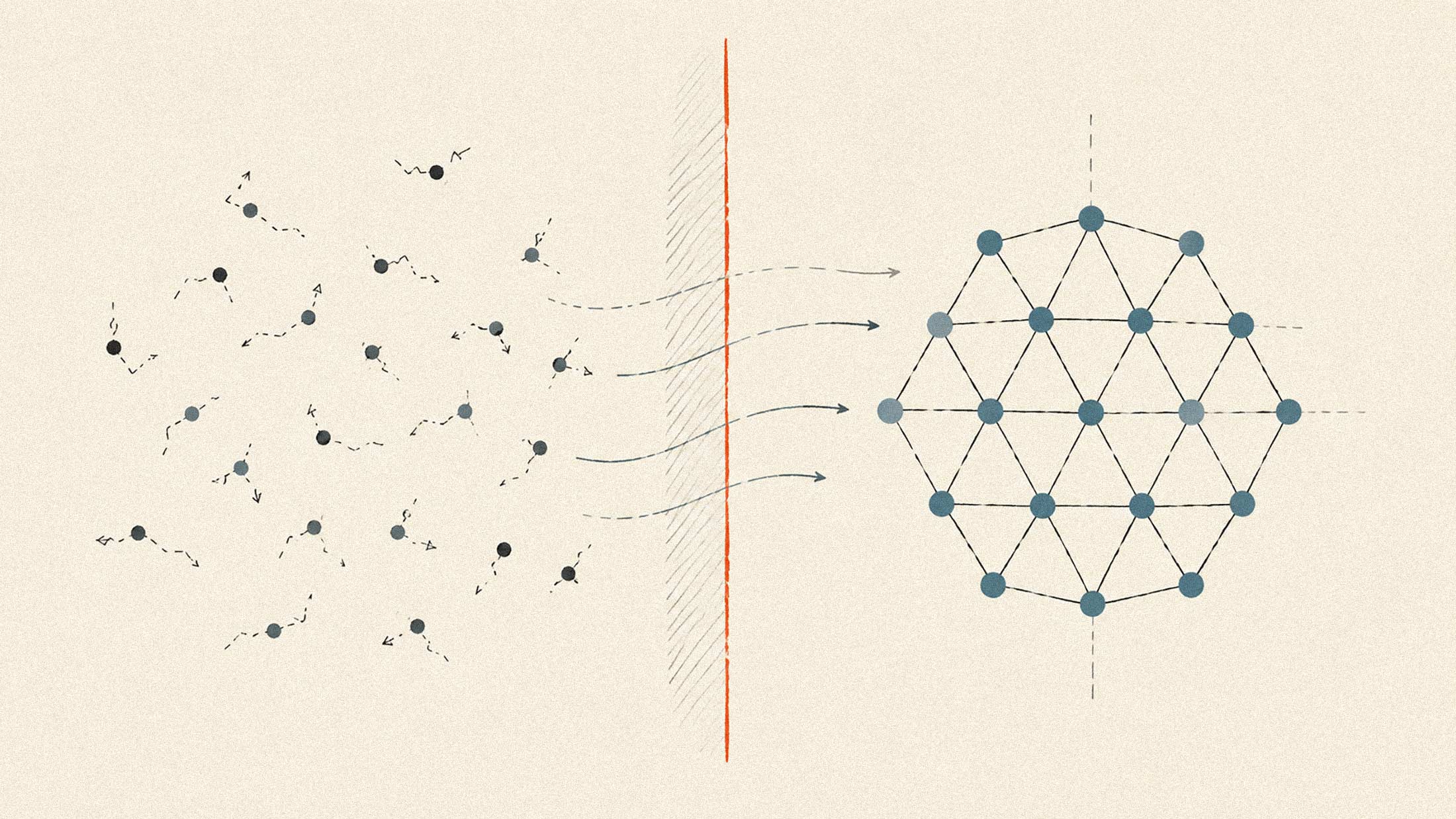

Gen AI reacts to a prompt, and each interaction is self-contained. The model does not maintain continuity between tasks or work toward any long-term objective. Once the content is produced, the process ends unless a new prompt is given.

Agentic AI: The Autonomous System

Agentic AI describes AI systems that are designed to autonomously make decisions and act, with the ability to pursue complex goals with limited supervision. Agents read, plan, call tools, write code, query databases, and check their own work. They string those steps together for minutes or hours at a time, often without a human in the loop, and each step consumes more compute than a single chatbot reply ever did.

When comparing the two, think of gen AI as reactive and agentic AI as proactive. An agentic system sets and completes goals with minimal human oversight: it breaks complex objectives into executable sub-tasks, interacts with external systems and APIs, and adjusts its strategy based on outcomes.

This shift matters for infrastructure planning. While generative AI typically runs inference once per request to create content, agentic AI often runs the inference loop repeatedly, once for every step it plans, executes, and checks. That repeated inference cycle is where the compute multiplier comes from.
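A rough way to see how repeated inference multiplies token volume is a back-of-the-envelope count. This is a minimal sketch, not a model of any real system: the function names are hypothetical, the token and step counts are made up for illustration, and it assumes each step re-reads the full accumulated context with no caching. It is not meant to reproduce Huang's figure.

```python
def single_pass_tokens(prompt_tokens: int, output_tokens: int) -> int:
    """Tokens processed by one prompt-and-response exchange."""
    return prompt_tokens + output_tokens


def agent_loop_tokens(prompt_tokens: int, output_tokens: int, steps: int) -> int:
    """Tokens processed by an agent that runs `steps` inference passes.

    Assumption (illustrative only): every step appends its output to the
    context, so each later step reads the prompt plus all earlier outputs.
    """
    total = 0
    context = prompt_tokens
    for _ in range(steps):
        total += context + output_tokens  # read the context, then generate
        context += output_tokens          # this output feeds the next step
    return total


# Made-up inputs: a 500-token prompt, 300-token outputs, 20 agent steps.
baseline = single_pass_tokens(500, 300)
agent = agent_loop_tokens(500, 300, steps=20)
print(f"single pass: {baseline} tokens, agent loop: {agent} tokens")
```

With these inputs the 20-step loop processes 73,000 tokens against 800 for the single exchange. The point is the shape of the growth (more steps, each reading a longer context), not the specific ratio.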

Why 1,000x?

Huang's framing positions this compute explosion as an opportunity rather than a constraint. Until now, he argued, the entire industrial economy (manufacturing plants, warehouses, logistics networks, roads, cities) had been essentially untouched by IT and software. That, in his telling, is a $50 trillion opportunity that simply didn't exist for the tech industry. Agentic AI, he said, changes that equation entirely.

The infrastructure buildout required for this shift is already underway. The four largest cloud providers have collectively committed more than $200 billion in AI infrastructure capex for 2026 alone, much of it routed straight to NVIDIA's data center products. Fortune's coverage of the event noted that NVIDIA runs its own employee workflows on ServiceNow, making Huang a customer testifying to a thesis rather than just a partner endorsing a partner.

McDermott believes that AI agents will take on jobs that would otherwise go unfilled as the current workforce ages out and isn't replaced. Speaking to 25,000 attendees in Las Vegas, he said the world will face a labor shortage of 50 million workers by 2030. At the same time, however, billions of AI agents and robots are coming online, purpose-built to fill the gap. For the first time in history, the labor supply problem and the technology solution are arriving simultaneously.

What This Means for Enterprise

Governance requirements diverge sharply. Generative AI poses informational risk through hallucinations and bias, while agentic AI introduces operational risk through autonomous actions on live systems. That calls for human-in-the-loop thresholds, provenance logging, and strict tool access controls from the outset.
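The three controls named above can be combined in a single wrapper around an agent's tool calls. The sketch below is purely illustrative: `ToolGate`, the risk score, the threshold value, and `restart_service` are hypothetical names invented for this example, not part of ServiceNow's or any vendor's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable, Optional


@dataclass
class ToolGate:
    """Hypothetical wrapper that every agent tool call passes through."""
    allowed_tools: set                  # strict tool access control
    approval_threshold: float           # risk at or above this needs a human
    audit_log: list = field(default_factory=list)

    def call(self, agent: str, tool_name: str, tool: Callable[..., Any],
             args: dict, risk: float, approved_by: Optional[str] = None) -> Any:
        if tool_name not in self.allowed_tools:
            decision = "denied: tool not on allowlist"
        elif risk >= self.approval_threshold and approved_by is None:
            decision = "blocked: human approval required"
        else:
            decision = "executed"
        # Provenance logging: record the attempt whether or not it runs.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent, "tool": tool_name, "args": args,
            "risk": risk, "approved_by": approved_by, "decision": decision,
        })
        if decision != "executed":
            raise PermissionError(decision)
        return tool(**args)


def restart_service(name: str) -> str:
    # Stand-in for an action on a live system.
    return f"restarted {name}"


gate = ToolGate(allowed_tools={"restart_service"}, approval_threshold=0.7)
result = gate.call("ops-agent", "restart_service", restart_service,
                   {"name": "billing"}, risk=0.9, approved_by="alice")
print(result, len(gate.audit_log))
```

The same call without `approved_by` would raise `PermissionError` and still leave an entry in `audit_log`, which is the property that matters for after-the-fact review.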

Project Arc, an autonomous desktop agent, illustrates the governed approach. Unlike standalone AI agents, it connects natively to the ServiceNow AI Platform through ServiceNow Action Fabric, bringing governance, auditability and workflow intelligence to every action it takes. It can access the local file systems, terminals and applications installed on a machine to complete complex, multistep tasks that traditional automation can't handle.

The interpretability work happening at AI labs and the agent infrastructure being built by cloud providers both point in the same direction. Agentic AI will require not just more compute, but fundamentally different governance architectures.

The stat that lingered longest: by McDermott's own accounting, only one in ten enterprises has actually moved AI into a real, impactful business process. For most companies, then, the agentic business isn't something they're navigating yet. It's something they're aspiring to.

The 1,000x figure is NVIDIA's framing of an opportunity. It is also a warning about how much infrastructure the next phase of AI will demand.