Satya Nadella announced on X today that Microsoft's Fairwater AI datacenter in Wisconsin is now operational, arriving ahead of its projected timeline. The facility, first unveiled in September 2025 as an ambitious bet on centralized AI infrastructure, represents the largest single deployment of NVIDIA GB200 GPUs ever assembled.

The numbers are difficult to contextualize. Hundreds of thousands of GB200 chips, clustered into what Microsoft describes as a single seamless system, deliver roughly 10 times the performance of the current fastest supercomputer for large-scale AI training and inference workloads. On the TOP500 list, that title belongs to El Capitan at Lawrence Livermore National Laboratory, which in late 2024 overtook Frontier at Oak Ridge National Laboratory, the first exascale machine when it debuted in 2022.

Architecture Built for Scale

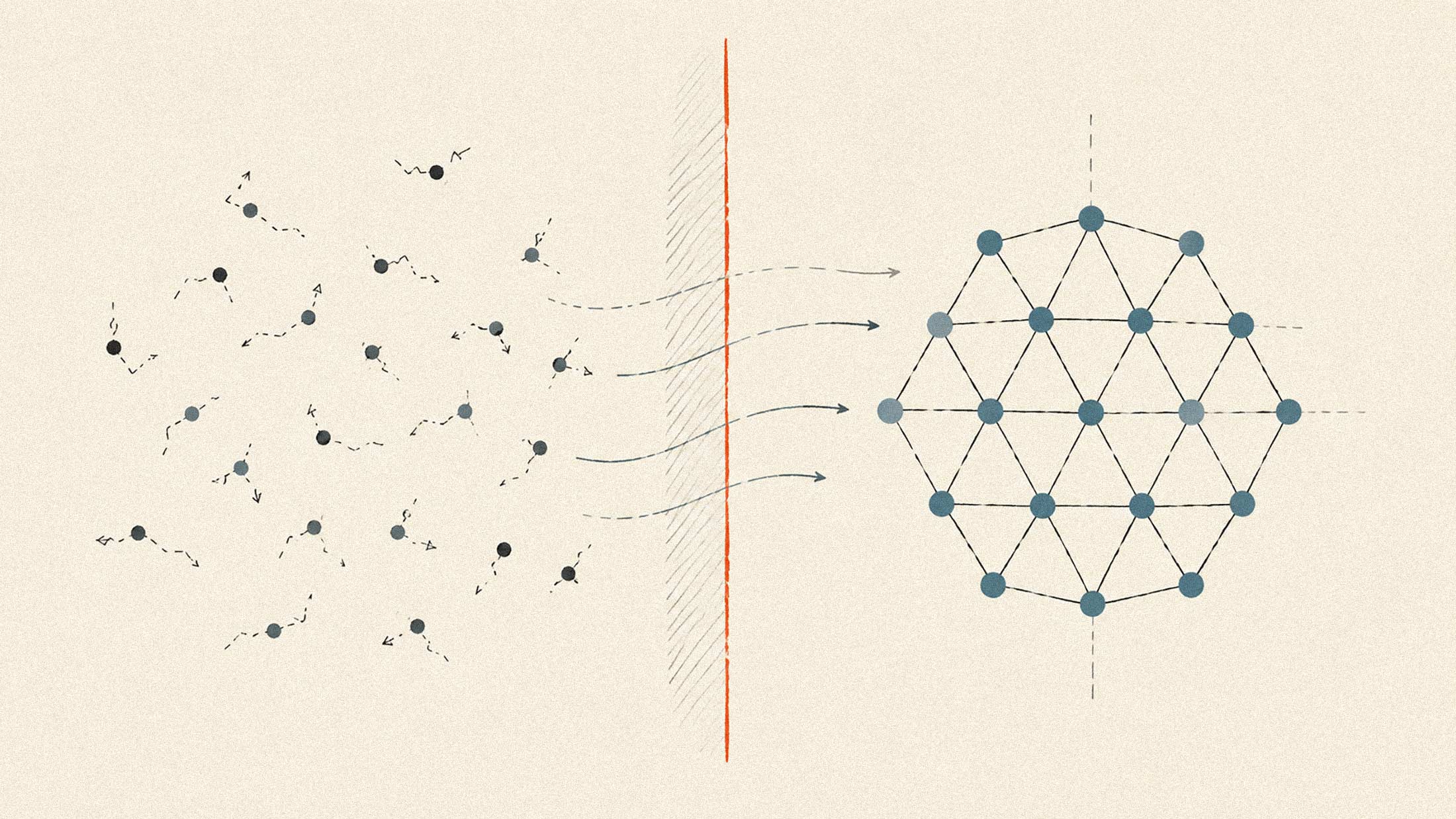

What distinguishes Fairwater from conventional hyperscale deployments is its integrated design. Rather than treating the facility as a collection of independent server racks, Microsoft engineered it as one continuous computational fabric. The GB200 GPUs communicate through high-bandwidth interconnects intended to minimize the latency penalties typically associated with distributed training across separate clusters.

This matters for the kinds of models Microsoft and OpenAI are building. Training runs for frontier AI systems can take months and cost hundreds of millions of dollars. At that scale, every percentage point of efficiency gained at the hardware level translates into roughly a day of training time and millions of dollars saved per run. The architecture also positions Microsoft to handle inference workloads at a scale that would overwhelm fragmented infrastructure.

The Cooling Problem, Solved Differently

Datacenters have traditionally been water-intensive operations. The industry has faced increasing scrutiny as AI's power and cooling demands have surged, with communities raising concerns about competition for local water resources. Fairwater takes a different approach: closed-loop liquid cooling with zero operational water use.

The system circulates coolant directly to the chips, capturing heat at the source before it spreads through the facility. That heat is then dissipated through ambient air exchange rather than evaporative cooling towers. Microsoft claims this eliminates the millions of gallons of water that a facility of this scale would typically consume annually.

The renewable energy matching component is equally notable. Microsoft has committed to sourcing 100 percent of Fairwater's electricity from renewable sources, though the details will matter, in particular whether consumption is matched hour by hour or only on an annual basis. The nuclear and renewable investments that major tech companies have announced over the past year suggest the industry recognizes that AI's growth trajectory will eventually collide with grid capacity constraints.

What This Means for the GPU Race

The Fairwater deployment underscores how far ahead Microsoft has moved in the competition for AI compute. The company's partnership with OpenAI has given it both the motivation and the technical requirements to build infrastructure that most organizations cannot match. Google, Amazon, and Meta are all expanding their own GPU fleets, but none have announced anything comparable to a single facility at this scale.

NVIDIA remains the clear beneficiary. The GB200, built on the Blackwell architecture, represents the company's most advanced chip for AI workloads. Microsoft's willingness to deploy it at this density validates NVIDIA's roadmap and demonstrates demand that ASML's production forecasts suggest will continue straining supply chains through at least 2026.

Fairwater going live ahead of schedule is a statement about execution as much as capability. Microsoft has been building toward this moment since its initial September 2025 announcement, and delivering early suggests the company's infrastructure teams have found ways to accelerate deployment that others may struggle to replicate.