Sight, sound, text, touch. Computers now handle each of these modalities in ways that felt impossible a decade ago. But smell has remained stubbornly out of reach. A new benchmark from researchers aims to change that, and the results suggest AI has quietly absorbed more olfactory knowledge than anyone expected.

The Olfactory Perception Benchmark

The OP benchmark contains 1,010 questions across eight task categories. Models face challenges ranging from odor classification and intensity judgments to predicting which olfactory receptors a molecule will activate. The best-performing large language model reached 64.4% overall accuracy. That's meaningfully above chance but still well below the performance of trained human perfumers.
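To make the headline number concrete, here is a minimal sketch of how a benchmark like this might be scored. It assumes overall accuracy is simply the fraction of questions answered correctly, which the article does not confirm. The category labels and the graded answers are made up for illustration, and the function names are hypothetical.

```python
from collections import defaultdict

def accuracy_by_category(records):
    """Return {category: fraction correct} from (category, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for category, is_correct in records:
        totals[category] += 1
        correct[category] += int(is_correct)
    return {c: correct[c] / totals[c] for c in totals}

def overall_accuracy(records):
    """Fraction of all questions answered correctly (micro-average)."""
    records = list(records)
    return sum(int(ok) for _, ok in records) / len(records)

# Toy, made-up grades; NOT data from the benchmark.
toy = [
    ("odor_classification", True),
    ("odor_classification", False),
    ("intensity", True),
    ("receptor_activation", False),
    ("receptor_activation", True),
]

per_cat = accuracy_by_category(toy)
overall = overall_accuracy(toy)

# Under the simple-fraction assumption, 64.4% of 1,010 questions
# corresponds to roughly this many correct answers.
implied_correct = round(0.644 * 1010)

print(per_cat)
print(overall, implied_correct)
```

Under that assumption, the best model answered about 650 of the 1,010 questions correctly. If the benchmark instead averages per-category scores, the count could differ.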

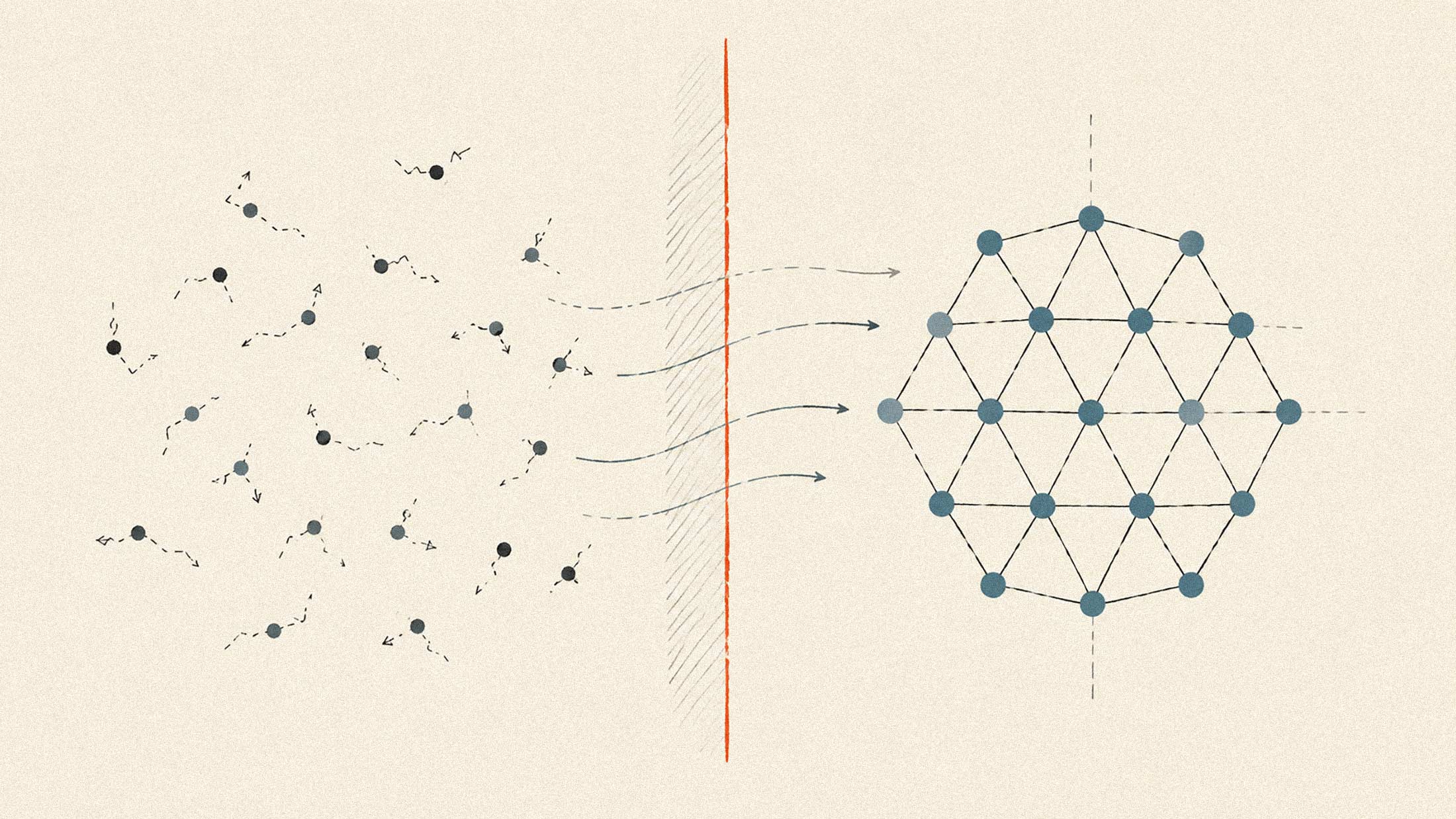

The gap matters less than the baseline. These models weren't trained on smell. They learned whatever olfactory reasoning they possess from text descriptions, chemical literature, and the accumulated knowledge embedded in their training data.

Why Smell Has Been So Hard

Vision maps neatly from wavelengths to perceived color. Sound translates frequencies into pitch. Olfaction follows no such clean logic. The relationship between a molecule's structure and the smell it produces remains incompletely understood even by scientists who've spent careers studying it.

Humans have roughly 400 types of olfactory receptors. A single molecule can activate dozens of them in different combinations. The same compound smells different at different concentrations. Mixtures don't blend predictably. Two chemicals that share almost identical structures can smell completely different, while molecules with nothing in common can smell nearly the same.
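A rough back-of-the-envelope calculation shows why this combinatorial coding is so hard to untangle. The sketch below counts how many distinct sets of receptor types could be switched on if activation were a simple on/off affair. That is a deliberate simplification: real receptor responses are graded, and not every combination occurs in practice. The figure of 400 comes from the text; the specific values of `n_active` are illustrative.

```python
import math

N_RECEPTOR_TYPES = 400  # "roughly 400" receptor types, per the text

def binary_patterns(n_active, n_types=N_RECEPTOR_TYPES):
    """Number of distinct sets of n_active receptor types out of n_types.

    Simplifying assumption: each receptor type is either on or off.
    """
    return math.comb(n_types, n_active)

for k in (1, 2, 12, 24):
    print(f"{k:>2} active receptor types: {binary_patterns(k):.3e} possible patterns")
```

Even under this crude on/off model, two dozen active receptor types already allow an astronomically large number of patterns, far more than any lookup table of known odorants could cover.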

This complexity explains why "e-nose" technology has existed for decades without breaking into consumer products. The sensors work fine. The interpretation layer has been missing.

What This Could Unlock

The researchers point to immediate applications in fragrance design, flavor development, and detecting contaminants in consumer products. But the implications extend further.

Diseases leave scent signatures. Trained dogs can detect certain cancers, Parkinson's, and COVID-19 from breath or skin samples with remarkable accuracy. An AI system capable of reasoning about smell, paired with cheap molecular sensors, could bring that diagnostic capability to clinics without the dogs.

Supply chain monitoring presents another obvious use case. Food spoilage, gas leaks, and chemical contamination in products each produce distinctive olfactory signals that humans notice too late or not at all.

The Generative Angle

The benchmark tests perception. But the same reasoning capability could run in reverse.

Molecule-design AI has advanced rapidly. AlphaFold transformed protein structure prediction, and related machine-learning approaches are now being applied to small molecules. Combine that generative chemistry with an AI system that understands which molecular features produce which smells, and you get something interesting: generative fragrance design.

Instead of a perfumer manually testing thousands of compounds, an AI could propose molecules predicted to smell a certain way, then iterate based on feedback. The same logic applies to food flavoring, where subtle adjustments to molecular structure can transform taste profiles.
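The propose-and-iterate idea can be sketched as a simple search loop. Everything here is a stand-in: `predicted_match` plays the role of a learned smell predictor, `propose` plays the role of a generative chemistry model, and the numeric feature vectors are hypothetical placeholders rather than real molecular descriptors. It is a toy hill-climbing sketch under those assumptions, not the researchers' method.

```python
import random

def predicted_match(features, target):
    """Placeholder for a learned odor predictor: higher is better, 0 is a perfect match."""
    return -sum((f - t) ** 2 for f, t in zip(features, target))

def propose(features, rng, step=0.1):
    """Placeholder for a generative model: perturb one feature slightly."""
    i = rng.randrange(len(features))
    candidate = list(features)
    candidate[i] += rng.uniform(-step, step)
    return candidate

def design_loop(start, target, rounds=200, seed=0):
    """Repeatedly propose a candidate and keep it only if the predictor prefers it."""
    rng = random.Random(seed)
    best, best_score = list(start), predicted_match(start, target)
    for _ in range(rounds):
        candidate = propose(best, rng)
        score = predicted_match(candidate, target)
        if score > best_score:  # the "feedback" step: accept improvements only
            best, best_score = candidate, score
    return best, best_score

# Hypothetical starting point and target odor profile (made-up numbers).
start = [0.0, 0.0, 0.0]
target = [0.8, 0.2, 0.5]
best, score = design_loop(start, target)
print(best, score)
```

In a real pipeline the feedback would come from a trained perception model or from human evaluators, and the proposals would be constrained to molecules that can actually be synthesized.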

The researchers suggest consumer-facing products could emerge within two to three years. That timeline assumes continued progress on both the perception and generation sides, plus hardware development for affordable sensors.

The Knowledge Source Question

One finding from the benchmark deserves attention: current models appear to draw their olfactory reasoning primarily from text rather than structural analysis of molecules. They've learned associations from descriptions in chemistry papers, fragrance databases, and general web content.

That's both encouraging and limiting. Encouraging because it shows how much latent knowledge exists in text. Limiting because reasoning from first principles about novel molecules will require models that actually understand the receptor biology, not just pattern-match against described examples.

The 64.4% top score likely reflects this limitation. Breaking through will probably require training on different data or developing new architectures that can reason about molecular interactions directly.