xAI, SpaceX's AI division, has signed an agreement with Anthropic to provide access to its Colossus 1 supercomputer, a Memphis-based facility that ranks among the world's largest AI training systems. The deal addresses one of Anthropic's most pressing constraints: compute capacity.

According to xAI's announcement, Anthropic plans to use the additional compute to expand capacity for Claude Pro and Claude Max subscribers. The partnership also includes an expression of interest from both companies in developing "multiple gigawatts of orbital AI compute capacity."

Colossus 1 and the Compute Crunch

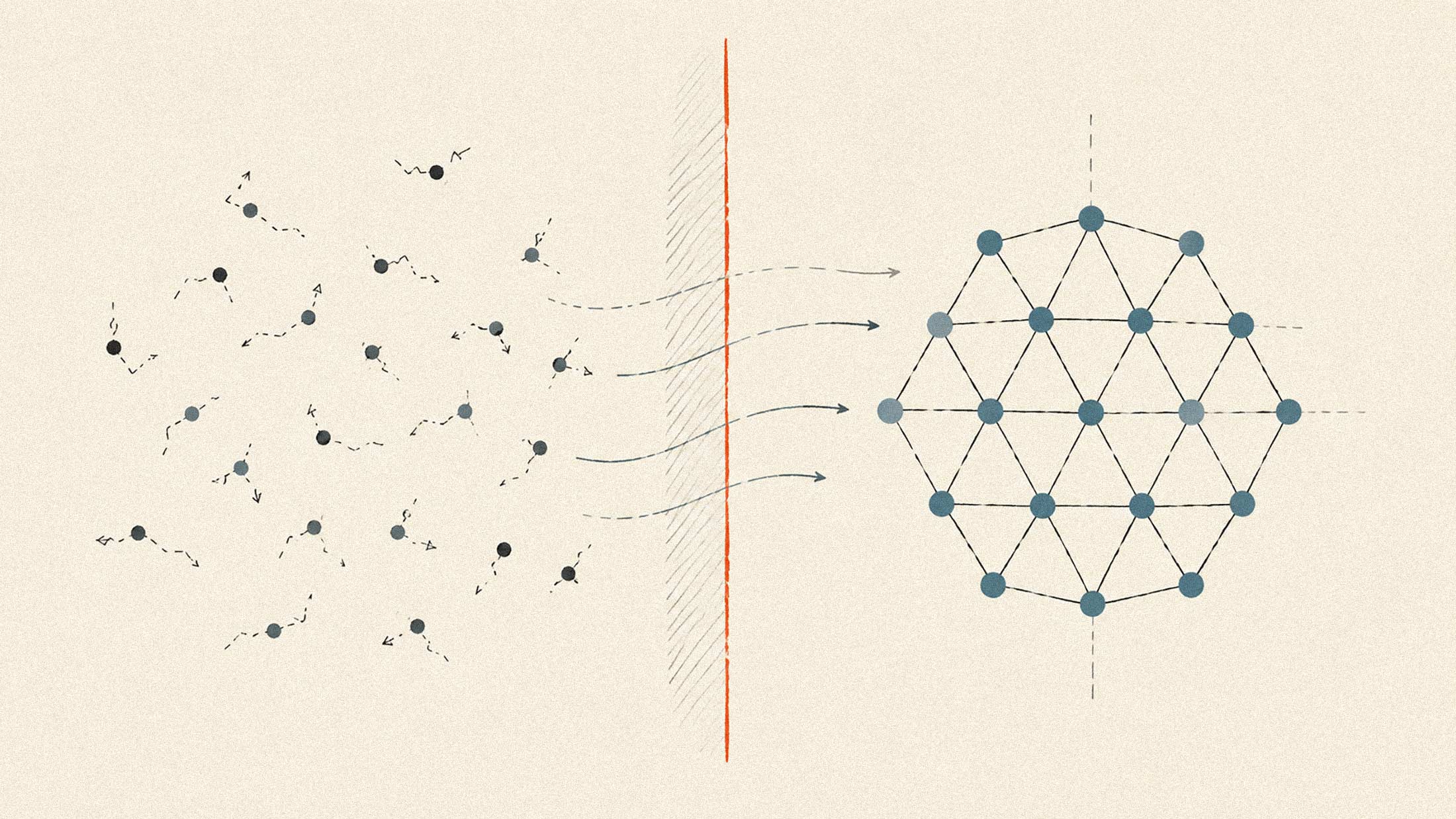

The Colossus 1 cluster now features over 220,000 NVIDIA GPUs, including H100, H200, and next-generation GB200 accelerators. The initial build took 122 days, and the facility was then doubled to 200,000 GPUs in a further 92 days, a pace that NVIDIA CEO Jensen Huang called "superhuman." The supercomputer delivers what xAI describes as "extreme parallel performance for large language models, multimodal systems, scientific simulations, and generative AI at frontier scale."

For Anthropic, the timing matters. The company has been navigating a well-documented compute shortage that has tightened Claude's usage limits throughout 2026. In March, Anthropic introduced peak-hour rate adjustments that caused session limits to deplete faster during weekday business hours. CEO Dario Amodei has publicly described the company as compute-constrained, a bottleneck that limits both model deployment and development velocity.

The infrastructure gap is not new. Anthropic raised billions in late 2024, but data center capacity takes 18 to 24 months to provision. Money raised then is only now beginning to translate into usable inference capacity. The xAI partnership offers a faster path to relief.

The Orbital Compute Question

Beyond the immediate compute deal, the announcement includes language about orbital AI infrastructure, with both companies expressing interest in partnering on "multiple gigawatts of orbital AI compute capacity." It argues that "the compute required to train and operate the next generation of these systems is outpacing what terrestrial power, land, and cooling can deliver on the timelines that matter."

SpaceX has already filed an FCC application for up to one million satellites functioning as orbital data centers. The company projects that launching one million tonnes of satellites annually could generate 100 gigawatts of AI compute capacity. SpaceX claims that orbital data centers could become more cost-effective than terrestrial facilities "within a few years."
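To put those two figures side by side, the short sketch below works out the power density they imply. It is a back-of-envelope check rather than SpaceX's own model: the function name and constants are illustrative, and the calculation simply divides the projected capacity by the projected launch mass, so the result is an average across everything launched (solar arrays, radiators, and structure included).

```python
# Back-of-envelope check of the figures quoted above (not SpaceX's own model).
# Names and constants here are illustrative assumptions.
GIGAWATT_W = 10**9   # watts per gigawatt
TONNE_KG = 1_000     # kilograms per metric tonne


def implied_power_density(capacity_gw, launch_mass_tonnes):
    """Return (kW per tonne, W per kg) implied by a capacity/mass pair."""
    watts = capacity_gw * GIGAWATT_W
    kw_per_tonne = watts / launch_mass_tonnes / 1_000
    w_per_kg = watts / (launch_mass_tonnes * TONNE_KG)
    return kw_per_tonne, w_per_kg


# 100 GW of compute from one million tonnes of satellites launched per year.
kw_per_tonne, w_per_kg = implied_power_density(100, 1_000_000)
print(f"{kw_per_tonne:.0f} kW per tonne, {w_per_kg:.0f} W per kg")
```

On these numbers, each tonne placed in orbit would need to support roughly 100 kW of compute, or about 100 W per kilogram.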

That projection comes with caveats. SpaceX's own pre-IPO filing, reported by Reuters, acknowledged that its "initiatives to develop orbital AI compute and in-orbit, lunar, and interplanetary industrialization are in early stages, involve significant technical complexity and unproven technologies, and may not achieve commercial viability."

Google has announced plans to test orbital AI data centers, projecting that launch costs must fall to $200 per kilogram before space-based compute becomes cost-effective relative to terrestrial energy costs. Deutsche Bank estimates orbital data centers won't reach parity with ground-based facilities until well into the 2030s.

Strategic Implications

The partnership illustrates how access to compute has become a competitive moat in AI development. Anthropic, which has maintained strict safety positions that recently cost it Pentagon contracts worth $200 million, is now tapping compute capacity from an entity that has signed classified AI agreements with the Department of Defense.

For SpaceX, the deal provides revenue and utilization for Colossus 1 infrastructure while xAI focuses its own capacity on training Grok models. SpaceX is reportedly targeting a mid-2026 IPO at a valuation exceeding $1.5 trillion, with orbital compute ambitions partly underwriting that valuation.

Whether the orbital compute partnership moves beyond "expressed interest" remains to be seen. For now, the immediate effect is straightforward: Anthropic gains access to one of the world's largest GPU clusters, and Claude users may see some relief from the capacity constraints that have defined much of 2026. Details on implementation timelines and capacity allocation have not been disclosed.