A company called Subquadratic is making bold claims about its SubQ model: 12 million tokens of context processed with what it calls the first fully subquadratic sparse-attention architecture in a frontier-class LLM. If the company's benchmarks hold, this could mark a genuine inflection point in how language models handle scale.

The Quadratic Problem

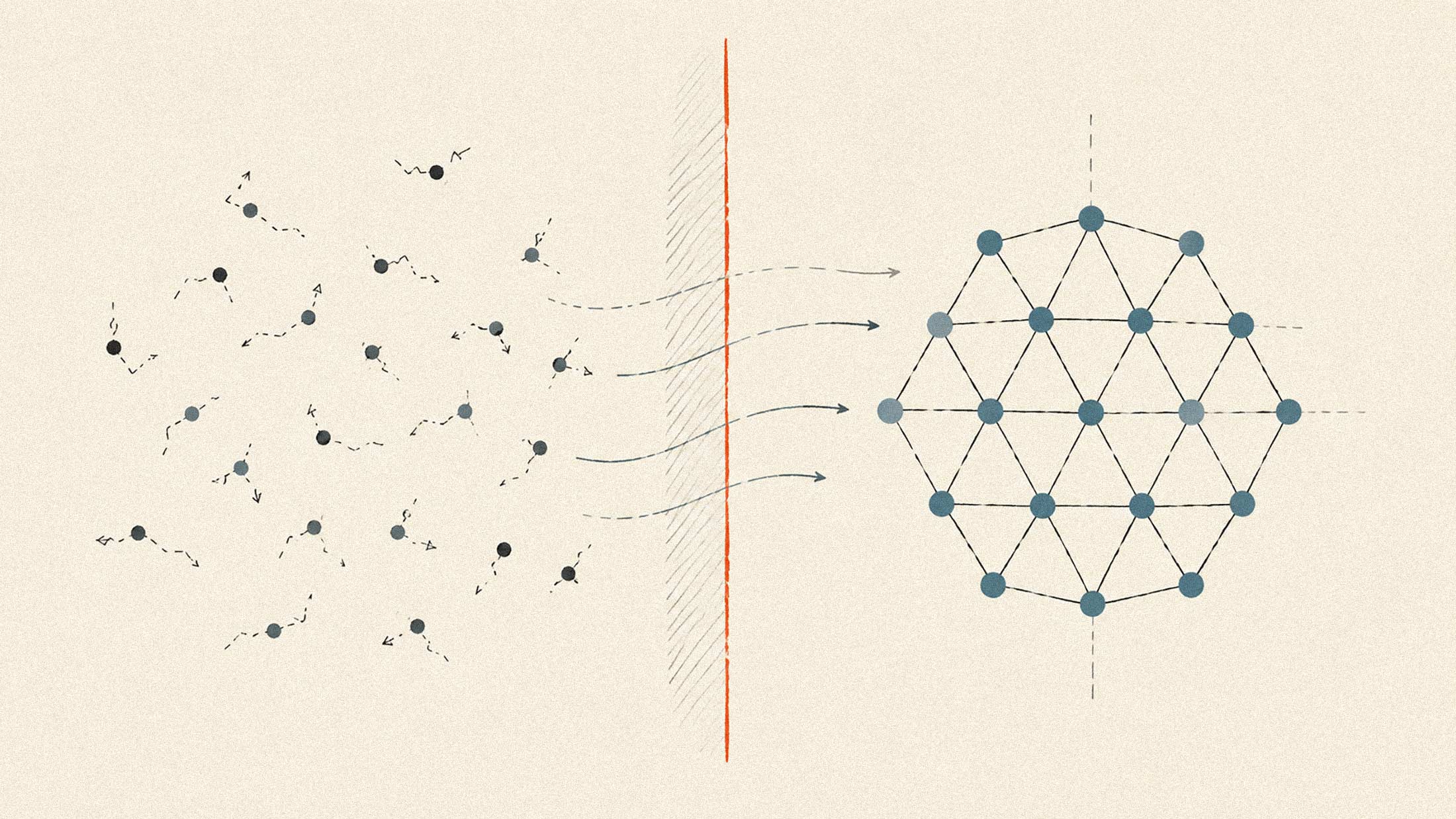

Standard transformer attention scores every pair of tokens in a sequence, so compute grows with the square of the context length. Double the context and you quadruple the compute. This is why most frontier models top out at 1 to 2 million tokens, and why running them at those lengths gets expensive fast.
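To make that scaling concrete, here is a minimal sketch that counts the query-key scores a dense attention layer computes for a single head. The function name is illustrative, and the count deliberately ignores constant factors such as head dimension and causal masking.

```python
def dense_attention_pairs(n_tokens: int) -> int:
    """Query-key scores computed by one dense attention head over n_tokens."""
    return n_tokens * n_tokens


for n in (1_000_000, 2_000_000, 12_000_000):
    print(f"{n:>12,} tokens -> {dense_attention_pairs(n):.2e} pair scores")

# Doubling the context length quadruples the pair count.
ratio = dense_attention_pairs(2_000_000) / dense_attention_pairs(1_000_000)
print(f"2M vs 1M tokens: {ratio:.0f}x the work")
```

Going from 1 million to 12 million tokens multiplies the pair count by 144, which is the gap any long-context architecture has to close.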

SubQ's approach is different. According to the company, its sparse-attention architecture identifies which token relationships actually matter and ignores the rest. The result, Subquadratic says, is compute that grows roughly linearly with context length: at 12 million tokens, the company claims about 1,000x less attention compute than standard attention.
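One way to read the 1,000x figure is as a per-token attention budget. The sketch below assumes, purely for illustration, that dense attention costs n × n pair scores and that a sparse scheme costs n × k, where k is the average number of keys each query attends to. It also ignores whatever it costs to choose those keys. This is a back-of-envelope reading of the claim, not a description of SubQ's actual mechanism.

```python
def implied_keys_per_query(n_tokens: int, claimed_reduction: float) -> float:
    """Solve (n * n) / (n * k) = claimed_reduction for k."""
    return n_tokens / claimed_reduction


k = implied_keys_per_query(12_000_000, 1_000)
print(f"Implied budget: about {k:,.0f} keys per query")
# prints "Implied budget: about 12,000 keys per query"
```

Under those assumptions, each token would attend to roughly 12,000 others, or 0.1% of the full context.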

The Numbers

The company claims SubQ achieves 92% accuracy on long-context tasks with near-zero latency. It says planning runs 5x faster than in current coding IDEs, with implementation running 2 to 4x faster. The company positions the model as capable of holding entire codebases in context and reasoning across all files in a single API call.

For context on cost: Claude Opus currently runs $5 per million input tokens and $25 per million output tokens. Subquadratic claims SubQ operates at a fraction of this cost, though specific pricing had not been publicly disclosed as of publication.
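As a rough yardstick, the quoted input price can be extrapolated to a 12-million-token prompt. This is illustrative arithmetic only: it assumes flat per-token pricing with no long-context surcharge or caching discount, and it does not imply that Claude Opus accepts prompts of that size.

```python
OPUS_INPUT_USD_PER_MILLION = 5.00  # input price quoted in this article


def input_cost_usd(n_tokens: int, usd_per_million: float) -> float:
    """Input-side cost of a single prompt at a flat per-token price."""
    return n_tokens / 1_000_000 * usd_per_million


cost = input_cost_usd(12_000_000, OPUS_INPUT_USD_PER_MILLION)
print(f"12M input tokens at $5 per million: ${cost:.2f}")
# prints "12M input tokens at $5 per million: $60.00"
```

At that rate, every full-context call would cost $60 before any output tokens are billed, which is the baseline a "fraction of this cost" claim would be measured against.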

Skepticism Is Warranted

The AI research community has seen this movie before. Kimi Linear, DeepSeek Sparse Attention, Mamba, RWKV: all promised subquadratic scaling, and all faced the same problem. Architectures that achieve linear complexity in theory often underperform quadratic attention on downstream benchmarks at frontier scale, or they end up hybrid, mixing subquadratic layers with standard attention and losing the pure scaling benefits.
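The cost of going hybrid can be sketched with a toy model. The assumptions here are illustrative and not drawn from any specific architecture: a fraction of layers use dense attention at n × n pair scores, the rest use sparse attention at n × k, and k is fixed at 12,000 keys per query (the budget at which a purely sparse stack would be 1,000x cheaper at 12 million tokens).

```python
def hybrid_speedup(n_tokens: int, keys_per_query: int, dense_fraction: float) -> float:
    """Attention speedup of a hybrid stack relative to an all-dense stack."""
    dense_cost = n_tokens * n_tokens
    sparse_cost = n_tokens * keys_per_query
    hybrid_cost = dense_fraction * dense_cost + (1 - dense_fraction) * sparse_cost
    return dense_cost / hybrid_cost


for fraction in (0.0, 0.05, 0.25):
    speedup = hybrid_speedup(12_000_000, 12_000, fraction)
    print(f"{fraction:.0%} dense layers -> {speedup:,.1f}x speedup")
```

In this model the speedup can never exceed 1 / dense_fraction: keeping a quarter of the layers dense caps the gain at just under 4x, however sparse the remaining layers are.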

One LessWrong analysis argued that most subquadratic attention mechanisms are better understood as constant-factor improvements than as fundamental architectural shifts. Whether SubQ breaks this pattern remains to be seen.

What It Would Mean

If SubQ's claims hold, the implications are significant. A 12-million-token context window at linear cost would enable applications that current models cannot economically support: full codebase analysis without chunking, long-horizon autonomous agents that maintain state across days of work, and enterprise document processing without RAG complexity.

Subquadratic describes itself as a frontier AI research and infrastructure company working on foundational architecture changes. The company is hiring and offering early access to developers building context-heavy applications.

The model is not yet generally available. Independent benchmarks will determine whether SubQ represents a real architectural breakthrough or another incremental improvement dressed in revolutionary language.