A new kind of memory module can cut server energy use by up to 30% on the memory-bound workloads straining data center grids worldwide, yet most people outside HPC circles have never heard of it.

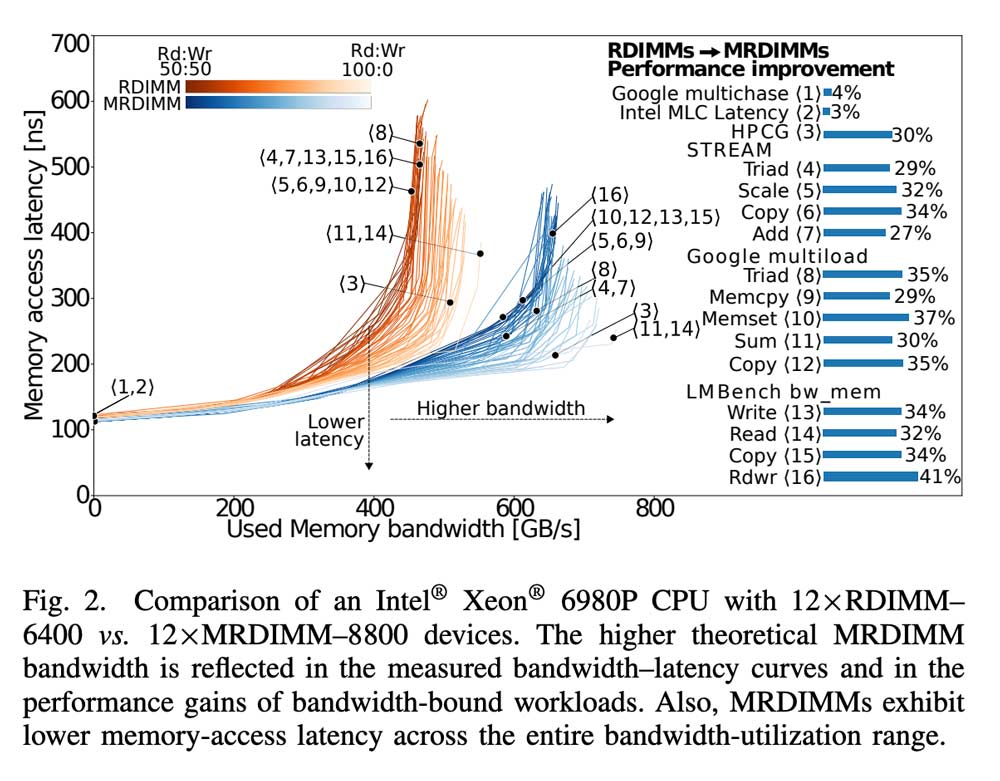

A paper published this month by researchers at the Barcelona Supercomputing Center, Micron, Intel, and the Polytechnic University of Catalonia provides the first production-server-grade evaluation of MRDIMMs. The findings: upgrading from conventional registered DIMMs to MRDIMMs extends memory bandwidth by 41%, yielding 27–41% higher performance for bandwidth-bound workloads. Latency improvements reach hundreds of nanoseconds, benefiting a broad class of workloads sensitive to memory latency.

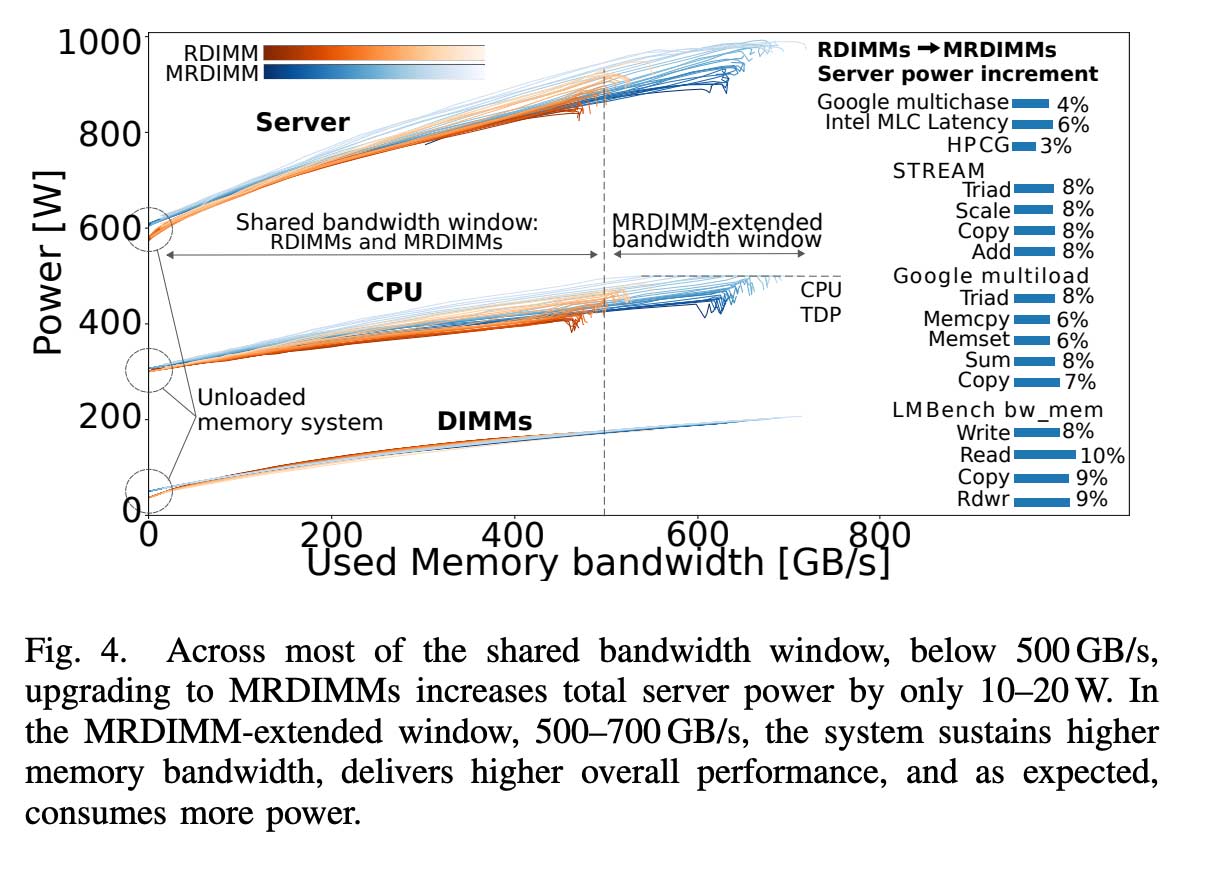

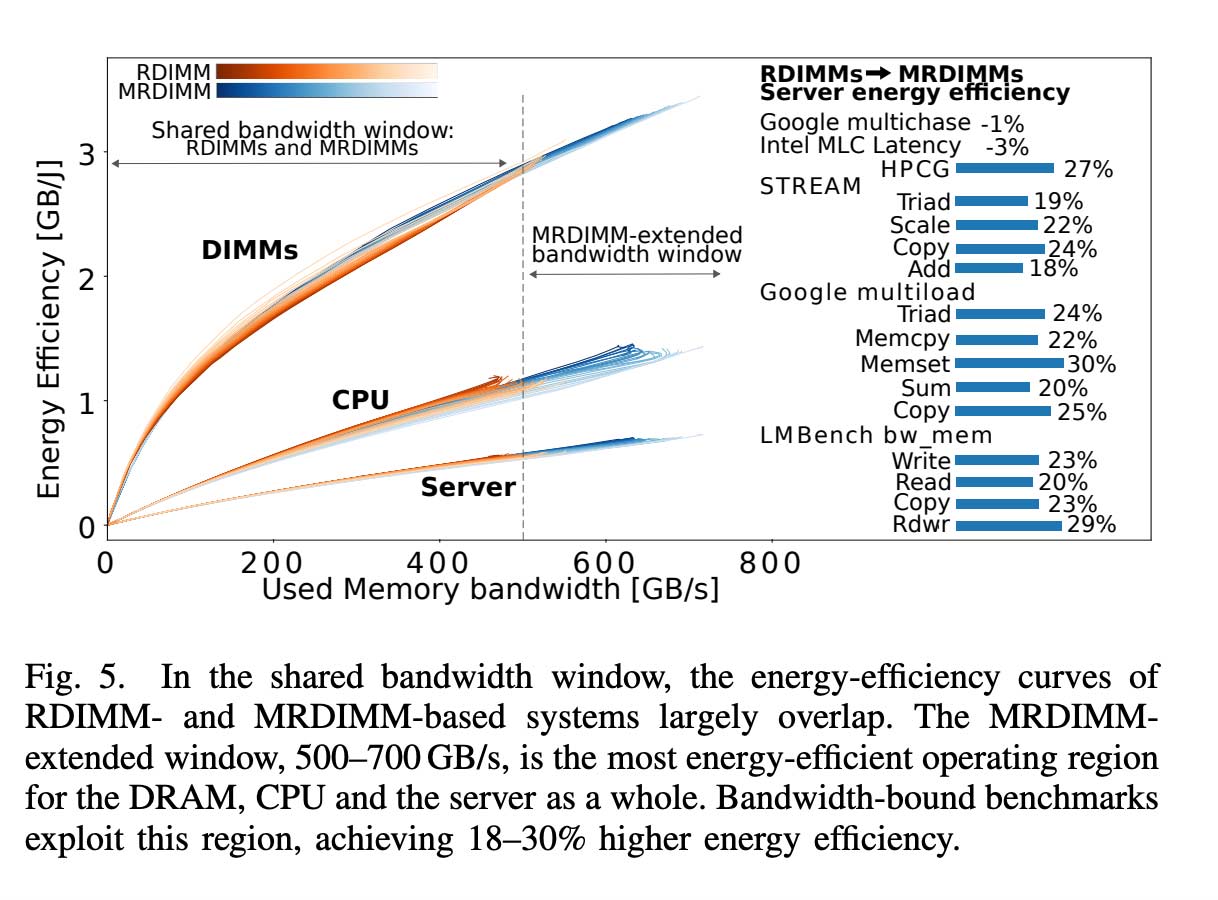

The energy implications are fascinating. At the same bandwidth utilization levels, RDIMMs and MRDIMMs exhibit similar power consumption. In the MRDIMM-extended bandwidth region, the performance improvements largely exceed the power increase, delivering up to 30% server energy savings for memory-bound workloads.

What Is an MRDIMM?

MRDIMM stands for Multiplexed Rank Dual Inline Memory Module. It is a dual-rank DDR5 module with an on-module multiplexing buffer that lets the CPU's memory controller access both ranks at once, so the memory channel can run faster than standard DDR5 speeds. Because both ranks operate simultaneously, an MRDIMM can deliver 128 bytes of data to the CPU at a time, twice the 64 bytes of a regular DDR5 DIMM.

This raises the data rate on the memory channel from the 4,800 MT/s of common DDR5 implementations today to 8,800 MT/s. JEDEC expects Gen1 MRDIMMs to deliver 8,800 MT/s, Gen2 12,800 MT/s, and Gen3, likely to arrive in the next decade, 17,600 MT/s.
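
The generation roadmap follows a simple pattern if one assumes, as MRDIMMs are commonly described, that the buffer multiplexes the two ranks 2:1, so the host-side data rate is twice the rate at which each rank runs. The sketch below works backwards from the JEDEC targets quoted above; the 2:1 factor and the per-rank rates it prints are assumptions of this illustration, not figures from the paper.

```python
# Sketch: relate an MRDIMM's host-side data rate to the per-rank DRAM rate.
# ASSUMPTION: two ranks are multiplexed 2:1 onto the channel, so the host
# interface runs at twice the data rate of each individual rank.
MUX_FACTOR = 2

# Host-side targets per generation, as quoted in the text (MT/s).
HOST_RATE_MT_S = {"Gen1": 8_800, "Gen2": 12_800, "Gen3": 17_600}


def per_rank_rate(host_rate_mt_s: int, mux_factor: int = MUX_FACTOR) -> float:
    """Data rate each rank must sustain to reach a given host-side rate."""
    return host_rate_mt_s / mux_factor


for gen, host in HOST_RATE_MT_S.items():
    print(f"{gen}: host {host:,} MT/s -> each rank ~{per_rank_rate(host):,.0f} MT/s")
```

Under that assumption, a Gen1 module reaches 8,800 MT/s on the channel even though each rank individually runs at about 4,400 MT/s.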

One of MRDIMM's key advantages is that it can serve as a drop-in replacement for server memory upgrades. The server evaluated in the paper, as well as other forthcoming DDR5 CPUs and platforms, supports both RDIMM and MRDIMM. That matters for data center operators who do not want to rip out entire racks.

The Test Setup

The researchers studied a dual-socket Intel Xeon 6980P (Granite Rapids) server, with each CPU comprising 128 cores operating in Latency Optimized mode at a maximum frequency of 3.2 GHz. Each CPU has 12 DDR5 memory channels, populated with one dual-rank DIMM per channel. They compared DDR5 RDIMM-6400 against MRDIMM-8800.
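
To put the two configurations in perspective, here is the nominal peak-bandwidth arithmetic for this server. It assumes 8 bytes of data per transfer on each DDR5 channel (two 32-bit subchannels, ECC bits excluded) and treats every channel as fully usable. Both are simplifications of this sketch, and the results are theoretical ceilings, not the bandwidths measured in the paper.

```python
# Sketch: nominal peak DRAM bandwidth for the evaluated server configuration.
# ASSUMPTIONS: 8 data bytes per transfer per DDR5 channel (ECC excluded),
# 12 channels per socket, 2 sockets. These are theoretical upper bounds.
BYTES_PER_TRANSFER = 8
CHANNELS_PER_SOCKET = 12
SOCKETS = 2


def peak_bandwidth_gb_s(rate_mt_s: int, channels: int) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    return rate_mt_s * 1e6 * BYTES_PER_TRANSFER * channels / 1e9


for name, rate in [("RDIMM-6400", 6_400), ("MRDIMM-8800", 8_800)]:
    per_socket = peak_bandwidth_gb_s(rate, CHANNELS_PER_SOCKET)
    print(f"{name}: {per_socket:.1f} GB/s per socket, "
          f"{per_socket * SOCKETS:.1f} GB/s per server")

# Ratio of nominal peaks; sustained (measured) bandwidth need not follow it exactly.
ratio = 8_800 / 6_400
print(f"Nominal peak ratio: {ratio:.3f}")
```

The paper's 41% bandwidth gain is a measured figure; real hardware sustains less than these nominal peaks, and how far below depends on the configuration.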

Earlier studies had reported substantial increases in power consumption in servers equipped with MRDIMMs, with some observing approximately a 50% increase in both DRAM and total server power. These observations contributed to the prevailing perception that the performance gains enabled by MRDIMMs come at a significant power cost. The new research presents a more complete picture.

The key insight is distinguishing between bandwidth regions. When comparing apples to apples at the same bandwidth utilization, power draw is similar. When you push into the bandwidth range that only MRDIMMs can reach, yes, power goes up. But performance goes up faster.
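
The reason "performance goes up faster" translates into savings is that energy is average power multiplied by runtime. For a fixed job, the energy ratio is the power ratio divided by the speedup, so energy falls whenever the speedup exceeds the power increase. The inputs below are hypothetical, chosen only to illustrate the effect; they are not the paper's measurements.

```python
# Sketch: why higher power can still mean lower energy for a fixed job.
# Energy = average power x runtime. If a job runs `speedup` times faster while
# average server power rises by a factor `power_ratio`, then
#   E_new / E_old = power_ratio / speedup.


def energy_ratio(power_ratio: float, speedup: float) -> float:
    """Energy of the faster configuration relative to the baseline."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return power_ratio / speedup


def energy_change_pct(power_ratio: float, speedup: float) -> float:
    """Percentage change in energy (negative means energy is saved)."""
    return (energy_ratio(power_ratio, speedup) - 1.0) * 100.0


# HYPOTHETICAL inputs, for illustration only.
print(f"{energy_change_pct(1.10, 1.35):+.1f}%")  # 10% more power, 35% faster
print(f"{energy_change_pct(1.10, 1.05):+.1f}%")  # 10% more power, only 5% faster
```

The first case saves energy because the speedup outpaces the power increase. The second uses more energy because it does not.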

Why This Matters Now

AI training and inference are increasingly bottlenecked not by compute but by memory bandwidth. Large language models spend enormous amounts of time waiting for data to move from memory to processors. Micron describes MRDIMM as the ideal memory solution for memory-intensive workloads like AI and data centers.
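
A back-of-the-envelope estimate shows why bandwidth caps this kind of workload. If generating each token requires streaming every model weight from DRAM once, then tokens per second can be no higher than bandwidth divided by model size in bytes. The model size, the 16-bit weight format, and the use of the nominal per-socket peaks (12 channels of DDR5-6400 vs 8800) are all hypothetical assumptions of this sketch. It also ignores KV-cache traffic, compute time, and batching.

```python
# Sketch: a bandwidth-only ceiling on LLM decode speed.
# ASSUMPTIONS (hypothetical): batch size 1, every weight read from DRAM once per
# generated token, KV-cache traffic and compute time ignored, full nominal
# peak bandwidth usable. Real throughput would be lower.


def max_tokens_per_second(params_billion: float, bytes_per_param: float,
                          bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s when decoding is purely bandwidth bound."""
    bytes_per_token = params_billion * 1e9 * bytes_per_param
    return bandwidth_gb_s * 1e9 / bytes_per_token


# Hypothetical 8-billion-parameter model with 2-byte (16-bit) weights,
# at nominal per-socket peak bandwidths in GB/s.
for name, bw in [("RDIMM-6400", 614.4), ("MRDIMM-8800", 844.8)]:
    print(f"{name}: <= {max_tokens_per_second(8, 2, bw):.1f} tokens/s")
```

In this regime the ceiling scales linearly with bandwidth, which is why bandwidth-bound workloads are the ones that benefit most from MRDIMMs.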

Meanwhile, expanding data center capacity, the rapid rise of artificial intelligence workloads, and mounting physical constraints on the power grid are converging to push electricity demand higher. The latest forecasts suggest that this trend is not temporary, but rather a defining feature of the U.S. power system in the second half of the decade.

US data centers consumed more than 4% of total electricity in 2023 and are projected to reach 9% by 2030. More than 30 utilities cited data centers as a top growth driver in their earnings reports, making AI the primary catalyst for the largest utility investment cycle in American history. A technology that delivers up to 30% server energy savings on memory-bound workloads, among the most power-hungry in the data center, could materially change those projections.
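
How far a workload-level saving moves a fleet-wide projection depends on what share of total energy goes to workloads that actually run in the MRDIMM-extended bandwidth region. A simple Amdahl-style product makes that explicit. The shares below are hypothetical placeholders, and the 30% is the best case reported for memory-bound workloads, not an average.

```python
# Sketch: fleet-level savings when only part of the load benefits.
# Saved fraction of total energy = (share of energy on benefiting workloads)
#                                  x (fractional savings on those workloads).


def fleet_savings(benefiting_share: float, workload_savings: float) -> float:
    """Fraction of total energy saved (all arguments are fractions in [0, 1])."""
    if not (0.0 <= benefiting_share <= 1.0 and 0.0 <= workload_savings <= 1.0):
        raise ValueError("both arguments must be fractions between 0 and 1")
    return benefiting_share * workload_savings


# HYPOTHETICAL shares of total energy, with the best-case 30% savings applied.
for share in (0.10, 0.25, 0.50):
    print(f"share {share:.0%}: {fleet_savings(share, 0.30):.1%} of total energy saved")
```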

Europe's Role

The paper's authorship tells its own story. The Barcelona Supercomputing Center is Spain's largest research center, with more than 1,400 professionals and activities closely tied to competitive European projects. MareNostrum 5, a pre-exascale EuroHPC supercomputer hosted at BSC, has a total peak computational power of 314 PFlops.

Europe is using its supercomputing centers as testbeds for what hyperscalers will deploy at scale. This is the kind of infrastructure research that can shape procurement decisions at Microsoft, Google, and Amazon. The collaboration between academic researchers, Micron, and Intel behind the paper suggests the memory industry is taking MRDIMMs seriously.

Intel's documentation notes that memory-bound workloads such as high-performance computing, artificial intelligence, and premium cloud applications benefit significantly from MCR DIMM (Multiplexer Combined Ranks DIMM), Intel's term for the same multiplexed-rank approach. Granite Rapids is the industry's first platform to support the technology.

Servers using MRDIMM-12800 are expected to launch in 2026, with future MRDIMM modules leveraging still faster DRAMs and advanced signaling innovations to achieve even higher speeds and capacities.

For data center operators watching their compute requirements multiply while grid operators struggle to keep up with demand, a drop-in memory upgrade that can cut server energy by up to 30% on memory-bound workloads is not a minor optimization. It is the kind of boring infrastructure improvement that actually moves the needle on whether the AI buildout is sustainable. The hyperscalers are already paying attention to technologies like this that can help them meet surging AI demand.