A new paper from Yann LeCun's research circle has landed what may be the cleanest endorsement yet of his long-running thesis that large language models are not the path to general machine intelligence. Titled "LeWorldModel: Stable End-to-End Joint-Embedding Predictive Architecture from Pixels" (arXiv:2603.19312), the work was produced by researchers at Mila, NYU, Samsung SAIL, and Brown. It does not replace LLMs. It demonstrates something LLMs cannot do, and it does it on a single GPU.

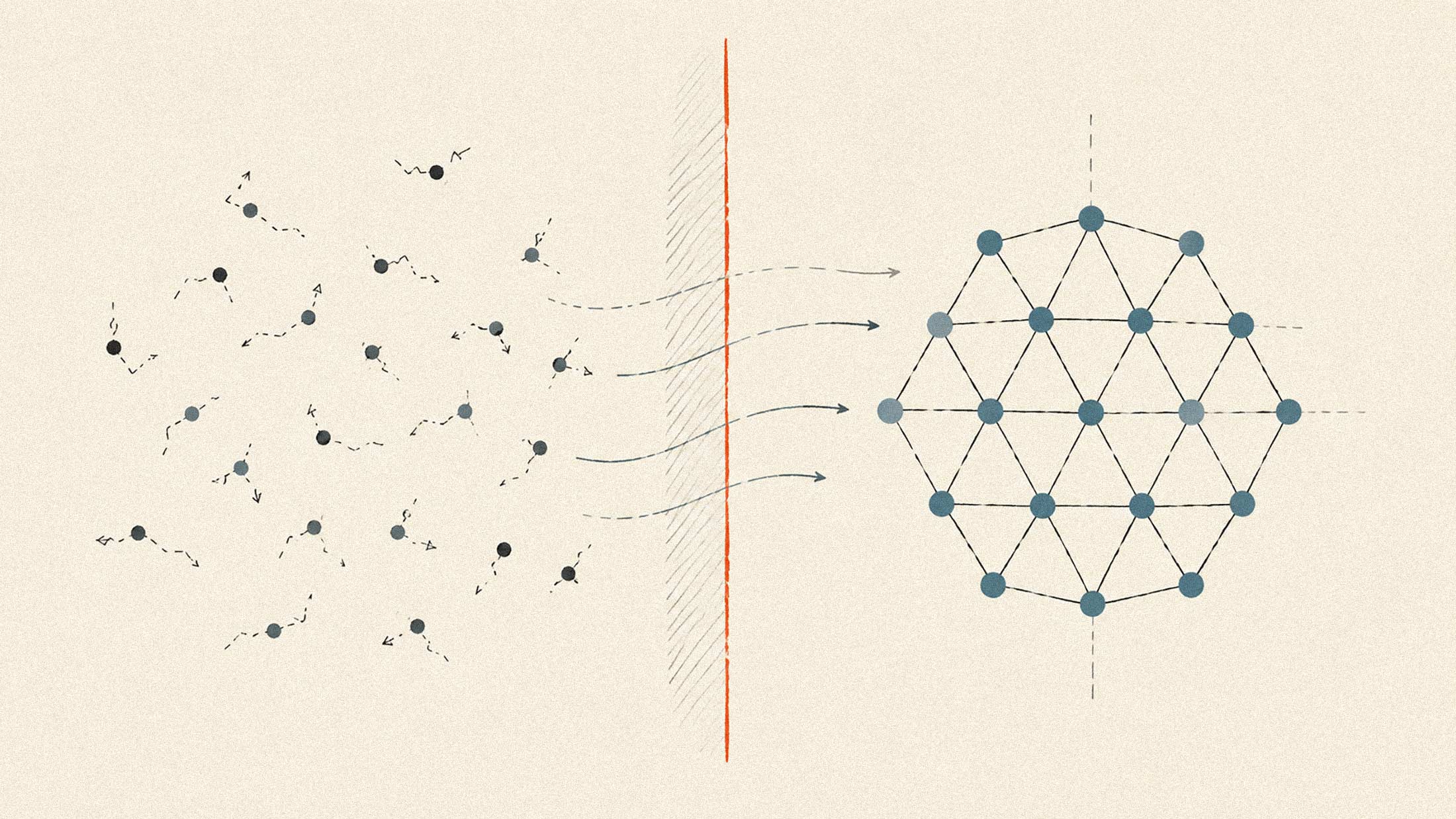

JEPA, short for Joint-Embedding Predictive Architecture, is the approach LeCun has championed as an alternative to the token-by-token generation that defines the current AI stack. The pitch has always been that real intelligence requires a compact internal model of the world that can reason about physics, predict consequences, and plan actions, not just predict the next word. The catch was that JEPA models kept collapsing during training, with the network cheating by making every internal representation look identical. The workarounds were ugly: up to six loss terms, frozen pre-trained encoders, exponential moving averages. None of it was clean.

A Two-Loss Fix

LeWM resolves this with two loss terms. The first is a simple mean-squared-error prediction of the next embedding. The second is a regularizer called SIGReg that pushes the latent space toward an isotropic Gaussian distribution using normality tests on random projections, derived from the Cramér-Wold theorem. That piece is what keeps the representation from collapsing. The whole system has essentially one meaningful hyperparameter. Training is smooth, stable, and monotonic end-to-end from raw pixels.

The scale is what should get attention. LeWM has roughly 15 million parameters. That is two orders of magnitude below what most "foundation" models ship at. It runs on a single standard GPU and converges in a few hours on offline pixel-and-action data. No reward signal. No large pre-training corpus. No fleet of H100s. A graduate student can iterate on this.

Where the Numbers Land

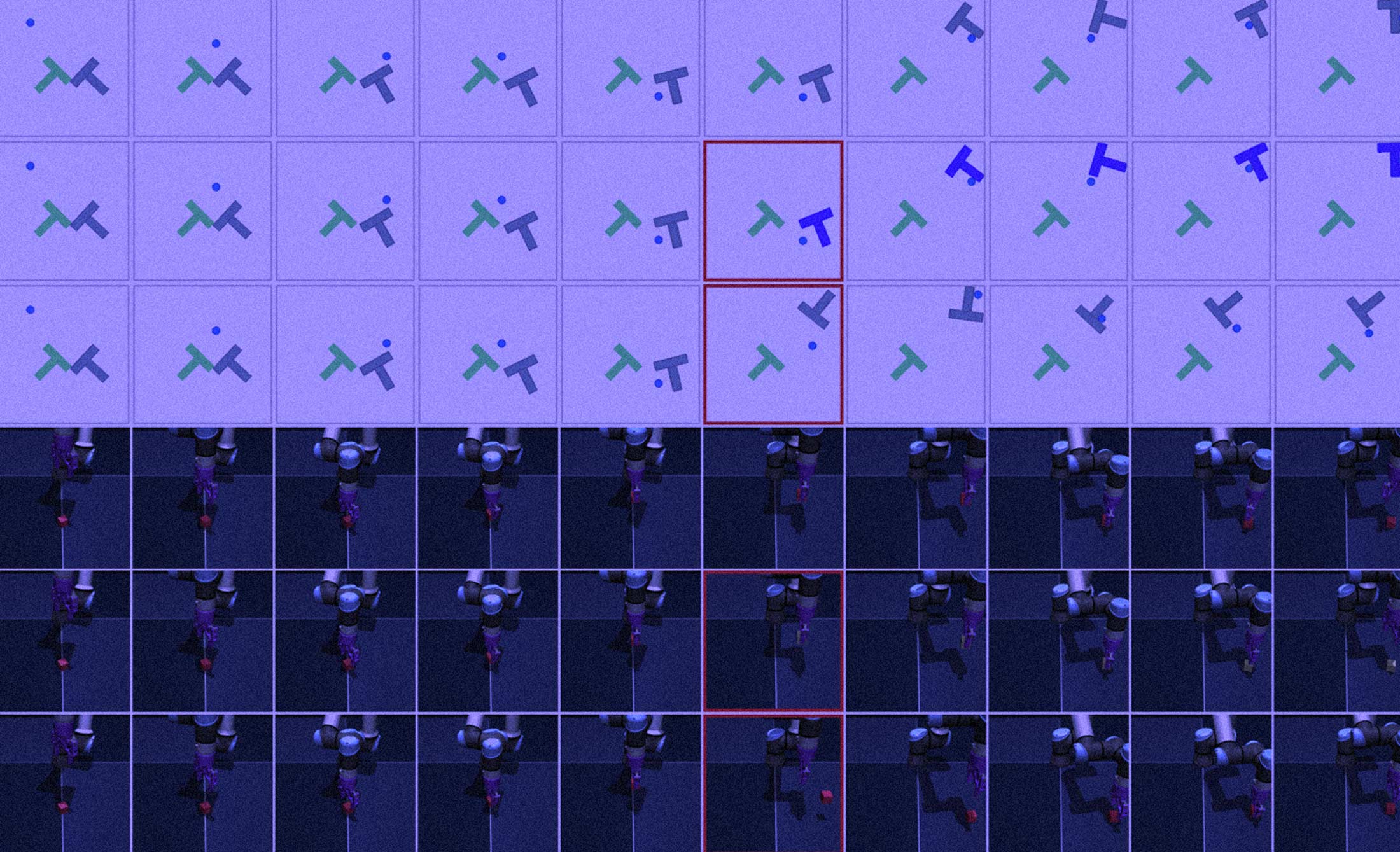

Planning in the learned latent space takes about one second per full plan, compared to roughly 47 seconds for existing foundation-model-based world models like DINO-WM. That is a 48x speedup, and it falls out of the architecture: each frame is compressed into a single 192-dimensional latent token, about 200 times fewer tokens than alternatives use. On robotics-style benchmarks including Push-T block pushing, Reacher arm control, and 3D pick-and-place, LeWM either matches or outperforms prior JEPA baselines and the heavier foundation-model world models.

The physics piece is the most interesting finding. When the authors probed the learned latent space with simple linear classifiers, it encoded real physical quantities: object positions, velocities, approximate dynamics. The model reliably flags surprise when shown physically impossible events, such as an object teleporting mid-trajectory. This is the compact, physics-aware internal representation the embodied AI community has been asking for, and it now exists in a form any reasonably funded lab can reproduce.

The Honest Limitations

Short-horizon planning only, about five steps into the future. Simulated environments only, no real robots yet. Action-labeled offline data is still required. Decoder visualizations blur fine details over long rollouts. The model underperforms slightly on the simplest task in the benchmark set, which the authors attribute to the Gaussian prior being a poor fit for very low-intrinsic-dimensionality environments.

None of that diminishes what this represents. LLMs will keep scaling and will keep being useful for the things they are good at. LeWM is the first credible seed of something parallel: a class of model that grounds actions in physical dynamics, plans rapidly in compact latent spaces, and runs on hardware that fits in a lab rather than a data center. Hybrid systems, with an LLM handling language and broad reasoning and a JEPA-style model grounding physical action, now have a practical path forward. That is the architecture LeCun has been describing for years. The first clean implementation of its hardest piece just landed, and it weighs 15 million parameters.