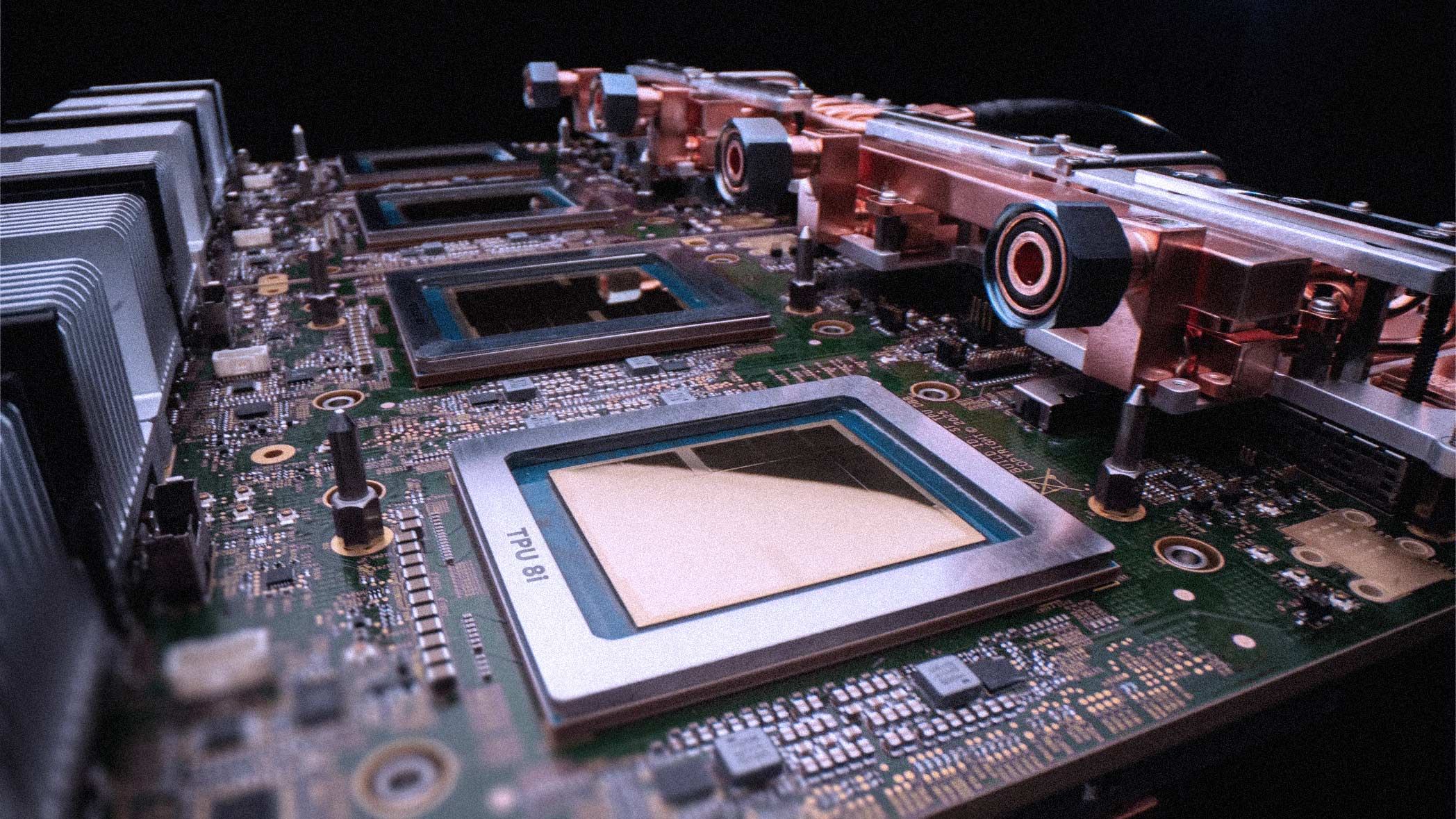

Google just made a structural bet on how AI compute will evolve. At Cloud Next 2026 in Las Vegas, the company unveiled its eighth-generation Tensor Processing Units: two separate chips, the TPU 8t for training and the TPU 8i for inference. The split marks the first time Google has released dedicated silicon for each workload, a design philosophy that mirrors what AWS has done with Trainium and Inferentia.

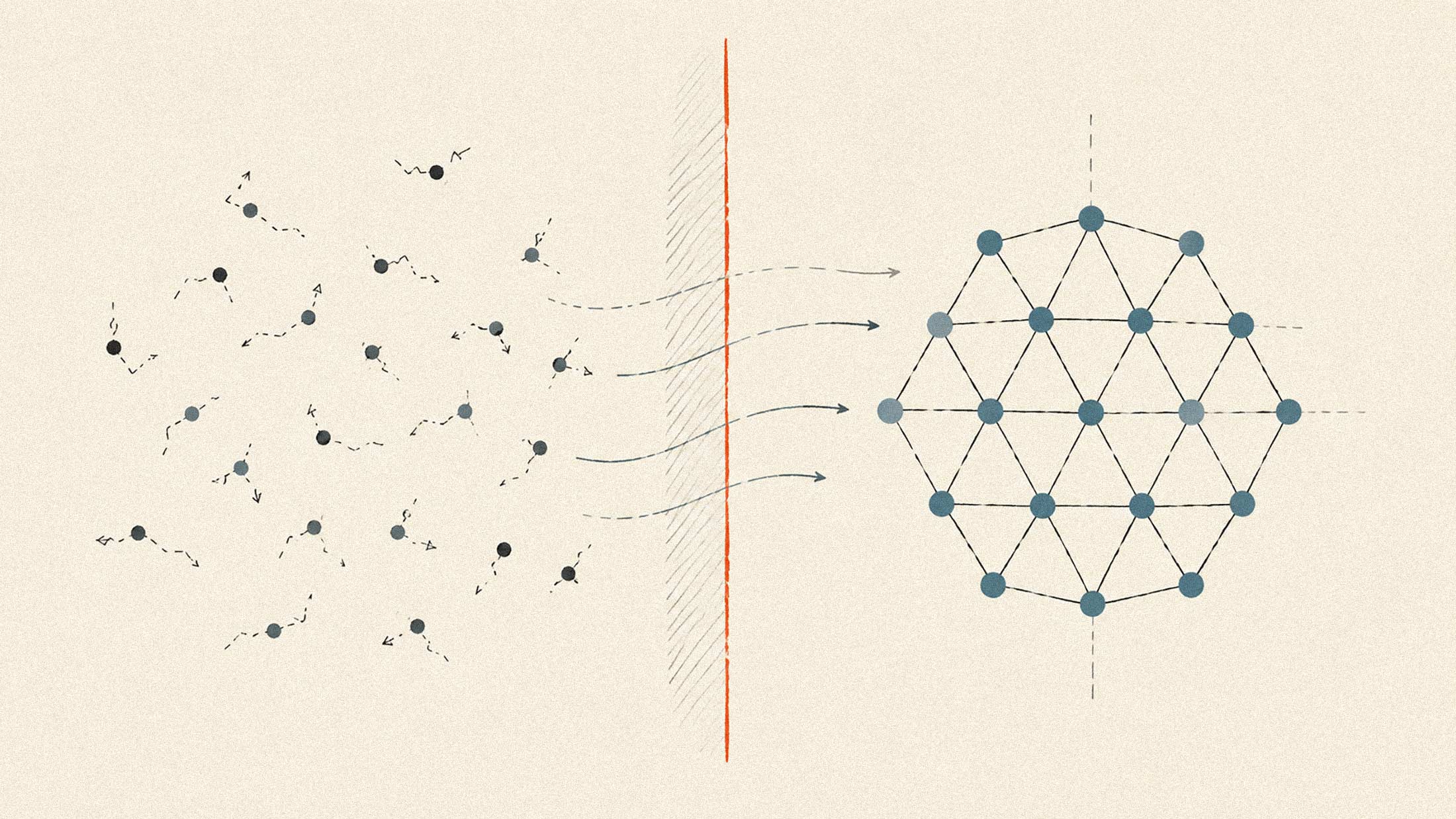

The decision stems from internal conversations with DeepMind about where bottlenecks would emerge as AI agents become standard infrastructure. According to SVP Amin Vahdat, the hardware was designed in partnership with DeepMind and reflects "purpose-built architectures" tailored to model training and agent-scale inference.

TPU 8t: Training at Scale

The TPU 8t is built to cut frontier model development cycles from months to weeks. A single superpod scales to 9,600 chips and delivers 121 exaflops of FP4 compute, nearly three times the per-pod performance of Ironwood. Memory capacity doubles to two petabytes of HBM per superpod, and interchip bandwidth is double that of its predecessor.
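To put the pod-level figures in per-chip terms, here is a rough back-of-envelope sketch. It assumes decimal units (1 exaflop = 1,000 petaflops, 1 PB = 1,000,000 GB) and treats the quoted "two petabytes" as exact, which it likely is not, so the results are approximations rather than published per-chip specs. The function names are illustrative only.

```python
# Back-of-envelope per-chip figures derived from the superpod numbers quoted above.
# Assumption: decimal units, and the rounded pod totals are treated as exact.
POD_CHIPS = 9_600
POD_FP4_EXAFLOPS = 121
POD_HBM_PETABYTES = 2


def per_chip_petaflops(pod_exaflops: float, chips: int) -> float:
    """Convert pod-level exaflops into petaflops per chip."""
    return pod_exaflops * 1_000 / chips


def per_chip_hbm_gb(pod_petabytes: float, chips: int) -> float:
    """Convert pod-level HBM in petabytes into gigabytes per chip."""
    return pod_petabytes * 1_000_000 / chips


if __name__ == "__main__":
    print(f"{per_chip_petaflops(POD_FP4_EXAFLOPS, POD_CHIPS):.1f} PFLOPS FP4 per chip")
    print(f"{per_chip_hbm_gb(POD_HBM_PETABYTES, POD_CHIPS):.0f} GB HBM per chip")
```

By this estimate, each chip contributes roughly 12.6 petaflops of FP4 compute and a little over 200 GB of HBM.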

Google introduced a new networking layer, Virgo Network, which allows 134,000 chips to operate as a single fabric within one data center and supports scaling beyond a million chips across multiple facilities. Combined with JAX and Pathways, the system achieves near-linear scaling, a claim backed by up to 47 petabits per second of non-blocking bisection bandwidth. The architecture targets more than 97 percent "goodput," Google's term for the ratio of productive compute time to total uptime.
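The goodput definition above is simple enough to express directly. The sketch below assumes a hypothetical 30-day (720-hour) training run to show how much non-productive time a 97 percent target leaves; the run length and function names are illustrative, not figures from Google.

```python
# Goodput as defined in the article: productive compute time / total uptime.
# The 720-hour run below is a hypothetical example, not a Google figure.


def goodput(productive_hours: float, total_hours: float) -> float:
    """Return the fraction of total uptime spent on productive compute."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    return productive_hours / total_hours


def lost_hours_budget(total_hours: float, target: float = 0.97) -> float:
    """Return how many hours may be lost while still meeting the goodput target."""
    return total_hours * (1 - target)


if __name__ == "__main__":
    run_hours = 30 * 24  # hypothetical 30-day run
    budget = lost_hours_budget(run_hours)
    print(f"Budget for restarts, stalls and failures: {budget:.1f} hours")
    print(f"Goodput if exactly that much is lost: {goodput(run_hours - budget, run_hours):.2%}")
```

On a run of that length, a 97 percent target leaves a little under a day for checkpoint restores, hardware failures, and other interruptions.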

New features include TPUDirect RDMA, which bypasses host CPUs to enable direct data transfers between memory and NICs, and TPU Direct Storage for direct memory access between the chip and managed storage.

TPU 8i: Built for the Age of Agents

The inference-focused TPU 8i reflects where most enterprise compute demand actually sits. It scales to 1,152 chips per pod and delivers 11.6 exaflops of FP8 compute with 331.8TB of total HBM capacity per pod. Google claims 80 percent better performance-per-dollar compared to Ironwood, which it says translates to nearly double the customer volume served at the same cost.
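The link between "80 percent better performance-per-dollar" and "nearly double the customer volume" is straightforward arithmetic. The sketch below assumes that served volume scales linearly with performance-per-dollar at a fixed budget, which is a simplification; the function names are illustrative.

```python
# Translating a performance-per-dollar gain into volume and cost terms.
# Assumption: served volume scales linearly with performance-per-dollar.


def volume_multiplier(perf_per_dollar_gain: float) -> float:
    """Volume served at the same cost, relative to the baseline."""
    return 1 + perf_per_dollar_gain


def cost_reduction_at_same_volume(perf_per_dollar_gain: float) -> float:
    """Fractional cost saving when serving the same volume as the baseline."""
    return 1 - 1 / (1 + perf_per_dollar_gain)


if __name__ == "__main__":
    gain = 0.80  # the claimed improvement over Ironwood
    print(f"Volume at same cost: {volume_multiplier(gain):.2f}x")
    print(f"Cost saving at same volume: {cost_reduction_at_same_volume(gain):.1%}")
```

An 80 percent gain gives 1.8x the volume at equal spend, or roughly a 44 percent lower bill for the same volume.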

Memory constraints have been a persistent problem for inference, particularly when running large language models with massive key-value caches. The 8i addresses this by pairing 288GB of HBM with 384MB of on-chip SRAM, triple the SRAM of Ironwood. Google also introduced a Collectives Acceleration Engine that reduces on-chip latency by up to 5x for chain-of-thought and autoregressive decoding.
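To illustrate why key-value caches strain inference memory, the sketch below uses the standard sizing formula for a transformer KV cache (two tensors per layer, one for keys and one for values). The model shape is entirely hypothetical and is not tied to any Google or DeepMind model; the calculation also ignores the HBM taken up by model weights and activations. It also cross-checks the quoted per-pod HBM total against 1,152 chips at 288GB each.

```python
# KV-cache sizing sketch. The model shape below is hypothetical.
# Assumption: decimal units (1 GB = 1e9 bytes, 1 TB = 1,000 GB).

HBM_PER_CHIP_GB = 288
CHIPS_PER_POD = 1_152


def kv_bytes_per_token(n_layers: int, n_kv_heads: int, head_dim: int, bytes_per_elem: int) -> int:
    """Bytes of KV cache per token: keys and values for every layer."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def pod_hbm_tb(chips: int, hbm_per_chip_gb: float) -> float:
    """Total pod HBM in terabytes."""
    return chips * hbm_per_chip_gb / 1_000


if __name__ == "__main__":
    # Hypothetical model: 80 layers, 8 KV heads, head dimension 128, 16-bit cache.
    per_token = kv_bytes_per_token(n_layers=80, n_kv_heads=8, head_dim=128, bytes_per_elem=2)
    max_tokens = HBM_PER_CHIP_GB * 1e9 / per_token  # upper bound, ignores weights
    print(f"KV cache per token: {per_token / 1024:.0f} KiB")
    print(f"Upper bound on cached tokens per chip: {max_tokens:,.0f}")
    print(f"Pod HBM: {pod_hbm_tb(CHIPS_PER_POD, HBM_PER_CHIP_GB):.1f} TB")
```

Even under these generous assumptions, a few hundred kilobytes per token adds up quickly across long contexts and many concurrent sessions, which is the pressure the larger HBM and SRAM pools are meant to relieve.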

A new topology called Boardfly replaces the 3D torus used in training chips. By increasing ports and reducing network diameter by 50 percent, Boardfly delivers a 50 percent latency improvement over previous architectures.
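For a sense of what "network diameter" means here, the sketch below computes the worst-case hop count of a 3D torus with wraparound links, which is the sum of half of each dimension. The 16x16x16 shape is a hypothetical example, and the article does not describe how Boardfly is actually wired, so the halved figure simply restates the claimed 50 percent reduction rather than modeling the new topology. Hop count is also only one contributor to end-to-end latency.

```python
# Worst-case hop count (diameter) of a torus with wraparound links.
# The dimensions used below are hypothetical, not a published pod layout.
from math import prod


def torus_diameter(dims: tuple[int, ...]) -> int:
    """Diameter of a torus: the farthest node is half-way around each ring."""
    return sum(k // 2 for k in dims)


if __name__ == "__main__":
    dims = (16, 16, 16)  # hypothetical 3D torus
    diameter = torus_diameter(dims)
    print(f"{prod(dims)} chips, torus diameter: {diameter} hops")
    print(f"A 50 percent smaller diameter would be {diameter // 2} hops")
```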

Vertical Integration as Strategy

Both chips run on Google's Axion Arm-based CPUs and use fourth-generation liquid cooling. Google claims double the performance per watt compared to Ironwood, enabled in part by real-time power management that adjusts draw based on demand.

The company positioned the release as evidence that owning the full stack, from silicon to models, offers efficiency gains impossible with independently designed components. It is a direct challenge to customers running on Nvidia hardware, though Google itself remains a major Nvidia customer.

Anthropic has committed to using multiple gigawatts of Google TPU capacity. Citadel Securities and all 17 U.S. Department of Energy national laboratories are also running workloads on Google's custom silicon. Both the 8t and 8i will be generally available later this year as part of Google's AI Hypercomputer architecture.