Multipath Reliable Connection, a networking protocol designed to solve the fundamental bandwidth and reliability problems that plague AI training clusters, is going open. OpenAI has contributed MRC to the Open Compute Project, and the industry's major silicon vendors are lining up to implement it.

The contribution marks a significant shift in how AI infrastructure companies approach network standardization. Rather than each hyperscaler developing proprietary solutions to the same underlying problems, MRC provides a common specification that network equipment vendors can implement consistently.

What MRC Actually Does

Traditional Ethernet relies on Equal-Cost Multipath routing, which uses hash-based load balancing to distribute traffic. The approach works reasonably well for web traffic and streaming video, where flows are numerous and randomly distributed. AI training generates a fundamentally different traffic pattern.

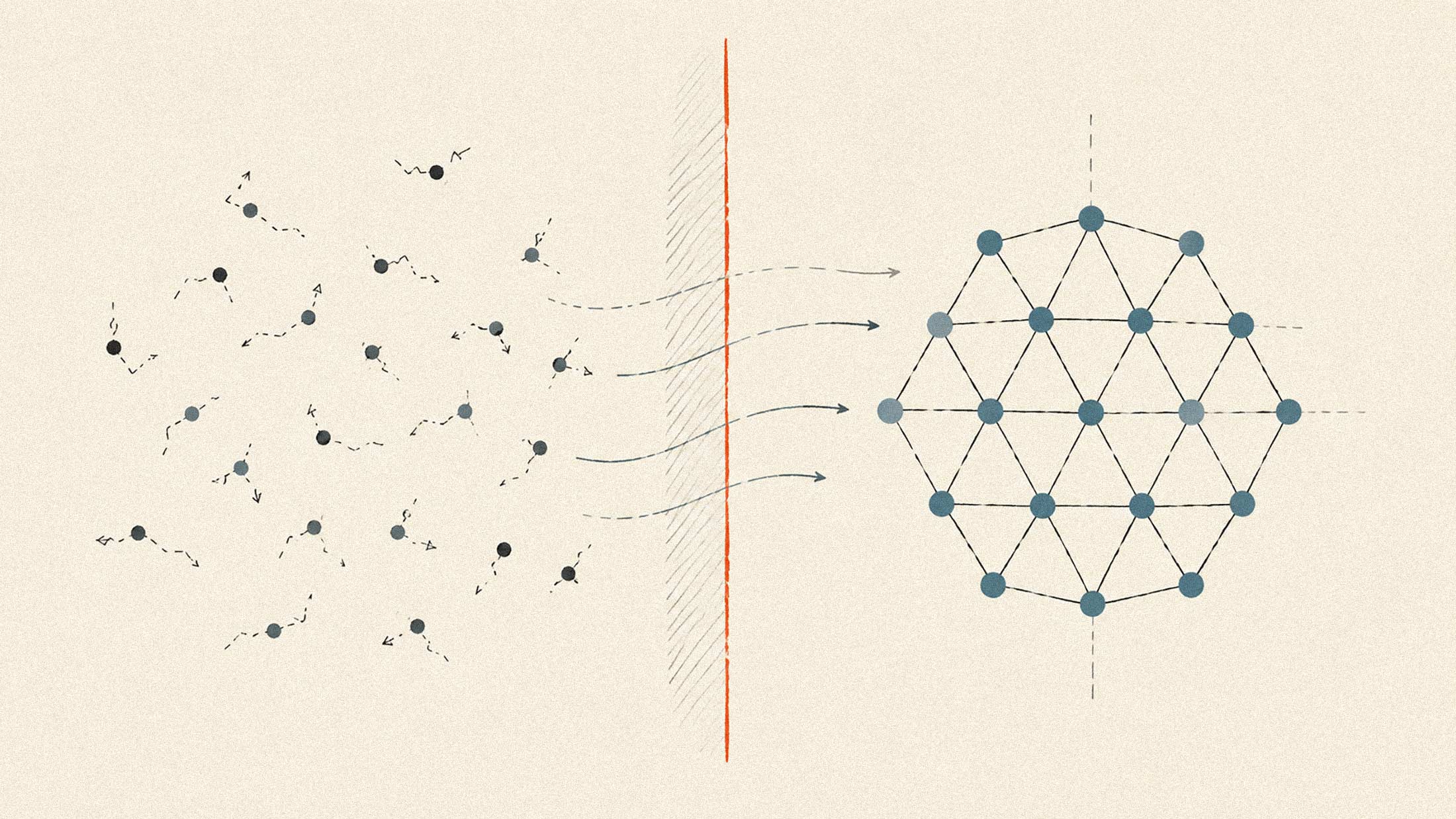

In large-scale model training, GPUs synchronize through collective operations such as all-reduce and all-to-all. Each NIC establishes relatively few connections, but those connections carry massive sustained flows that can saturate link capacity. ECMP's hash-based distribution struggles here: with so few flows, the hash has little entropy to work with, and several large flows can land on the same link. Some paths get overloaded while others sit idle.
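The collision effect is easy to reproduce in a few lines. The following is a minimal sketch, not how any particular switch computes its ECMP hash: the SHA-256 hash, the flow tuples, and the function names `ecmp_path`, `ecmp_load`, and `expected_idle_paths` are all assumptions made for illustration.

```python
import hashlib
from collections import Counter


def ecmp_path(flow, n_paths):
    """Pick a path by hashing the flow tuple (a stand-in for a switch's ECMP hash)."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:8], "big") % n_paths


def ecmp_load(flows, n_paths):
    """Return how many flows are pinned to each path (list index = path id)."""
    counts = Counter(ecmp_path(flow, n_paths) for flow in flows)
    return [counts.get(path, 0) for path in range(n_paths)]


def expected_idle_paths(n_paths, n_flows):
    """Expected unused paths if each flow lands on a uniformly random path."""
    return n_paths * (1 - 1 / n_paths) ** n_flows


if __name__ == "__main__":
    n_paths = 8
    # Hypothetical flows: (src_ip, dst_ip, src_port, dst_port, protocol)
    flows = [("10.0.0.1", f"10.0.1.{i}", 49152 + i, 4791, "udp") for i in range(8)]
    load = ecmp_load(flows, n_paths)
    print("flows per path:", load)
    print("idle paths:", load.count(0))
    print("expected idle paths:", round(expected_idle_paths(n_paths, len(flows)), 2))
```

Under the uniform-hash assumption, eight large flows over eight equal-cost paths leave roughly 2.7 paths idle on average, which means other paths carry two or more flows each. With thousands of small web flows the same imbalance averages out; with a handful of link-saturating flows it does not.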

MRC addresses this by distributing packets across multiple paths simultaneously rather than pinning flows to single routes. When congestion develops on one path, traffic automatically shifts to alternatives. When failures occur, the protocol adapts without the recovery delays associated with traditional mechanisms.
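The idea of spraying packets and steering around a bad path can be sketched as follows. This is a deliberately simplified illustration, not the MRC specification's algorithm: it uses plain round-robin over paths marked healthy, and the names `spray`, `reorder`, and the path labels are hypothetical. A real implementation would react to congestion and loss signals rather than a static set.

```python
from collections import defaultdict
from itertools import cycle


def spray(seq_numbers, paths, unhealthy=frozenset()):
    """Assign each packet to the next healthy path in round-robin order."""
    healthy = [path for path in paths if path not in unhealthy]
    if not healthy:
        raise ValueError("no healthy paths available")
    assignment = defaultdict(list)
    for seq, path in zip(seq_numbers, cycle(healthy)):
        assignment[path].append(seq)
    return dict(assignment)


def reorder(arrivals):
    """Restore sender order at the destination from out-of-order arrivals."""
    return sorted(arrivals)


if __name__ == "__main__":
    paths = ["A", "B", "C", "D"]
    packets = range(8)
    print("all paths healthy:", spray(packets, paths))
    # Path B is marked congested or failed, so its share moves to the others.
    print("path B avoided:   ", spray(packets, paths, unhealthy={"B"}))
    # Packets sprayed over several paths can arrive interleaved.
    print("reordered:", reorder([2, 5, 0, 3, 6, 1, 4, 7]))
```

Because a single flow is spread over every healthy path, no link depends on a lucky hash, and losing one path reduces capacity without stalling the flow. The cost is that the receiver must tolerate and repair out-of-order arrival.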

According to AMD, which played a formative role in developing the specification, MRC helps make AI training clusters more resilient under real-world conditions. The company describes the protocol as turning the network into a "shock absorber" that allows workloads to continue making progress when hardware failures or transient congestion would otherwise disrupt synchronization.

Production Validation

This specification emerged from actual deployment rather than theoretical design. AMD has implemented and deployed MRC combined with its networking technology at scale in test clusters with a leading cloud provider. The Stargate data center, jointly developed by Oracle and OpenAI, reportedly uses NVIDIA Spectrum networking with OCP technologies to achieve 95% effective bandwidth.

For comparison, off-the-shelf Ethernet at similar scale typically delivers around 60% of its nominal bandwidth because of flow collisions. That gap directly determines how much accelerator capacity stays productive during training jobs.
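To put the 95% and 60% figures in perspective, here is a back-of-the-envelope calculation. The 400 Gb/s link rate and 10 GB payload are hypothetical values chosen for illustration, and the model ignores latency, protocol overhead, and overlap between communication and compute.

```python
def transfer_seconds(payload_bytes, link_gbps, effective_fraction):
    """Time to move a payload over a link that delivers only a fraction of line rate."""
    usable_bits_per_second = link_gbps * 1e9 * effective_fraction
    return payload_bytes * 8 / usable_bits_per_second


if __name__ == "__main__":
    payload = 10e9      # hypothetical 10 GB exchanged per NIC in one collective
    link_gbps = 400     # hypothetical 400 Gb/s NIC

    fast = transfer_seconds(payload, link_gbps, 0.95)
    slow = transfer_seconds(payload, link_gbps, 0.60)
    print(f"at 95% effective bandwidth: {fast:.3f} s")
    print(f"at 60% effective bandwidth: {slow:.3f} s")
    print(f"slowdown factor: {slow / fast:.2f}x")
```

The ratio does not depend on the assumed payload or link rate: any transfer takes 0.95 / 0.60, or about 1.58 times, as long at 60% effective bandwidth as at 95%. Whenever accelerators wait on that transfer, the extra time is idle hardware.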

Industry Alignment

The Open Compute Project now hosts a Multipath Reliable Connection workstream where companies can collaborate on the specification. NVIDIA's Spectrum-X Ethernet platform already implements related capabilities through its adaptive routing technology, which sprays packets across multiple paths and handles reordering at the destination. The company has contributed foundational elements of its Blackwell platform design to OCP and broadened Spectrum-X support for OCP standards including SAI and SONiC.

AMD positions its Pensando Pollara 400 NIC and upcoming Vulcano 800G NIC as MRC-capable, emphasizing the programmability that allowed the company to validate pre-standard implementations. Microsoft also contributed to the effort alongside OpenAI and AMD.

The timing aligns with broader changes in how AI infrastructure companies think about networking. Data center power consumption is becoming the primary constraint on cluster size, and network efficiency directly impacts how much useful compute customers extract from their power budget.

What Happens Next

OCP typically moves specifications through defined stages, from incubation through formal approval. Google has described the organization's pace as targeting "specs at product speed," reaching alignment on shared requirements within three to nine months.

For enterprises building AI clusters, MRC support in commodity network equipment would reduce dependence on any single vendor's proprietary stack. That matters as organizations navigate increasingly complex infrastructure decisions and weigh InfiniBand against Ethernet for backend fabrics.

The practical question is whether MRC adoption will proceed quickly enough to matter for current deployment cycles, or whether it becomes relevant for the generation of hardware after Blackwell and whatever AMD ships next. Network protocols take time to mature, and hyperscalers tend to validate extensively before deploying at scale.