Most enterprise AI deployments share a common failure mode: they connect large language models to data, generate plausible outputs, and then watch those outputs die somewhere in a Slack channel. The models can retrieve. They can summarize. But they cannot act, because acting requires more than information. It requires context about how decisions get made, what constraints govern them, and where those decisions land once they're executed.

Palantir has spent years arguing that this gap is architectural. Its solution, the Palantir Ontology, now sits at the center of its Artificial Intelligence Platform (AIP), the product driving the company's commercial growth and reshaping how enterprises think about operational AI.

Why Traditional Data Architectures Fall Short

The conventional wisdom in enterprise data has been to centralize, clean, and warehouse. Build a data lake. Govern it. Let analysts query it. This works for reporting. It fails for decision-making.

Traditional data architectures capture facts but not the reasoning that connects those facts to outcomes. They don't model the logic that determines when and how a given decision should be made, or the actions that follow once a course is chosen. And they don't capture the lineage of decisions over time in a way that lets AI systems learn from what worked.

The Ontology takes a fundamentally different approach. Instead of flattening operational complexity into schemas optimized for analytics, it preserves the full structure of how an enterprise operates. Objects, properties, and relationships evolve in real time. Actions are first-class citizens. Security isn't bolted on; it is woven into every layer.

The Four Pillars of Decision-Making

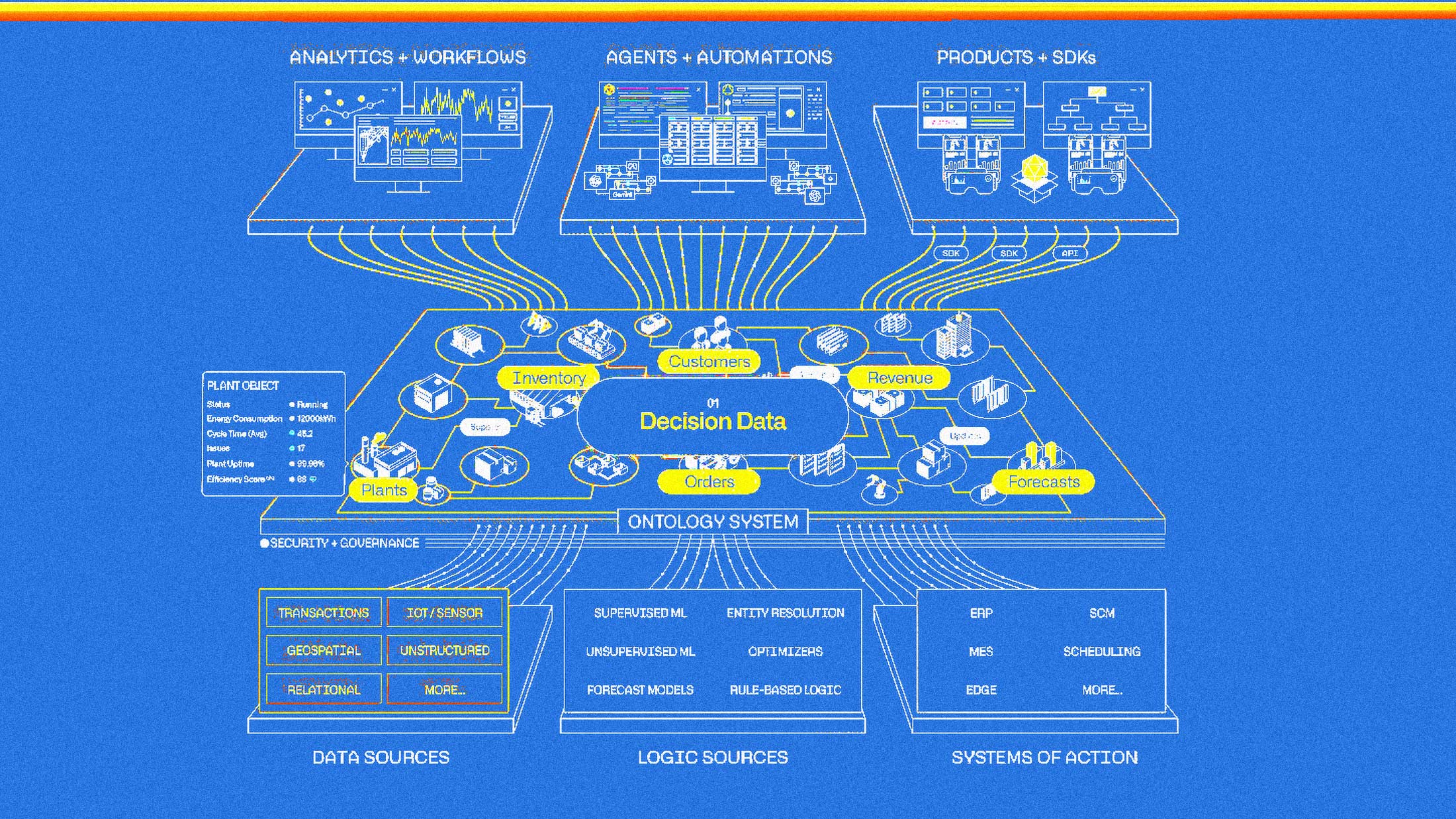

Palantir's framework decomposes every operational decision into four constituent elements: data, logic, action, and security. Each matters, and their integration is the point.

Data in the Ontology isn't limited to structured databases. It includes streaming feeds from IoT sensors, unstructured document repositories, imagery, and, critically, the decision data generated by users and agents as they navigate workflows. This decision data captures context: what options were evaluated, what information was consulted, and what choice was ultimately made. That context becomes fuel for continuous improvement.

Logic encompasses every algorithm, model, and business rule that informs how decisions get evaluated. This might be a forecast model maintained in a cloud data science environment, an optimization algorithm embedded in an ERP, or a piece of tribal knowledge that only exists in the heads of experienced operators. The Ontology surfaces all of these as tools that both humans and AI agents can invoke.

Action is where the Ontology diverges most sharply from analytics platforms. Closing the loop between insight and execution is what makes a system operational rather than merely informational. The Ontology models actions as first-class primitives, with the same rigorous access controls and audit trails that govern data access. Human and agent actions can be staged as scenarios, reviewed before commitment, and synchronized back to operational systems with full decision lineage captured.
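To make the stage, review, and commit cycle concrete, here is a minimal Python sketch. It is not Palantir's API: `StagedAction`, `Scenario`, and the steel-reallocation example are hypothetical names invented for illustration, and the "operational system" is just a dictionary. The point is only the shape of the pattern: proposed changes are previewed against a copy of live state, and every step lands in an audit log.

```python
import copy
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StagedAction:
    """A proposed change that has not yet touched live state (hypothetical type)."""
    name: str
    actor: str
    apply: Callable[[dict], None]  # mutates a state dict in place


@dataclass
class Scenario:
    """Collects staged actions and previews them against a copy of live state."""
    live_state: dict
    staged: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def stage(self, action: StagedAction) -> None:
        self.staged.append(action)
        self.audit_log.append(("staged", action.actor, action.name))

    def preview(self) -> dict:
        # Apply staged actions to a deep copy so reviewers see the outcome
        # without changing anything live.
        draft = copy.deepcopy(self.live_state)
        for action in self.staged:
            action.apply(draft)
        return draft

    def commit(self, reviewer: str) -> None:
        # Only now do the staged actions touch live state.
        for action in self.staged:
            action.apply(self.live_state)
            self.audit_log.append(("committed", reviewer, action.name))
        self.staged.clear()


def reallocate_steel(state: dict) -> None:
    """Illustrative action: move 200 kg of steel from line A to line B."""
    state["line_a_steel_kg"] -= 200
    state["line_b_steel_kg"] += 200


live = {"line_a_steel_kg": 500, "line_b_steel_kg": 0}
scenario = Scenario(live_state=live)
scenario.stage(StagedAction("reallocate_steel", "agent-7", reallocate_steel))

preview = scenario.preview()      # what the world would look like
live_before_commit = dict(live)   # live state is still untouched here
scenario.commit(reviewer="analyst-1")
```

In a real deployment the commit step would write back to external systems rather than a dictionary, and the audit log would record far more than three fields; the sketch keeps only what is needed to show the separation between proposing and executing.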

Security governs the entire structure. Role-based, marking-based, and purpose-based policies are dynamically computed at runtime for every interaction. This granularity matters enormously when AI agents are involved, because agents need precisely scoped access. They need to be constrained to the tools and data they're authorized to use, with explicit controls over what actions they can stage versus execute autonomously.
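The combination of role, marking, and purpose checks, plus the stage-versus-execute distinction, can be sketched as a single policy function evaluated on every request. This is an illustrative model under simplifying assumptions, not Palantir's policy engine: the `Principal` and `Resource` types, the `evaluate` function, and all role, marking, and purpose names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    DENY = "deny"
    STAGE_ONLY = "stage_only"   # may propose the action for human review
    EXECUTE = "execute"         # may run the action autonomously


@dataclass(frozen=True)
class Principal:
    """A human or agent making a request (hypothetical type)."""
    name: str
    roles: frozenset
    cleared_markings: frozenset
    purposes: frozenset
    may_execute: frozenset  # action names allowed without review


@dataclass(frozen=True)
class Resource:
    """The data an action touches, with its access requirements."""
    required_role: str
    markings: frozenset
    allowed_purposes: frozenset


def evaluate(principal: Principal, resource: Resource,
             action: str, purpose: str) -> Decision:
    """Compute a decision at request time from all three policy types."""
    # Role-based: the principal must hold the role the resource requires.
    if resource.required_role not in principal.roles:
        return Decision.DENY
    # Marking-based: every marking on the resource must be cleared.
    if not resource.markings <= principal.cleared_markings:
        return Decision.DENY
    # Purpose-based: the stated purpose must be valid on both sides.
    if purpose not in principal.purposes or purpose not in resource.allowed_purposes:
        return Decision.DENY
    # Access is granted; decide whether the action may run without review.
    return Decision.EXECUTE if action in principal.may_execute else Decision.STAGE_ONLY


agent = Principal(
    name="planner-agent",
    roles=frozenset({"supply_planner"}),
    cleared_markings=frozenset({"internal"}),
    purposes=frozenset({"disruption_response"}),
    may_execute=frozenset({"flag_order"}),
)
orders = Resource("supply_planner", frozenset({"internal"}),
                  frozenset({"disruption_response"}))
contracts = Resource("supply_planner", frozenset({"internal", "legal"}),
                     frozenset({"disruption_response"}))

print(evaluate(agent, orders, "flag_order", "disruption_response"))
print(evaluate(agent, orders, "reallocate_material", "disruption_response"))
print(evaluate(agent, contracts, "flag_order", "disruption_response"))
```

Here the agent can flag an order on its own, can only stage a material reallocation for review, and is denied outright on the contracts data because it lacks the `legal` marking.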

How Agents Actually Work Within the Ontology

The AI agent ecosystem has generated enormous hype and limited production impact. Most enterprise agent deployments amount to glorified chatbots with retrieval-augmented generation bolted on. They can answer questions. They cannot execute decisions.

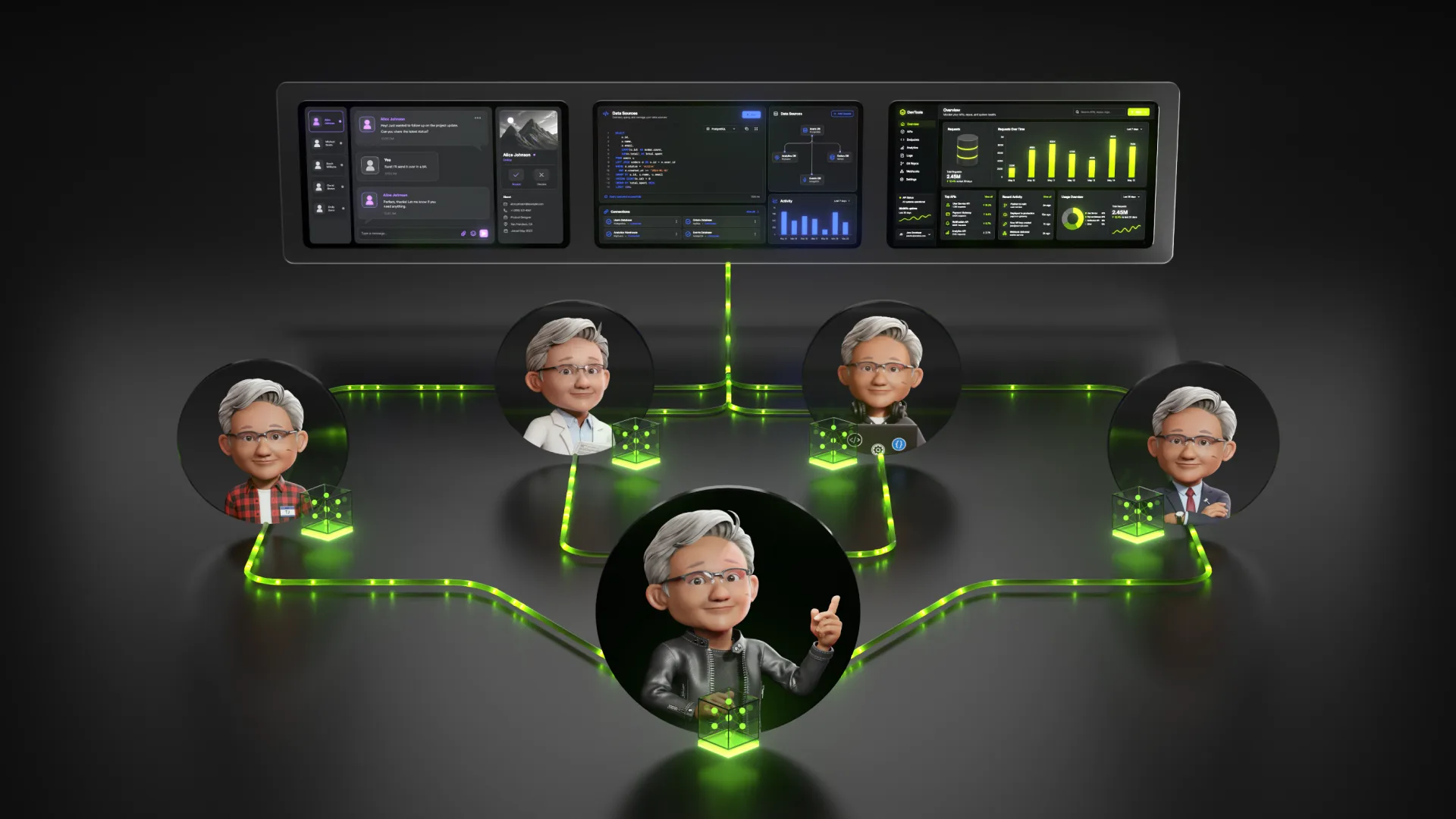

Palantir's approach is more ambitious. Within AIP, agents don't just query data. They navigate the full Ontology, accessing the same objects, logic assets, and action primitives that human operators use. The Ontology functions as the shared operational foundation for human-agent teaming.

Consider a supply chain disruption at a manufacturing company. A traditional analytics system might surface the problem: a supplier is delayed, and certain production lines are at risk. An Ontology-powered agent can do more. It can traverse connections to identify every downstream customer order affected. It can invoke optimization models to evaluate material reallocation strategies. It can stage proposed actions in a scenario sandbox, allowing human analysts to review the consequences before committing. And when the decision is made, the Ontology synchronizes the changes back to warehouse management systems, ERPs, and production schedulers automatically.
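The "traverse connections" step is, at its core, a graph walk. The sketch below uses a plain breadth-first search over a hand-written dependency map to find every customer order downstream of a delayed supplier. The object identifiers and the `DEPENDENTS` map are made up for illustration; a real ontology would expose typed objects and links rather than prefixed strings.

```python
from collections import deque

# Hypothetical links: each key points to the objects that depend on it.
DEPENDENTS = {
    "supplier:acme": ["part:bracket"],
    "part:bracket": ["line:1", "line:2"],
    "line:1": ["order:1001", "order:1002"],
    "line:2": ["order:1003"],
    "supplier:other": ["part:bolt"],
    "part:bolt": ["line:3"],
    "line:3": ["order:1004"],
}


def affected_orders(start: str, links: dict, prefix: str = "order:") -> list:
    """Breadth-first walk from `start`, collecting reachable order objects."""
    seen = {start}
    queue = deque([start])
    hits = []
    while queue:
        node = queue.popleft()
        for dependent in links.get(node, []):
            if dependent in seen:
                continue  # guard against revisiting shared dependencies
            seen.add(dependent)
            queue.append(dependent)
            if dependent.startswith(prefix):
                hits.append(dependent)
    return sorted(hits)


print(affected_orders("supplier:acme", DEPENDENTS))
# ['order:1001', 'order:1002', 'order:1003']
```

Order 1004 is untouched because it depends on a different supplier. The later steps in the scenario (running optimization models, staging proposals) would take this list as their input.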

This is what Palantir means by connecting agents to decisions. The agent isn't just generating text. It's reasoning over the same semantic representation of the enterprise that human operators use, with access to the same tools and subject to the same governance controls.

Real-World Deployments

Palantir's customer base spans government and commercial sectors, and the use cases demonstrate what becomes possible when AI is anchored in operational context.

Novartis, the pharmaceutical company, has built Data42 on Palantir Foundry, integrating real-world data covering 700 million patient lives alongside information from 3,000 clinical trials encompassing about a million patients. The platform has reportedly reduced the time translational modelers spend on human dose prediction from one week to roughly two hours per compound. In an industry where drug development takes an average of 12 years and $3 billion, with a one-in-10,000 success rate from early synthesis to regulatory approval, even marginal improvements in research velocity matter enormously.

American Airlines uses its ontology for AI-enabled network planning, synthesizing operational data across a complex aviation system. Lumen, the telecommunications company, has deployed AIP to manage network operations, with executives reporting tens of millions of dollars in value unlocked within a single year. Healthcare organizations like MaineHealth and Tampa General Hospital are using the platform to handle insurance denials and optimize patient care pathways.

The pattern across these deployments is consistent. Organizations aren't just using AI to analyze historical data. They're using it to participate in operational workflows, proposing actions, surfacing recommendations, and in some cases executing decisions autonomously within defined boundaries.

AIP Bootcamps and the Go-to-Market Evolution

Palantir's sales model has evolved alongside its technology. The company pivoted from traditional enterprise sales cycles to intensive five-day workshops called AIP Bootcamps, where prospective customers build functional agents using their own proprietary data. The conversion rate is reportedly around 75 percent, and the format compresses sales cycles that once took months into days.

This approach reflects confidence in the platform's ability to demonstrate value quickly. When customers can stand up working use cases in a week, the traditional objections about implementation complexity and time-to-value become less relevant. And once an organization's ontology is built, switching costs are substantial. The semantic model of the enterprise becomes embedded in how decisions flow.

The Strategic Positioning

Palantir is positioning itself as an enterprise decision layer that sits above design tools, ERPs, scheduling systems, supply chains, and sensor networks. It's not competing to be the system of record for any single functional domain. It's competing to be the orchestration layer that connects them all.

The partnership with NVIDIA announced last year extends this positioning into the infrastructure layer, integrating GPU-accelerated data processing and optimization libraries with the Ontology. Lowe's is among the first to use the combined stack, creating a digital replica of its global supply chain for continuous AI optimization.

The agentic AI wave benefits companies that have solved the orchestration problem. Most enterprises are still asking how to connect models to data. Palantir is already asking how to connect agents to decisions. The difference is substantial, and it may explain why investors continue paying premium multiples despite analyst skepticism about valuation.

What Comes Next

Palantir recently introduced Ontology MCP, which exposes Developer Console applications to AI agents through the Model Context Protocol, an open standard for connecting agents to data sources. This means external agent frameworks like LangChain, CrewAI, or custom Python implementations can now interface with the Ontology without building custom integrations from scratch.
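For readers unfamiliar with the Model Context Protocol, messages are JSON-RPC 2.0, and an agent invokes a server-side tool with a `tools/call` request carrying the tool's name and arguments. The sketch below builds such a request in Python. The tool name `search_delayed_shipments` and its arguments are invented for illustration; which tools an Ontology MCP server actually exposes is not described here and would depend on the application.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a caller-chosen id


def tools_call_request(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, for illustration only.
request = tools_call_request(
    "search_delayed_shipments",
    {"supplier_id": "acme", "limit": 5},
)
print(json.dumps(request, indent=2))
```

In practice an agent framework's MCP client builds and sends these messages for you; the value of the standard is that the same request shape works against any compliant server.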

The implications are significant. Palantir is opening its orchestration layer to the broader ecosystem while maintaining control over the semantic model and governance architecture. Developers can bring their own agent frameworks. But they're still operating within Palantir's decision-centric architecture, subject to its security controls and contributing to its understanding of enterprise operations.

The question for enterprise buyers isn't whether the Ontology delivers value. The evidence suggests it does. The question is whether the gains in speed and operational intelligence justify long-term dependence on an external platform that encodes how the business thinks and operates. That's a strategic calculation each organization will need to make for itself.