OpenAI and five of the largest names in semiconductor and cloud infrastructure have released a new networking protocol designed to keep massive AI training clusters running smoothly. Multipath Reliable Connection, or MRC, is now available through the Open Compute Project under an open license, marking a rare moment of industry coordination on a problem that has quietly become one of AI's biggest infrastructure headaches.

The partners behind the effort are AMD, Broadcom, Intel, Microsoft, and NVIDIA. The protocol has been in development for roughly two years and is already running in production across OpenAI's largest training environments, including its NVIDIA GB200 supercomputers at the Oracle Cloud Infrastructure site in Abilene, Texas, and Microsoft's Fairwater systems.

The Problem MRC Solves

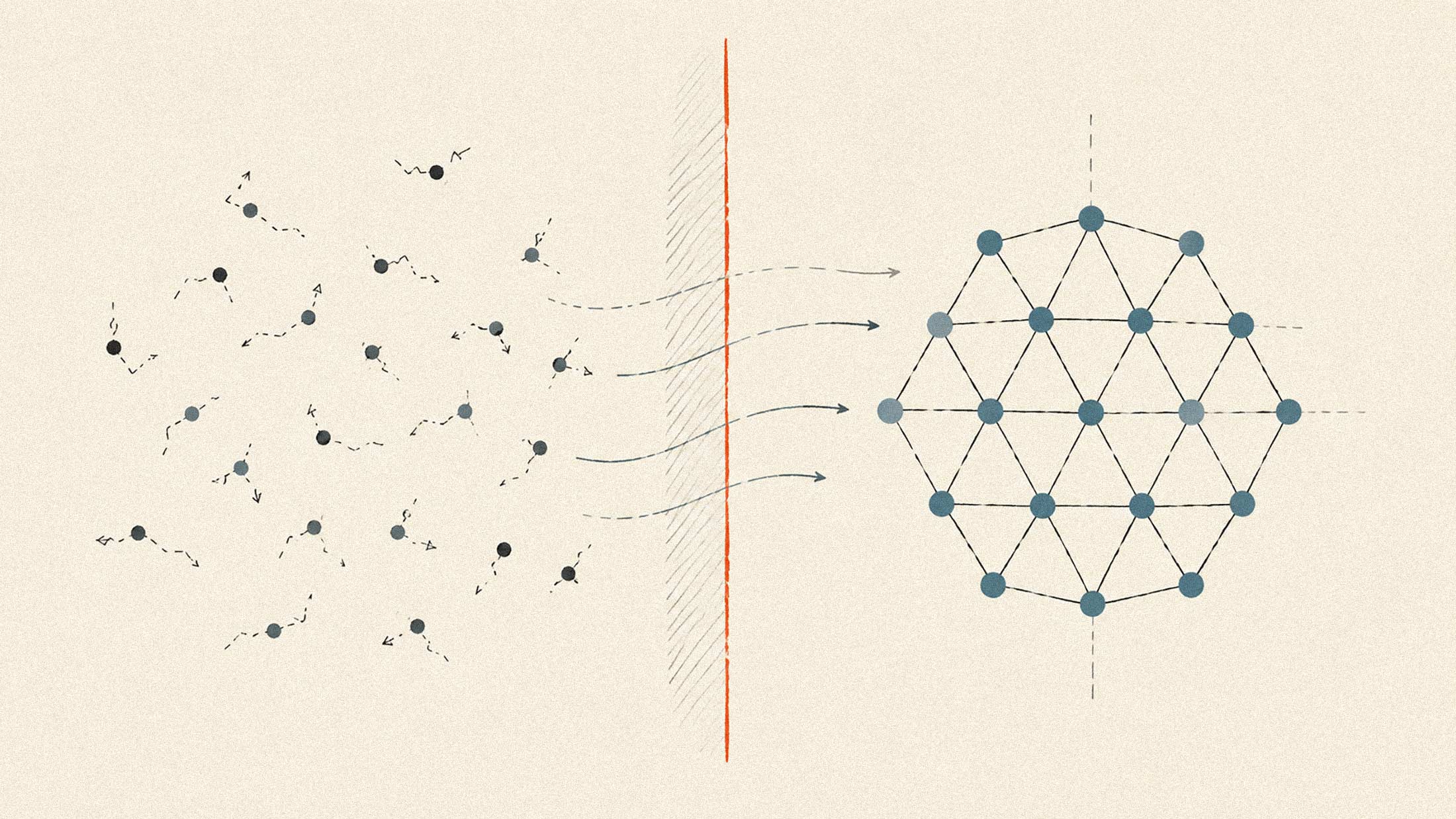

Frontier model training depends on keeping thousands of GPUs synchronized. When one transfer stalls, everything waits. Traditional networking protocols route each data flow along a single path, which creates two problems at scale: flows collide and congest shared links, and each transfer can only use one of potentially hundreds of available network paths.

MRC addresses this by spreading individual transfers across many paths simultaneously. The protocol uses a technique called packet spraying, scattering the packets of a single transfer across hundreds of routes so that no single link becomes a bottleneck. When failures occur, MRC detects them and reroutes traffic in microseconds. Conventional network fabrics, by contrast, can take seconds, or even tens of seconds, to stabilize after a failure.
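To make the contrast concrete, here is a toy sketch that compares per-link load when each flow is pinned to one path against load when packets are sprayed across all paths. It is not MRC's actual path-selection logic, which this article does not describe: the modulo-based pinning, the round-robin spraying, and the names `pinned_load`, `sprayed_load`, `FLOWS`, and `NUM_PATHS` are all assumptions chosen for illustration.

```python
from collections import Counter

def pinned_load(flows, num_paths):
    """Pin every flow to a single path derived from its ID (hash-style placement)."""
    load = Counter()
    for flow_id, packets in flows.items():
        load[flow_id % num_paths] += packets
    return load

def sprayed_load(flows, num_paths):
    """Spread each flow's packets round-robin over all paths (assumed policy)."""
    load = Counter()
    next_path = 0
    for packets in flows.values():
        for _ in range(packets):
            load[next_path] += 1
            next_path = (next_path + 1) % num_paths
    return load

# Hypothetical workload: flow ID -> packet count. Three IDs collide modulo 4.
FLOWS = {0: 1000, 4: 1000, 8: 1000, 3: 1000}
NUM_PATHS = 4

pinned = pinned_load(FLOWS, NUM_PATHS)
sprayed = sprayed_load(FLOWS, NUM_PATHS)

print(max(pinned.values()))   # 3000: three flows landed on the same path
print(max(sprayed.values()))  # 1000: every path carries an equal share
```

In this toy case the busiest link carries three times less traffic under spraying, and two of the four paths no longer sit idle.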

The protocol extends RDMA over Converged Ethernet (RoCE) and incorporates SRv6 source routing, in which the sender pre-determines each packet's path rather than relying on switches to compute routes dynamically. Moving path selection to the sender reduces switch complexity, lowers power consumption, and cuts the operational burden of running routing protocols like BGP across massive clusters.
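The idea behind source routing can be sketched in a few lines: the sender attaches an ordered list of hops to the packet, and each switch only follows the next entry using a static port map. This is a conceptual model, not the SRv6 header format or MRC's encoding. The topology in `LINKS`, the port names, and the `forward` helper are hypothetical.

```python
# Static wiring: node -> {output port -> neighbor}. Hypothetical two-tier fabric.
LINKS = {
    "leaf-1": {"up0": "spine-1", "up1": "spine-2"},
    "spine-1": {"down0": "leaf-1", "down1": "leaf-2"},
    "spine-2": {"down0": "leaf-1", "down1": "leaf-2"},
    "leaf-2": {"host0": "gpu-b"},
}

def forward(start, segments):
    """Follow the sender-chosen list of output ports and return the nodes visited."""
    node = start
    trace = [node]
    for port in segments:
        # The switch does no route computation: it reads the next segment
        # and looks up the fixed link behind that port.
        node = LINKS[node][port]
        trace.append(node)
    return trace

# The sender picks the path through spine-2 by choosing the segment list.
print(forward("leaf-1", ["up1", "down1", "host0"]))
# ['leaf-1', 'spine-2', 'leaf-2', 'gpu-b']
```

Changing the first segment to `"up0"` sends the same packet through `spine-1` instead, without any switch having to recompute a route.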

Scaling Past 100,000 GPUs

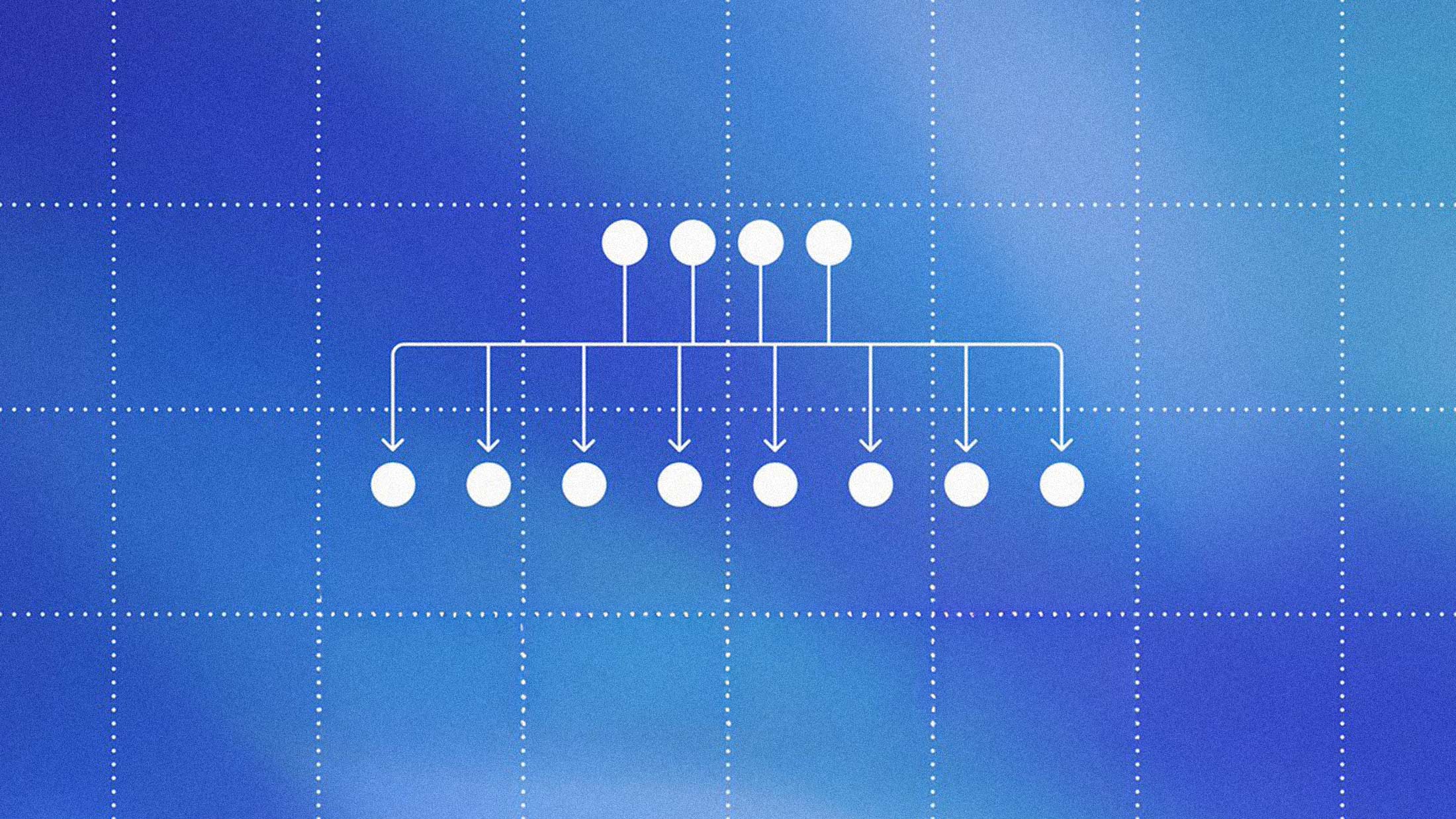

MRC enables multi-plane network topologies where over 100,000 GPUs can be connected using only two tiers of switches. Traditional approaches would require four tiers at that scale, adding cost, power draw, and failure points.
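A rough calculation shows why tier count is so sensitive to switch port count. The sketch below uses the standard capacity formula for a non-blocking fat tree, in which switches with `radix` ports arranged in `tiers` levels support 2 × (radix / 2)^tiers endpoints. The radix values of 64 and 512 are illustrative assumptions, not figures from the article. They model the general idea that splitting each fast port into several slower ones, one per plane, raises the effective radix.

```python
def max_endpoints(radix, tiers):
    """Endpoints supported by a non-blocking fat tree of the given depth."""
    return 2 * (radix // 2) ** tiers

def tiers_needed(radix, endpoints):
    """Smallest number of switch tiers that can connect the requested endpoints."""
    tiers = 1
    while max_endpoints(radix, tiers) < endpoints:
        tiers += 1
    return tiers

TARGET = 100_000  # GPUs to connect

# Assumed radix values, for illustration only.
print(max_endpoints(64, 3))       # 65536: three tiers fall short of the target
print(tiers_needed(64, TARGET))   # 4
print(max_endpoints(512, 2))      # 131072
print(tiers_needed(512, TARGET))  # 2
```

Under these assumptions, an eightfold increase in effective radix is enough to drop from four tiers to two at the 100,000-GPU mark.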

According to OpenAI, the design reduces total network power consumption and component count compared to conventional architectures. Load balancing happens automatically across all available paths, and selective retransmission means only lost packets are resent rather than entire sequences.
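The saving from selective retransmission is easy to see in a simplified model. The sketch below compares it with a go-back-N scheme, which resends everything from the first lost packet onward. Go-back-N is used here only as a familiar point of comparison, and the article does not specify MRC's acknowledgment mechanics. The function names and the single-round assumption (retransmissions are not themselves lost) are illustrative.

```python
def go_back_n_resend(total_packets, lost):
    """Resend every packet from the first loss to the end of the sequence."""
    if not lost:
        return []
    return list(range(min(lost), total_packets))

def selective_resend(total_packets, lost):
    """Resend only the packets that were actually lost."""
    return sorted(seq for seq in lost if 0 <= seq < total_packets)

lost = {3, 7}  # hypothetical sequence numbers dropped out of 10 packets
print(go_back_n_resend(10, lost))  # [3, 4, 5, 6, 7, 8, 9]
print(selective_resend(10, lost))  # [3, 7]
```

Selective retransmission also pairs naturally with packet spraying, because packets that travel different paths can arrive out of order and should not be mistaken for a reason to resend a whole sequence.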

"As GPUs and CPUs continue to drive compute, the real bottleneck in scaling AI is the network," said Krishna Doddapaneni, corporate vice president of engineering at AMD's Network Technology Solutions Group. AMD contributed pre-standard implementations that informed the final specification, enabled by the programmability of its Pensando Pollara 400 AI NIC.

Why Open, Why Now

The decision to release MRC through OCP rather than keep it proprietary reflects a practical calculation. OpenAI's workload lead, Greg Steinbrecher, told The Deep View that the company is not trying to differentiate on networking infrastructure. "Several players in the industry have their own in-house implementations of protocols," he said. "That type of market fragmentation is bad for the networking industry."

The bet is that a shared standard benefits everyone, including OpenAI, by deepening the available pool of compatible hardware and accelerating the pace of infrastructure improvement across the industry. Microsoft Azure, Oracle Cloud Infrastructure, NVIDIA, and Arista all participated in deploying MRC at scale.

NVIDIA frames MRC as complementary to its Spectrum-X Ethernet platform, which runs both MRC and NVIDIA's own Adaptive RDMA protocol natively across ConnectX SuperNICs. Sachin Katti, OpenAI's head of industrial compute, credited the collaboration with NVIDIA for avoiding "much of the typical network-related slowdowns and interruptions" during Blackwell-generation deployments.

What This Means for Infrastructure

The release lands as hyperscalers and AI labs race to build ever-larger clusters. Networking has emerged as a central constraint, with small disruptions cascading into major compute inefficiencies when thousands of GPUs must stay synchronized.

Contributing MRC through OCP sets a precedent. Protocols like this could push the industry toward more standardized, interoperable AI networking fabrics, reducing reliance on proprietary stacks. Whether competitors adopt it or continue building internal solutions will determine how much MRC actually unifies the space.

The protocol is now available for any organization to implement. OpenAI says it has already been used to train multiple frontier models, and the Stargate project, its long-term compute buildout, will rely on MRC as a core infrastructure layer. For companies building next-generation AI data centers, the specification offers a starting point that comes pre-validated at scale.