The technology industry has spent the last two years discovering that its future runs on a single, interconnected system. Energy, chips, memory, and compute are no longer separate markets. They are layers of one dependency stack, and a bottleneck in any layer cascades everywhere.

The Dependency Stack

Start at the bottom. According to the International Energy Agency, global electricity consumption for data centers is projected to roughly double by 2030, reaching around 945 TWh, or just under 3% of total global electricity consumption. In the United States, data centers could consume up to 12% of total U.S. electricity by 2028, according to the Lawrence Berkeley National Laboratory. Energy is no longer a utility cost. It is compute's input material.
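To put the IEA figure in more physical terms, the short sketch below converts 945 TWh per year into an average continuous power draw and backs out the global total implied by a 3% share. The function names are illustrative, and because the text says "just under 3%", the implied total should be read as a rough floor rather than a precise figure.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def average_power_gw(annual_twh: float) -> float:
    """Convert annual energy (TWh) into average continuous power (GW)."""
    # 1 TWh = 1000 GWh; dividing GWh by hours gives GW.
    return annual_twh * 1000 / HOURS_PER_YEAR


def implied_total_twh(part_twh: float, share: float) -> float:
    """Back out the total when part_twh is a given share of it."""
    return part_twh / share


dc_twh_2030 = 945.0   # IEA projection quoted in the text
share_ceiling = 0.03  # assumption: "just under 3%" treated as an upper bound

print(f"Average draw: {average_power_gw(dc_twh_2030):.1f} GW")
print(f"Implied global total: at least ~{implied_total_twh(dc_twh_2030, share_ceiling):,.0f} TWh")
```

On these numbers, the projected data center load is equivalent to a little over 100 GW running around the clock.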

Move up one layer. Compute requires processing hardware, and processing hardware requires advanced chips. But chips themselves require memory. The shortage is not primarily about GPU die production. It is about the memory and packaging that surround the die. The current compute crunch is a product of explosive demand from AI workloads, limited supplies of high-bandwidth memory, and tight advanced packaging capacity. Lead times for data-center GPUs now run from 36 to 52 weeks.

By one estimate, data centers now consume 70% of all memory chips produced worldwide, and consumers are bearing the brunt of that structural shift. For DRAM specifically, Bloomberg reports that data center demand surged to around 50% of global consumption in 2025, up sharply from 32% five years earlier.

The Memory Wall

The memory wall isn't just an engineering footnote in AI's rise. It's the defining infrastructure challenge of this era. High-bandwidth memory (HBM) has become the critical chokepoint. NVIDIA's B300 GPU requires eight HBM stacks, each containing 12 individual DRAM dies. That means a single B300 GPU consumes 96 DRAM dies, and a fully configured DGX B300 system with eight GPUs requires 768 DRAM dies for its HBM alone.
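The die count above is simple multiplication, and it is worth making explicit because it scales linearly with every system shipped. The sketch below uses only the figures quoted in the text; the constant and function names are illustrative.

```python
HBM_STACKS_PER_GPU = 8    # B300 figure quoted in the text
DRAM_DIES_PER_STACK = 12  # dies per HBM stack, per the text
GPUS_PER_SYSTEM = 8       # DGX B300 configuration, per the text


def dies_per_gpu(stacks: int = HBM_STACKS_PER_GPU,
                 dies_per_stack: int = DRAM_DIES_PER_STACK) -> int:
    """DRAM dies consumed by the HBM on a single GPU."""
    return stacks * dies_per_stack


def dies_per_system(gpus: int = GPUS_PER_SYSTEM) -> int:
    """DRAM dies consumed by the HBM across one multi-GPU system."""
    return gpus * dies_per_gpu()


print(dies_per_gpu())     # 96
print(dies_per_system())  # 768
```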

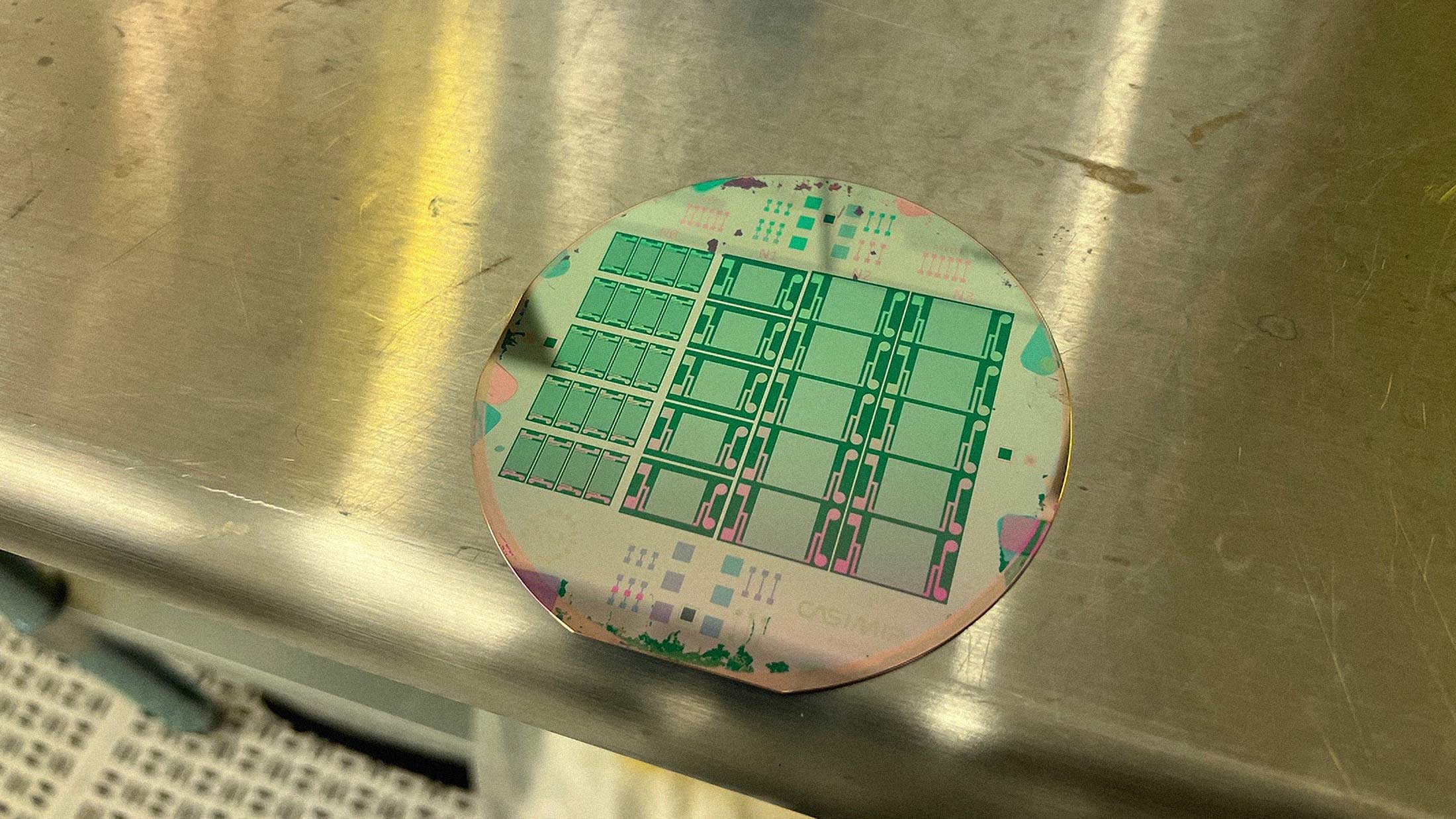

HBM manufacturing is far more complex and resource-intensive than standard DRAM production, and it yields less per wafer. As factories pivot to making HBM, they produce a much lower total volume of memory bits from the same amount of silicon. A single silicon wafer provides 3x as much commodity DRAM as HBM.
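The 3x ratio makes the cost of that pivot easy to estimate. The sketch below is a deliberately simple linear model, not an industry formula: it assumes a fixed pool of wafers, takes the 3x figure from the text, and treats the HBM share as a hypothetical input.

```python
def relative_bit_output(hbm_share: float, commodity_to_hbm_ratio: float = 3.0) -> float:
    """Total memory bits produced, relative to an all-commodity-DRAM baseline of 1.0.

    hbm_share: fraction of wafers diverted to HBM (hypothetical input, 0 to 1).
    commodity_to_hbm_ratio: bits per commodity wafer divided by bits per HBM wafer
        (3.0 is the figure quoted in the text).
    """
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    commodity_bits = 1.0 - hbm_share
    hbm_bits = hbm_share / commodity_to_hbm_ratio
    return commodity_bits + hbm_bits


for share in (0.0, 0.1, 0.3, 0.5):
    print(f"HBM share {share:.0%}: total bit output {relative_bit_output(share):.1%} of baseline")
```

Under this model, diverting 30% of wafers to HBM cuts total bit output to about 80% of the baseline, even though no capacity has been taken offline.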

This creates a zero-sum game. Every wafer allocated to an HBM stack for an NVIDIA GPU is a wafer denied to the LPDDR5X module of a mid-range smartphone or the DRAM of a consumer laptop.

Intelligence Sits on Top

Artificial intelligence is the layer that consumes everything below it. Training large models requires thousands of GPUs running for weeks. Inference requires continuous compute at scale. Both demand energy, processing power, and memory in unprecedented quantities.

But intelligence is also what everything else now depends on. AI agents are emerging as economic actors. Autonomous AI agents are now capable of reasoning, planning, and executing multi-step tasks across the internet. They can research products, compare prices, negotiate terms, and allocate capital.

The dependency runs both ways. Intelligence requires compute. But increasingly, economic activity requires intelligence.

Crypto as the Agent Economy's Payment Layer

Here is where cryptocurrency re-enters the picture. AI agents cannot open bank accounts. They have no government-issued ID, no social security number, no way to satisfy Know Your Customer requirements at a traditional financial institution.

An agent that holds a non-custodial AI wallet can send and receive value, execute transactions against smart contracts, and interact with decentralized protocols, all without needing permission from a bank or payment processor. Coinbase CEO Brian Armstrong predicted that very soon there will be more AI agents than humans making transactions on the internet. Binance founder Changpeng Zhao said AI agents will make one million times more payments than people, all in crypto.

Mastercard is rolling out Agent Pay and Verifiable Intent to support trusted, network-backed payments for autonomous AI agents on leading open agent platforms. Coinbase's Agentic Market, powered by USDC and x402, has already processed nearly 165 million transactions across over 480,000 AI agents.

Stablecoins solve the volatility problem. For an AI agent executing hundreds of micropayments per hour, a 5% price swing in the payment currency would be catastrophic. Dollar-pegged tokens provide programmability without the instability.
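A rough illustration of why a 5% swing matters at micropayment scale: the sketch below assumes a hypothetical agent that makes 300 payments per hour at $0.002 each and pre-funds one day of activity in a token. All values are invented for illustration, and amounts are kept as integers in millionths of a unit to avoid floating-point rounding.

```python
MICRO = 1_000_000  # millionths per whole unit (of a dollar or of a token)


def payments_funded(balance_micro_tokens: int,
                    token_price_micro_usd: int,
                    payment_micro_usd: int) -> int:
    """Number of fixed-dollar payments a token balance covers at a given token price."""
    balance_value_micro_usd = balance_micro_tokens * token_price_micro_usd // MICRO
    return balance_value_micro_usd // payment_micro_usd


# Hypothetical agent: 300 payments per hour for 24 hours at $0.002 each.
payment = 2_000                      # $0.002 in micro-dollars
planned = 300 * 24                   # 7200 payments
balance = planned * payment          # 14.4 tokens, funded when 1 token = $1.00

at_par = payments_funded(balance, 1_000_000, payment)
after_drop = payments_funded(balance, 950_000, payment)  # token falls 5%

print(at_par, after_drop, at_par - after_drop)
```

With the token at par the balance covers all 7,200 planned payments; after a 5% drop it covers 6,840, leaving the agent 360 payments short before the day ends.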

The Quantum Wildcard

Quantum computing threatens to scramble this entire dependency stack. Quantum computers could use Shor's algorithm to factor large integers and compute discrete logarithms efficiently. That directly compromises RSA, elliptic-curve cryptography (ECC), and Diffie-Hellman. Once a cryptanalytically relevant quantum computer exists, the public keys protecting encrypted traffic, digital signatures, and authentication mechanisms could all be exposed.
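To see why efficient factoring is fatal to RSA, consider the toy sketch below. It does not implement Shor's algorithm; brute-force trial division stands in for the factoring step, which is only feasible here because the primes are tiny. The key values and function names are illustrative, and real RSA keys use moduli of 2048 bits or more with padding schemes omitted here.

```python
from math import gcd


def factor_by_trial_division(n: int) -> tuple[int, int]:
    """Classical brute-force factoring; a stand-in for what Shor's algorithm would do at scale."""
    f = 2
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 1
    raise ValueError("n has no nontrivial factors")


def private_exponent(p: int, q: int, e: int) -> int:
    """Derive the RSA private exponent d from the prime factors and public exponent."""
    phi = (p - 1) * (q - 1)
    if gcd(e, phi) != 1:
        raise ValueError("e must be coprime to phi")
    return pow(e, -1, phi)  # modular inverse (Python 3.8+)


# Key owner's side: toy primes, kept secret in a real system.
p, q, e = 61, 53, 17
n = p * q                       # public modulus
d = private_exponent(p, q, e)   # private exponent

message = 65
ciphertext = pow(message, e, n)  # anyone can encrypt with the public key (n, e)

# Attacker's side: sees only n, e, and the ciphertext.
p2, q2 = factor_by_trial_division(n)
d2 = private_exponent(p2, q2, e)
recovered = pow(ciphertext, d2, n)

print(recovered == message)  # True
```

The attacker never sees the private key. Factoring the public modulus is enough to rebuild it, which is why an efficient factoring method undermines the whole scheme.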

Estimates for Q-Day, the point at which a cryptanalytically relevant quantum computer exists, have been pulled forward significantly from the typical 2035+ timelines. Cloudflare announced it is moving its target for full post-quantum security to 2029.

We have gone from planning for a threat two decades out to one that overlaps with systems actively being deployed and funded. For digital assets like cryptocurrency, the implications are immediate because the public-key cryptography securing billions of dollars on the blockchain was never designed to withstand attacks from a quantum computer.

Migrating a live blockchain to post-quantum standards is a different problem entirely from upgrading a centralized system. You are dealing with immutable ledgers, billions in locked liquidity, and decentralized governance that cannot mandate a coordinated upgrade.

The System Is One Thing Now

The technology economy used to be a collection of adjacent industries. Energy served utilities. Chips went into phones. Memory was a component. AI was a research project. Crypto was speculation.

Now they are linked. The five largest hyperscalers have collectively committed more than $660 billion in 2026 capital expenditures. AI-optimized data center facilities now require 100-500 megawatts, enough to power entire cities. Power has become the competitive moat. New nuclear designs target data centers directly.

Understanding any one of these markets now requires understanding all of them. The companies that win will be the ones that see the stack clearly and secure capacity at every layer. The ones that lose will be the ones still treating energy, chips, memory, and intelligence as separate procurement problems.