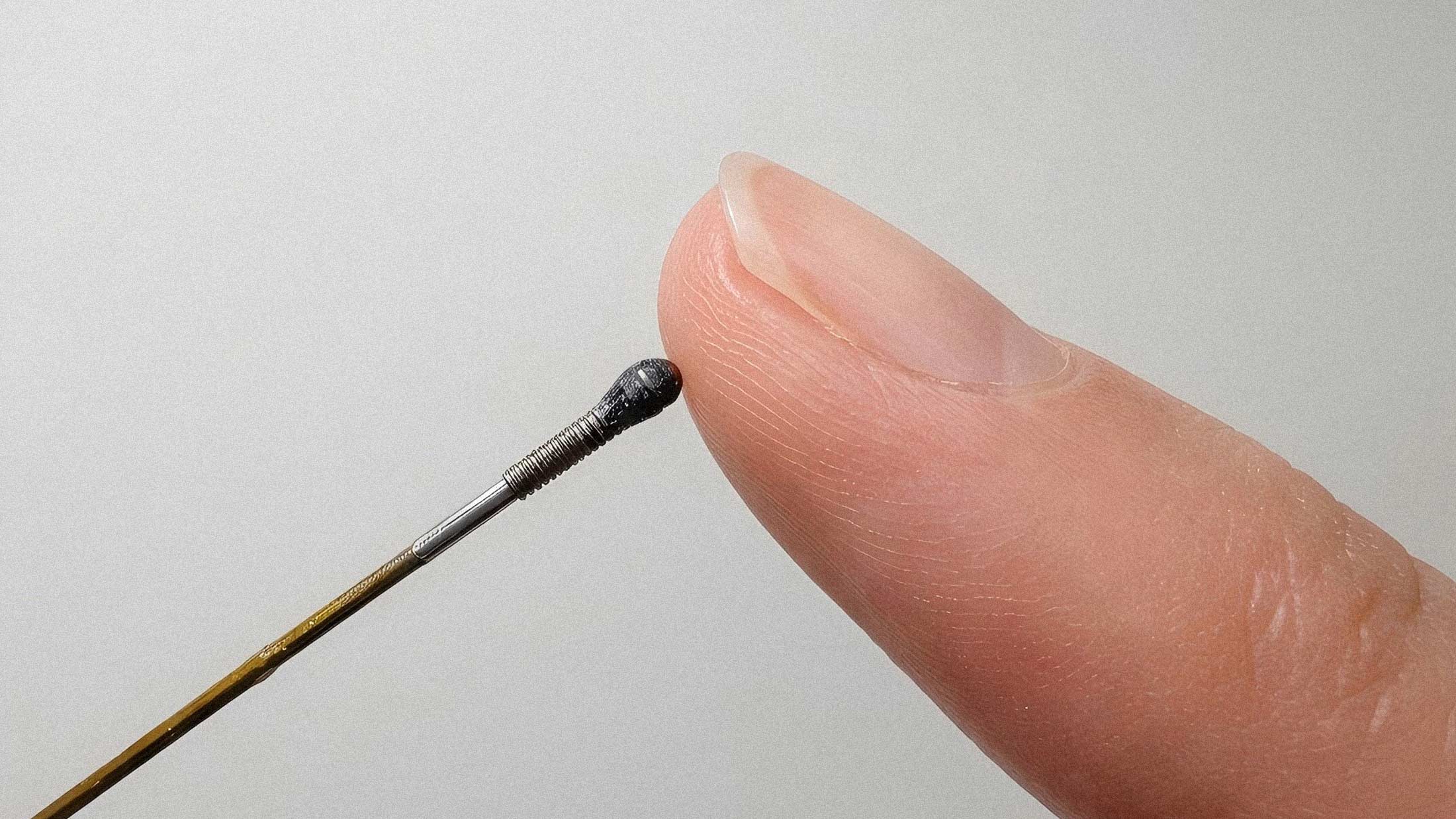

Researchers at Shanghai Jiao Tong University have built an optical force sensor measuring just 1.7 millimeters that can detect forces and torques across all six spatial axes. The device, which uses light instead of conventional electronic signals, represents a significant step forward for tactile sensing in robotics and minimally invasive surgery.

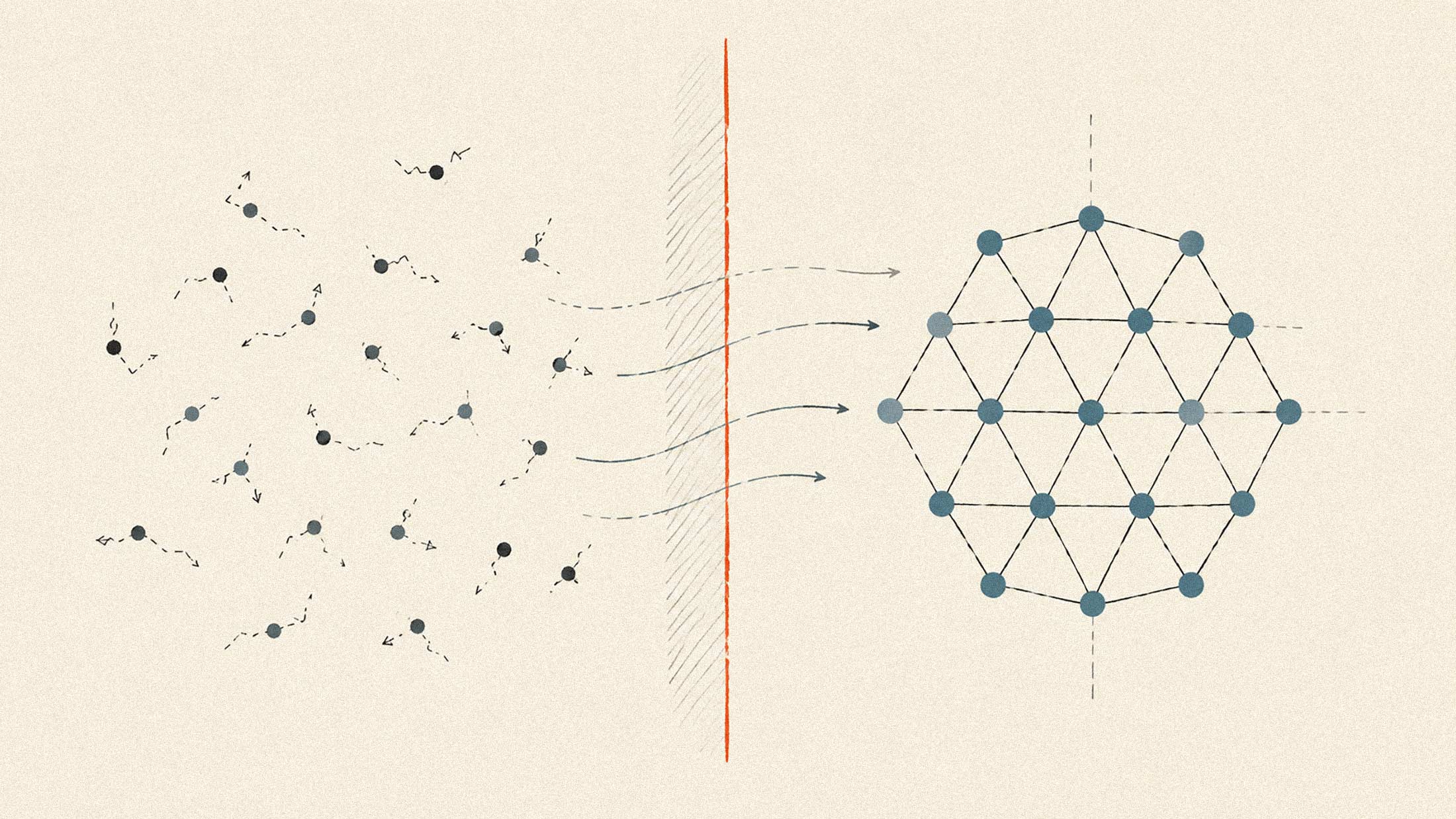

The sensor works by embedding a deformable elastomer tip into an optical cavity attached to a fiber bundle. When the tip contacts an object, even slight deformations change the light patterns inside the cavity. A coherent fiber bundle transmits these changes as images to a camera, where data-driven algorithms interpret the force and torque states acting on the sensor. The approach differs fundamentally from conventional six-axis sensors, which typically rely on strain gauges or distributed sensing elements that are difficult to miniaturize.
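To make the "data-driven" step concrete, here is a minimal sketch of one way an image could be mapped to a six-axis force/torque estimate. It is not the researchers' method, which the article does not describe. It assumes a made-up linear optical response (`make_response`, `render_image`) and swaps in a simple nearest-neighbor lookup over calibration images; all function names, the 16-pixel image size, and the value ranges are hypothetical.

```python
import math
import random

# Hypothetical stand-in for the optics: each of the six wrench components
# (Fx, Fy, Fz, Tx, Ty, Tz) shifts pixel intensities linearly. The real
# cavity response is not described in the article and is likely nonlinear.
N_PIXELS = 16

def make_response(seed=0):
    """Random pixel-by-wrench sensitivity matrix (illustrative only)."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(6)] for _ in range(N_PIXELS)]

def render_image(wrench, response):
    """Simulate the flattened image the camera would see for a given wrench."""
    return [sum(r * w for r, w in zip(row, wrench)) for row in response]

def build_calibration_set(response, n_samples=500, seed=1):
    """Collect (image, wrench) pairs, as a calibration rig might."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_samples):
        wrench = [rng.uniform(-1.0, 1.0) for _ in range(6)]
        data.append((render_image(wrench, response), wrench))
    return data

def estimate_wrench(image, calibration, k=3):
    """Average the wrenches of the k calibration images closest to `image`."""
    nearest = sorted(calibration, key=lambda pair: math.dist(pair[0], image))[:k]
    return [sum(w[i] for _, w in nearest) / len(nearest) for i in range(6)]

response = make_response()
calibration = build_calibration_set(response)
true_wrench = [0.2, -0.1, 0.5, 0.0, 0.3, -0.4]
estimate = estimate_wrench(render_image(true_wrench, response), calibration)
print("true:    ", true_wrench)
print("estimate:", [round(v, 2) for v in estimate])
```

A nearest-neighbor lookup is deliberately crude; a practical system would more likely train a regression model or neural network on far more calibration data. The point is only that the force and torque are inferred jointly from the whole image rather than read off separate sensing elements.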

Why Optical Matters

The design operates through a single optical channel rather than requiring multiple discrete sensing components. This simplification reduces fabrication complexity and eliminates potential failure points. The coherent fiber bundle preserves spatial information while dramatically cutting wiring requirements. For robotics applications where space is measured in fractions of a millimeter, that distinction matters.

Traditional force sensors face a persistent tradeoff: accuracy demands bulk, and miniaturization sacrifices capability. Camera-based tactile sensors like GelSight offer high spatial resolution but require focal lengths that make them inherently bulky. Strain gauge systems need careful placement of multiple components. The Shanghai Jiao Tong approach sidesteps these constraints by treating the entire contact state holistically rather than measuring individual force components separately.

Surgical Applications

Minimally invasive robotic surgery operates through incisions as small as 10 mm, making direct tissue palpation impossible. Surgeons lose the tactile feedback that helps them distinguish healthy tissue from tumors or locate blood vessels. Research has shown that robot-conducted palpation with proper force sensing can reduce maximum applied forces by 35% while improving tumor detection accuracy by 50% compared to manual methods.

The new sensor's size makes it compatible with instruments designed to navigate through narrow anatomical spaces like the eye or vascular channels. The ability to measure not just force magnitude but direction and torque could help surgeons maneuver instruments without causing unintended tissue damage. The researchers also demonstrated the sensor's ability to detect and localize stiff inclusions beneath surfaces using gelatin phantoms, suggesting potential for tactile-guided tumor identification during procedures.

Edge AI Implications

A sensor this small that produces useful multi-axis force data creates interesting possibilities for edge computing in robotics. Current robotic systems often struggle with the latency involved in transmitting high-bandwidth tactile data to centralized processors. Local inference on embedded chips could enable real-time force-responsive control loops.
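Why latency matters for a force-responsive loop can be illustrated with a toy simulation. The sketch below assumes a probe advancing at constant speed into a linear-spring surface and a controller that stops once the force reading, which arrives some number of steps late, reaches a limit. The stiffness, speed, time step, and force limit are illustrative values, not figures from the research.

```python
from collections import deque

def peak_force(delay_steps, stiffness=2.0, speed=0.5, dt=0.001, limit=0.1):
    """Advance a probe into a linear-spring surface until the delayed force
    reading reaches `limit`; return the true force (N) at that moment.

    stiffness is in N/mm, speed in mm/s, dt in seconds. All values are
    illustrative assumptions.
    """
    depth = 0.0
    pending = deque([0.0] * delay_steps)  # readings still in transit
    while True:
        true_force = stiffness * depth
        pending.append(true_force)
        measured = pending.popleft()      # reading taken delay_steps ago
        if measured >= limit:
            return true_force             # force actually applied at stop
        depth += speed * dt

# With dt = 1 ms, each delay step corresponds to 1 ms of sensing latency.
for delay_ms in (1, 10, 50):
    print(delay_ms, "ms delay ->", round(peak_force(delay_ms), 3), "N peak")
```

In this model every extra step of delay adds roughly `stiffness * speed * dt` of overshoot beyond the limit, which is the kind of margin that shrinking the sensing-to-inference path is meant to recover.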

The sensor's output is fundamentally image-based, which aligns well with the vision-oriented neural network architectures that dominate edge AI accelerators. As compute demands for agentic AI continue climbing, having sensors that produce data in formats optimized for neural inference becomes increasingly valuable.

For industrial robotics, the miniaturization opens applications in tight assembly spaces or delicate manipulation tasks where current six-axis sensors are too bulky. Consumer robotics, including prosthetics and assistive devices, could benefit from fingertip-scale force sensing that approaches the density of human mechanoreceptors.

What Comes Next

The sensor's reliance on imaging means it inherits some limitations of camera-based systems, including processing requirements and potential temporal resolution constraints. The researchers used data-driven algorithms to interpret force states, which implies a calibration and training phase, and the resulting model may not generalize across all use cases.

Still, the fundamental approach of replacing distributed electronic sensing elements with a single optical channel is elegant. It shifts complexity from hardware to software, which tends to favor scalability and cost reduction over time. For robots that need to feel their way through the world at ever-smaller scales, that trade-off looks increasingly attractive.