NVIDIA today released Nemotron 3 Nano Omni, an open multimodal model designed to replace the fragmented pipelines that most AI agent systems currently rely on. The model brings vision, audio, and language capabilities together into a single system, enabling agents to reason across video, audio, image, and text without handing data off between separate models.

The pitch is straightforward: every handoff between separate vision, speech, and language models costs time and context, and Nemotron 3 Nano Omni consolidates that stack. By building vision and audio encoders into its 30B-A3B hybrid mixture-of-experts architecture, the model removes the need for separate perception models and, according to NVIDIA, delivers up to 9x higher throughput than other open omni models at similar interactivity.

Architecture and Efficiency

The model pairs the Nemotron 3 hybrid Mamba-Transformer MoE backbone with a C-RADIOv4-H vision encoder and a Parakeet-TDT-0.6B-v2 audio encoder. The backbone interleaves three kinds of layers: 23 Mamba selective state-space layers for efficient long-context processing, 23 MoE layers with 128 experts and top-6 routing, and 6 grouped-query attention layers for global interaction.
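To make "128 experts and top-6 routing" concrete, here is a minimal sketch of top-k expert routing for a single token. The expert and top-k counts come from the figures above. Everything else is an assumption for illustration: the softmax gate over the selected logits, the toy scalar experts, and the function names `route_token` and `moe_forward` do not describe NVIDIA's actual implementation.

```python
import math
import random

NUM_EXPERTS = 128  # experts per MoE layer, as stated in the article
TOP_K = 6          # experts selected per token, as stated in the article


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token and return (index, gate weight) pairs.

    Softmax over the selected logits is an assumption made for illustration;
    the article does not describe the model's actual gating function.
    """
    # Indices of the top_k largest router logits.
    chosen = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Numerically stable softmax restricted to the chosen experts.
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(token, experts, router_logits):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(weight * experts[i](token)
               for i, weight in route_token(router_logits))


# Toy experts (hypothetical stand-ins): expert i multiplies a scalar token by i + 1.
experts = [lambda x, s=i: x * (s + 1) for i in range(NUM_EXPERTS)]
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_token(logits)
output = moe_forward(1.0, experts, logits)
print(len(selected), "of", NUM_EXPERTS, "experts ran for this token")
```

The point of the sketch is the cost profile: only 6 of the 128 expert functions are evaluated per token, which is why a model with a large total parameter count can run with a much smaller active one.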

Nemotron 3 Nano Omni accepts text, images, audio, video, documents, charts, and graphical interfaces as inputs and produces text as output. It has a 256K context window. It is a 30B-parameter mixture-of-experts model with only about 3B parameters active per token, so it offers the knowledge capacity of the larger model at a compute cost closer to that of a much smaller one.
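The 30B-A3B trade-off can be worked through with back-of-the-envelope arithmetic. The sketch below treats the 3B figure as parameters used per token (the usual meaning for MoE models) and applies the common rule of thumb of roughly 2 FLOPs per parameter per token for a forward pass. Both parameter counts are the nominal rounded figures, and the estimate ignores attention cost over long contexts and the vision and audio encoders, so it is a rough guide rather than a measurement.

```python
TOTAL_PARAMS = 30e9   # nominal total parameters (the "30B" in 30B-A3B)
ACTIVE_PARAMS = 3e9   # nominal active parameters (the "A3B")


def approx_forward_flops_per_token(params):
    """Rule of thumb: about 2 FLOPs per parameter per token (an approximation)."""
    return 2 * params


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
sparse_flops = approx_forward_flops_per_token(ACTIVE_PARAMS)
dense_flops = approx_forward_flops_per_token(TOTAL_PARAMS)

print(f"active fraction of parameters: {active_fraction:.0%}")
print(f"approx. per-token compute vs. a dense 30B model: {sparse_flops / dense_flops:.0%}")
```

Note that the saving applies to compute, not memory: all expert weights still have to be resident for the router to choose among them.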

On benchmarks, NVIDIA claims best-in-class accuracy on complex document intelligence leaderboards including MMLongBench-Doc and OCRBenchV2, along with leading results on video and audio benchmarks such as WorldSense and DailyOmni. Compared to other open omni models at the same interactivity threshold, NVIDIA reports 7.4x higher system efficiency for multi-document use cases and 9.2x higher efficiency for video tasks.

The Agentic Play

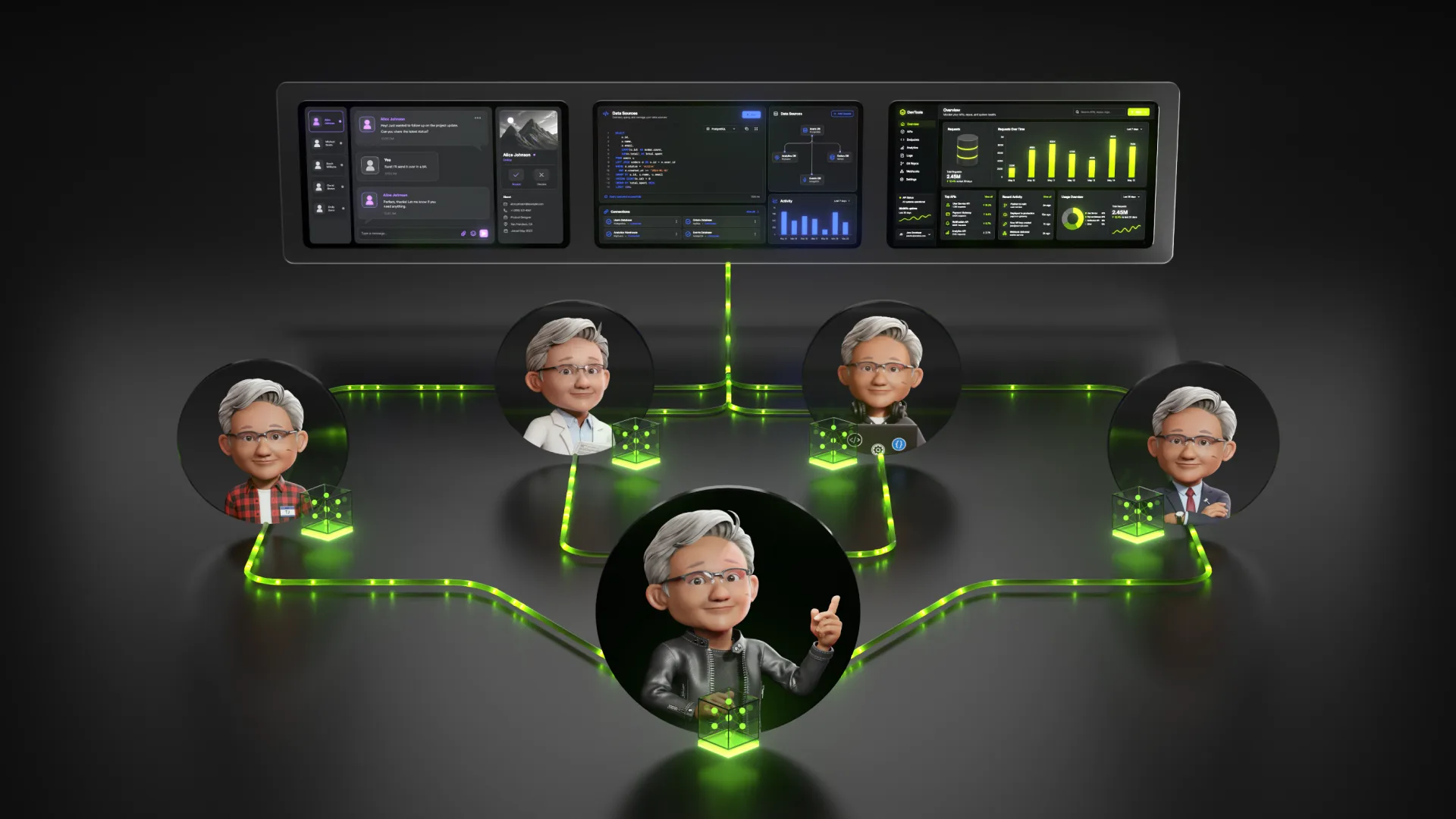

NVIDIA positions Nemotron 3 Nano Omni as the multimodal perception and context sub-agent in larger agent systems. It provides the agent system with eyes and ears: reading screens, interpreting documents, transcribing speech, and analyzing video while maintaining a converged multimodal context across reasoning loops.

This fits a broader trend toward agentic architectures in which specialized sub-agents handle discrete tasks. The model is designed to work alongside other NVIDIA Nemotron models such as Super and Ultra, or alongside proprietary models from other providers.
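The sub-agent pattern described here can be sketched in a few lines. All names below (`Observation`, `SharedContext`, `perception_subagent`, `planner`, `run_agent`) are hypothetical, and both model calls are stubbed out so the control flow runs on its own. A real system would replace the stubs with calls to an omni model and to a larger reasoning model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Observation:
    modality: str  # e.g. "screen", "audio", "document"
    payload: str   # placeholder for raw bytes or a file path


@dataclass
class SharedContext:
    """Running multimodal context carried across reasoning loops."""
    notes: List[str] = field(default_factory=list)


def perception_subagent(obs: Observation, ctx: SharedContext) -> str:
    """Hypothetical stand-in for a call to a single omni model.

    A real system would send obs.payload to the model; this stub only
    produces a text description so the example is runnable.
    """
    summary = f"[{obs.modality}] described: {obs.payload}"
    ctx.notes.append(summary)
    return summary


def planner(ctx: SharedContext) -> str:
    """Hypothetical stand-in for a larger reasoning model that reads the context."""
    return f"plan based on {len(ctx.notes)} observation(s)"


def run_agent(observations: List[Observation]) -> str:
    ctx = SharedContext()
    for obs in observations:
        # One perception model handles every modality, so there is no
        # per-modality dispatch and no context lost between models.
        perception_subagent(obs, ctx)
    return planner(ctx)


result = run_agent([
    Observation("screen", "login form"),
    Observation("audio", "user says 'submit'"),
])
print(result)
```

The design choice worth noticing is that every modality writes into the same context object, which is the "converged multimodal context" idea in miniature.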

H Company is already using the model for its computer-use agents. As CEO Gautier Cloix put it: "To build useful agents, you can't wait seconds for a model to interpret a screen. By building on Nemotron 3 Nano Omni, our agents can rapidly interpret full HD screen recordings."

Availability and Adoption

The model is available today via Hugging Face, OpenRouter, build.nvidia.com, and more than 25 partner platforms. NVIDIA released it with open weights, datasets, and training techniques. As an open, lightweight model, it's designed for deployment on local hardware including NVIDIA DGX Spark.
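For developers who want to try a hosted endpoint, the sketch below builds (but does not send) a multimodal request in the OpenAI-compatible chat completions format that OpenRouter exposes. The model slug is deliberately left as a placeholder, and it is an assumption that the hosted model accepts base64 image data URLs in this shape. Check the provider's model page for the real identifier and supported input types. `build_request` is a hypothetical helper name.

```python
import base64
import json
import urllib.request

API_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_ID = "<model-slug>"  # placeholder: look up the real slug on the provider's model page


def build_request(prompt: str, image_bytes: bytes, api_key: str) -> urllib.request.Request:
    """Build a POST request carrying one text part and one image part."""
    image_b64 = base64.b64encode(image_bytes).decode("ascii")
    payload = {
        "model": MODEL_ID,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url",
                 "image_url": {"url": f"data:image/png;base64,{image_b64}"}},
            ],
        }],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


# Dummy bytes and key for illustration; nothing is sent over the network here.
req = build_request("What is on this screen?", b"not-a-real-png", "YOUR_API_KEY")
# To send it: urllib.request.urlopen(req), then parse the JSON response body.
```

Because text and image travel in one message, there is no separate OCR or captioning call to orchestrate before the language model sees the input.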

Companies already adopting the model include Aible, Applied Scientific Intelligence, Eka Care, Foxconn, H Company, Palantir, and Pyler, with Dell Technologies, DocuSign, Infosys, Oracle, and others evaluating it.

The Nemotron 3 family has seen over 50 million downloads in the past year. This release extends the family into multimodal and agentic territory, and represents NVIDIA's latest move to complement its hardware dominance with an open model ecosystem. For developers building agentic applications, the value proposition is clear: collapse the inference hops, simplify orchestration, and keep the context intact.