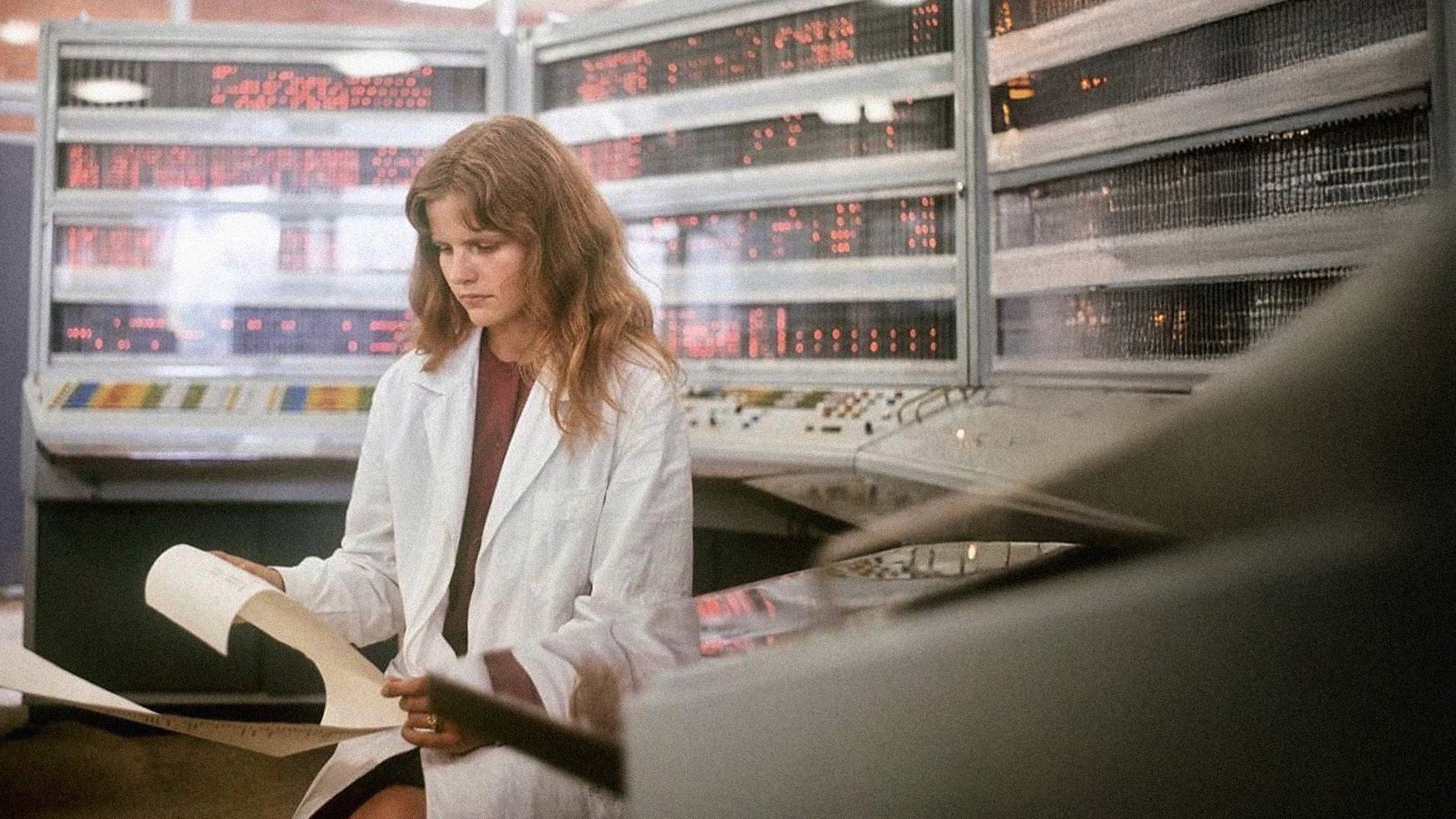

In 1968, Soviet engineers powered up the BESM-6, a machine that consumed 30 kilowatts, occupied up to 200 square meters of floor space, and contained roughly 60,000 transistors and 180,000 semiconductor diodes. It was, by the standards of its day, a marvel. And it did something remarkable: it processed telemetry data from the 1975 Apollo-Soyuz mission faster than NASA's computers could.

A Computer That Ran for Two Decades

The BESM-6 (БЭСМ-6, short for Большая электронно-счётная машина, roughly "Large Electronic Computing Machine") was designed under the leadership of Sergei Lebedev at the Institute of Precision Mechanics and Computer Engineering. Production ran from 1968 to 1987, with 355 units built. For a Soviet machine, that represented an unusually large installed base, and it attracted a dedicated developer community that built multiple operating systems and compilers for Fortran, ALGOL, and Pascal.

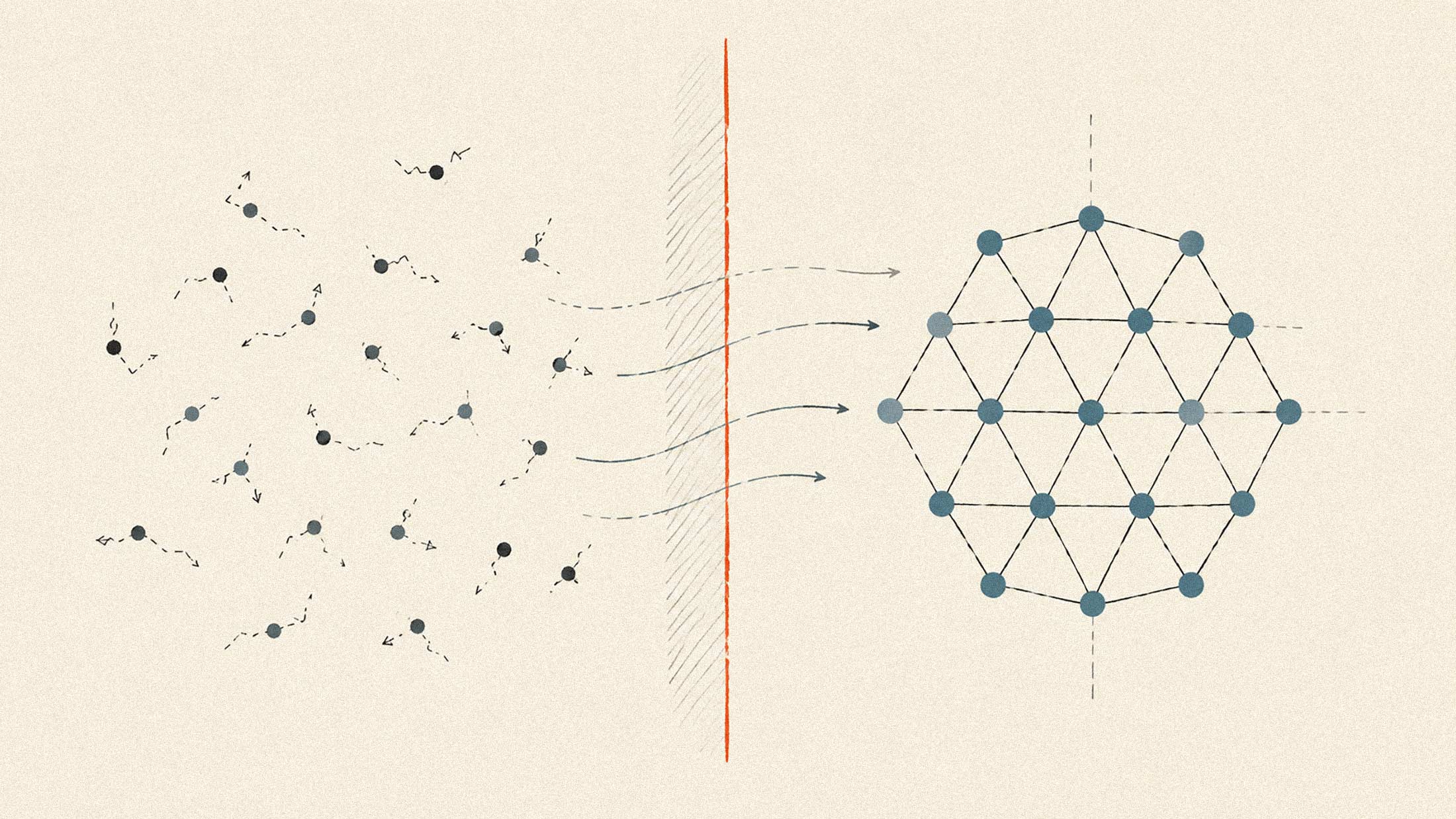

What made the machine special was its architecture. Lebedev implemented what he called the "water pipe principle," a technique we now recognize as instruction pipelining. The machine could have up to 14 instructions in flight at once, each at a different stage of execution. It featured associative memory on fast registers, essentially an early cache, and supported virtual memory addressing and multitasking. Peak performance reached one million instructions per second.
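To see why overlapping instructions matters, here is a minimal sketch of an idealized pipeline in Python. It assumes every stage takes exactly one cycle and that nothing ever stalls, which no real machine achieves. The stage count of 14 is borrowed from the "up to 14 instructions" figure above purely for illustration and is not a claim about how the BESM-6 pipeline was actually laid out. The function names are hypothetical.

```python
def sequential_cycles(n_instructions: int, n_stages: int) -> int:
    """Cycles needed when each instruction finishes every stage before the next begins."""
    return n_instructions * n_stages


def pipelined_cycles(n_instructions: int, n_stages: int) -> int:
    """Cycles needed in an ideal pipeline (one cycle per stage, no stalls).

    The first instruction needs n_stages cycles to pass through the pipe;
    each later instruction completes one cycle after the one before it.
    """
    if n_instructions == 0:
        return 0
    return n_stages + (n_instructions - 1)


def speedup(n_instructions: int, n_stages: int) -> float:
    """Ratio of sequential to pipelined cycle counts (requires at least one instruction)."""
    if n_instructions < 1:
        raise ValueError("need at least one instruction")
    return sequential_cycles(n_instructions, n_stages) / pipelined_cycles(
        n_instructions, n_stages
    )


STAGES = 14  # illustrative assumption, not a documented BESM-6 parameter

if __name__ == "__main__":
    for n in (1, 14, 100, 10_000):
        print(
            f"{n:>6} instructions: "
            f"{sequential_cycles(n, STAGES):>7} cycles sequential, "
            f"{pipelined_cycles(n, STAGES):>6} cycles pipelined, "
            f"speedup {speedup(n, STAGES):.2f}x"
        )
```

In this toy model the speedup approaches the number of stages as the instruction stream gets longer, which is the "water pipe" intuition: once the pipe is full, one result comes out per cycle.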

At its introduction, only Control Data Corporation's machines in the United States could outrun it. The CDC 6600, generally considered the first successful supercomputer, hit roughly 3 megaFLOPS. The BESM-6, at about one million operations per second, was within a factor of three of that peak, and it crushed the average IBM mainframe of the era.

What They Actually Did

These machines were deployed for tasks that sound familiar: weather forecasting, missile defense calculations, satellite telemetry processing, nuclear simulations, and scientific research. Soviet meteorologists used BESM-4 and BESM-6 systems for numerical weather prediction by the 1970s. The machines ran computations for the A-35 anti-ballistic missile defense system. Shared computing centers, known as "centers of collective use," were established to run real-time control systems and coordinate data teleprocessing.

In the West, the picture was similar. CDC 6600s went to Los Alamos, Livermore, CERN, and university computing labs. IBM's System/360 family, introduced in 1964, was designed to handle both commercial and scientific applications, offering a 50-fold performance range across six processor models. These machines processed airline reservations, ran census calculations, and enabled the early financial systems that would eventually become the infrastructure banks still use today.

The Rhyme of History

Today's AI data centers are filling the exact same functional role, scaled by orders of magnitude. Microsoft's Wisconsin AI datacenter is designed as a single massive supercomputer, interconnecting hundreds of thousands of NVIDIA GPUs. According to the company, it will deliver 10 times the performance of the world's fastest conventional supercomputer. Amazon's Project Rainier facility in Indiana spans seven data centers (with plans for 30), will consume 2.2 gigawatts of electricity, and is specifically designed for training and running machine learning models.

The parallels are almost comical. The entire BESM-6 consumed 30 kilowatts. A single rack in a modern AI data center can draw 60 kilowatts or more, twice what the whole Soviet machine used. The BESM-6 occupied up to 200 square meters; Microsoft's storage systems alone span five football fields. The BESM-6 ran 24/7 in computing centers because organizations couldn't afford frequent hardware replacement. Today's GPU clusters run continuously for essentially the same reason: the hardware is too expensive and too scarce to sit idle.
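For a sense of scale, the power figures quoted in this article can be put side by side with a few lines of Python. This is back-of-the-envelope arithmetic only: it uses the 30 kW, 60 kW, and 2.2 GW numbers exactly as stated above, treats the 60 kW rack figure as a flat value rather than a lower bound, and ignores cooling and other overhead. The constant names are made up for this sketch.

```python
# Figures as quoted in this article, converted to watts.
BESM6_POWER_W = 30_000            # whole BESM-6 installation
AI_RACK_POWER_W = 60_000          # one modern AI rack (the article says "60+ kW")
RAINIER_POWER_W = 2_200_000_000   # planned draw of Amazon's Project Rainier site

# How many BESM-6 machines one rack is worth, in power terms.
rack_in_besm6 = AI_RACK_POWER_W / BESM6_POWER_W

# How many BESM-6 machines the whole site is worth, in power terms.
site_in_besm6 = RAINIER_POWER_W / BESM6_POWER_W

# Rough upper bound on racks the site could power if every watt went to racks
# (an assumption: real sites spend a share of power on cooling and networking).
site_in_racks = RAINIER_POWER_W / AI_RACK_POWER_W

if __name__ == "__main__":
    print(f"One AI rack draws as much as {rack_in_besm6:.0f} BESM-6 machines")
    print(f"The 2.2 GW site draws as much as {site_in_besm6:,.0f} BESM-6 machines")
    print(f"2.2 GW could feed at most about {site_in_racks:,.0f} such racks")
```

On these numbers, the planned site draws as much power as tens of thousands of BESM-6 installations, far more than the 355 units ever built.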

The business case hasn't changed much either. The BESM-6 enabled institutions to process complex simulations, coordinate distributed systems, and make predictions about physical systems. That's precisely what AI infrastructure does now. The scale is incomprehensible by 1968 standards, but the organizational logic remains: centralize computational resources, run them at maximum utilization, and pipe in problems that exceed human processing capacity.

COBOL, the language that powered many early mainframe applications, still runs 43% of banking systems according to Reuters. The BESM-6 stayed in production until 1987, and in service at least that long, in part because its successors were delayed. The machines we build today will likely exhibit the same longevity once the current infrastructure buildout slows.